A new automated method to prepare digital photos for analysis will help wildlife researchers who depend on photographs to identify individual animals by their unique markings. A wildlife biologist from Penn State teamed up with scientists from Microsoft Azure, a cloud computing service, using machine learning technology to improve how photographs are turned into usable data for wildlife research.

A paper describing the new technique appears online in the journal Ecological Informatics.

“Many researchers need to identify and collect data on specific individuals in their work, for example, to estimate survival, reproduction and movement,” said Derek Lee, associate research professor of biology at Penn State and principal scientist of the Wild Nature Institute. “Instead of tags and other human-applied markings that could interfere with the animal’s behavior, many researchers take photographs of the animal’s unique markings. We have pattern recognition software to help analyze these photos, but the photos all have to be manually prepared for analysis. Because we often have thousands of photos to go through, this creates a serious bottleneck.”

Lee uses photographs as part of a large ongoing study to understand births, deaths and the movement of more than 3,000 giraffes in East Africa. He and his team take digital photographs of each animal’s unique and unchanging spot patterns to identify them throughout their lives. But before the images can be processed by pattern recognition software to identify individuals, the research team has to manually crop each photo or delineate an area of interest.

Lee collaborated with scientists from Microsoft, who have provided a new image processing service to automate this essential and time-consuming process using machine learning technology deployed on the Microsoft Azure cloud.

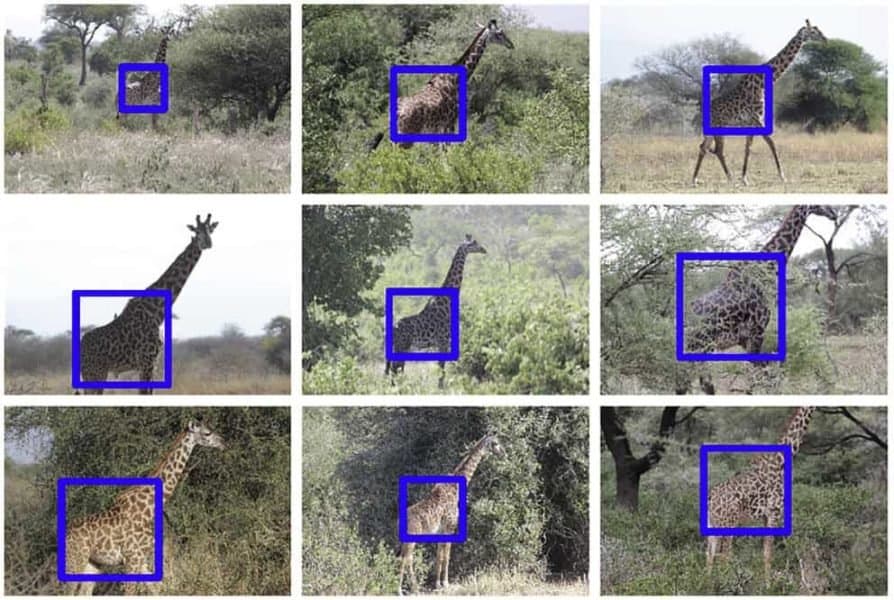

Using a computer algorithm for object detection, the Microsoft team trained a program to recognize giraffe torsos using existing photos that had been annotated by hand. The program was iteratively improved using an active learning process, in which the system showed predicted cropping squares on new images to a human who could quickly verify or correct the results. These new images were then fed back into the training algorithm to further update and improve the program. The resulting system identifies, with very high accuracy, the location of giraffe torsos in images, even when the giraffe is a small portion of the image or its torso is partially blocked by vegetation.
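For readers who want a concrete picture of that verify-and-retrain cycle, the following is a minimal Python sketch of one active learning round. It is an illustration only, not the Microsoft team's implementation: the `ToyDetector` class, the `active_learning_round` function, the file names and the box coordinates are all hypothetical, and the "training" step here simply memorizes human-approved boxes rather than updating a real object detection model.

```python
from typing import Callable, Dict, List, Tuple

# A crop box in pixels: (left, top, right, bottom). Hypothetical convention.
Box = Tuple[int, int, int, int]


def active_learning_round(
    image_ids: List[str],
    predict: Callable[[str], Box],
    review: Callable[[str, Box], Box],
    train: Callable[[Dict[str, Box]], None],
    labeled: Dict[str, Box],
) -> int:
    """Run one round: propose boxes, let a human verify or correct, retrain.

    Returns how many proposals the reviewer had to correct.
    """
    corrections = 0
    for image_id in image_ids:
        proposed = predict(image_id)          # model's predicted crop box
        accepted = review(image_id, proposed)  # human keeps or fixes it
        if accepted != proposed:
            corrections += 1
        labeled[image_id] = accepted           # add to the training set
    train(labeled)                             # feed results back to the model
    return corrections


class ToyDetector:
    """Stand-in for a real detector; it only memorizes approved boxes."""

    def __init__(self, default_box: Box) -> None:
        self.default_box = default_box
        self.known: Dict[str, Box] = {}

    def predict(self, image_id: str) -> Box:
        return self.known.get(image_id, self.default_box)

    def train(self, labeled: Dict[str, Box]) -> None:
        self.known = dict(labeled)


# Hypothetical "correct" torso boxes that the human reviewer would supply.
truth: Dict[str, Box] = {
    "giraffe_001.jpg": (10, 20, 110, 220),
    "giraffe_002.jpg": (0, 0, 100, 200),
}


def human_review(image_id: str, proposed: Box) -> Box:
    # Simulated reviewer: always returns the correct box for the image.
    return truth[image_id]


detector = ToyDetector(default_box=(0, 0, 100, 200))
labeled: Dict[str, Box] = {}

first_round = active_learning_round(
    list(truth), detector.predict, human_review, detector.train, labeled
)
second_round = active_learning_round(
    list(truth), detector.predict, human_review, detector.train, labeled
)
print("corrections in round 1:", first_round)
print("corrections in round 2:", second_round)
```

In this toy setup the number of human corrections drops between rounds because the stand-in detector has seen the approved boxes. A real system would instead retrain an object detection model on the growing labeled set and show the reviewer fresh images each round.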

“The system achieves near-perfect recognition of giraffe torsos without expensive hardware requirements like a dedicated, high-powered graphics processing unit,” said Lee. “It is wonderful how the Azure team automated this tedious aspect of our work. It used to take us a week to process our new images after a survey, and now it is done in minutes. This system moves us closer to fully automatic animal identification from photos.”

The new system will dramatically accelerate Lee’s research on giraffe populations, which have rapidly declined across Africa due to habitat loss and illegal killing for meat.

“Giraffe are big animals, and they cover big distances, so naturally we are using big data to learn where they are doing well, where they are not, and why, so we can protect and connect the areas important to giraffe conservation,” said Lee. “We needed new tools to accomplish this, and there was a harmonic conjunction with the Azure technology that made our work possible.”

This process will also be useful to researchers studying other animals with unique identifying patterns — including some wild cats, elephants, salamanders, fish, penguins and marine mammals. The system could be trained to identify and crop a photo to a region of interest specific to these species.

In addition to Lee, the research team includes Patrick Buehler, Bill Carroll, Ashish Bhatia and Vivek Gupta at Microsoft Azure. This work was funded in part by the Sacramento Zoo, Columbus Zoo, Cincinnati Zoo, Safari West, Tierpark Berlin, and Tulsa Zoo.