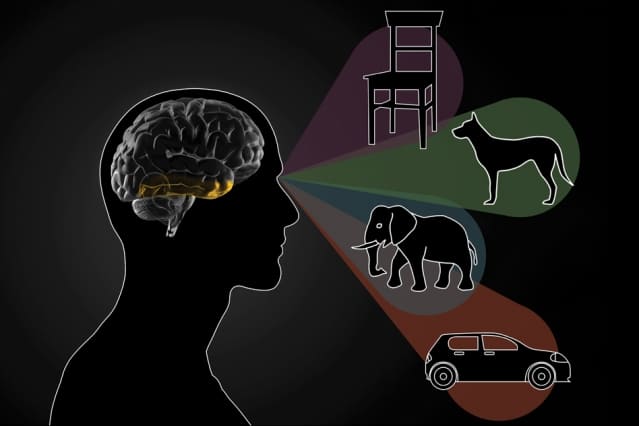

As visual information flows into the brain through the retina, the visual cortex transforms the sensory input into coherent perceptions. Neuroscientists have long hypothesized that a part of the visual cortex called the inferotemporal (IT) cortex is necessary for the key task of recognizing individual objects, but the evidence has been inconclusive.

In a new study, MIT neuroscientists have found clear evidence that the IT cortex is indeed required for object recognition; they also found that subsets of this region are responsible for distinguishing different objects.

In addition, the researchers have developed computational models that describe how these neurons transform visual input into a mental representation of an object. They hope such models will eventually help guide the development of brain-machine interfaces (BMIs) that could be used for applications such as generating images in the mind of a blind person.

“We don’t know if that will be possible yet, but this is a step on the pathway toward those kinds of applications that we’re thinking about,” says James DiCarlo, the head of MIT’s Department of Brain and Cognitive Sciences, a member of the McGovern Institute for Brain Research, and the senior author of the new study.

Rishi Rajalingham, a postdoc at the McGovern Institute, is the lead author of the paper, which appears in the March 13 issue of Neuron.

Distinguishing objects

In addition to its hypothesized role in object recognition, the IT cortex also contains “patches” of neurons that respond preferentially to faces. Beginning in the 1960s, neuroscientists discovered that damage to the IT cortex could produce impairments in recognizing non-face objects, but it has been difficult to determine precisely how important the IT cortex is for this task.

The MIT team set out to find more definitive evidence for the IT cortex’s role in object recognition. They selectively shut off neural activity in very small areas of the cortex and then measured how the disruption affected an object discrimination task. In animals that had been trained to distinguish between objects such as elephants, bears, and chairs, they used a drug called muscimol to temporarily turn off subregions about 2 millimeters in diameter. Each of these subregions represents about 5 percent of the entire IT cortex.

These experiments were the first time that researchers have been able to silence such small regions of IT cortex while measuring behavior over many object discriminations. They revealed that the IT cortex is not only necessary for distinguishing between objects but also divided into areas that handle different elements of object recognition.

The researchers found that silencing each of these tiny patches produced distinctive impairments in the animals’ ability to distinguish between certain objects. For example, one subregion might be involved in distinguishing chairs from cars, but not chairs from dogs. Each region was involved in 25 to 30 percent of the tasks that the researchers tested, and regions that were closer to each other tended to have more overlap between their functions, while regions far away from each other had little overlap.

“We might have thought of it as a sea of neurons that are completely mixed together, except for these islands of ‘face patches.’ But what we’re finding, which many other studies had pointed to, is that there is large-scale organization over the entire region,” Rajalingham says.

The features that each of these regions responds to are difficult to classify, the researchers say. The regions are not specific to objects such as dogs, nor to easy-to-describe visual features such as curved lines.

“It would be incorrect to say that because we observed a deficit in distinguishing cars when a certain neuron was inhibited, this is a ‘car neuron,’” Rajalingham says. “Instead, the cell is responding to a feature that we can’t explain that is useful for car discriminations. There has been work in this lab and others that suggests that the neurons are responding to complicated nonlinear features of the input image. You can’t say it’s a curve, or a straight line, or a face, but it’s a visual feature that is especially helpful in supporting that particular task.”

Bevil Conway, a principal investigator at the National Eye Institute, says the new study makes significant progress toward answering the critical question of how neural activity in the IT cortex produces behavior.

“The paper makes a major step in advancing our understanding of this connection, by showing that blocking activity in different small local regions of IT has a different selective deficit on visual discrimination. This work advances our knowledge not only of the causal link between neural activity and behavior but also of the functional organization of IT: How this bit of brain is laid out,” says Conway, who was not involved in the research.

Brain-machine interface

The experimental results were consistent with computational models that DiCarlo, Rajalingham, and others in their lab have created to try to explain how IT cortex neuron activity produces specific behaviors.

“That is interesting not only because it says the models are good, but because it implies that we could intervene with these neurons and turn them on and off,” DiCarlo says. “With better tools, we could have very large perceptual effects and do real BMI in this space.”

The researchers plan to continue refining their models, incorporating new experimental data from even smaller populations of neurons, in hopes of developing ways to generate visual perception in a person’s brain by inducing a specific pattern of neuronal activity. Technology to deliver this kind of input to a person’s brain could lead to new strategies to help blind people see certain objects.

“This is a step in that direction,” DiCarlo says. “It’s still a dream, but that dream someday will be supported by the models that are built up by this kind of work.”

The research was funded by the National Eye Institute, the Office of Naval Research, and the Simons Foundation.