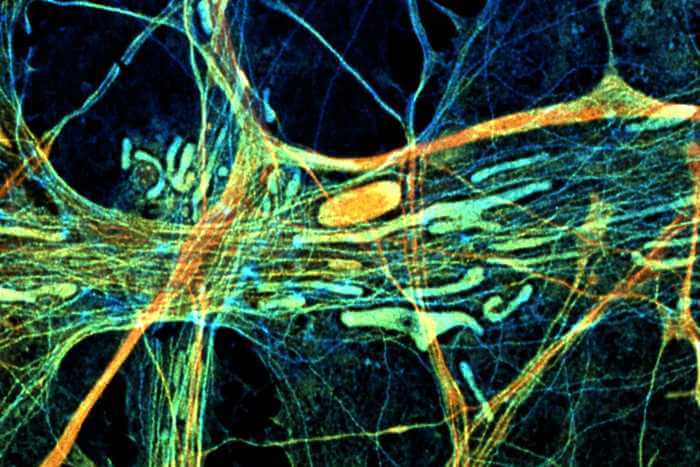

The brain is a complex network containing billions of neurons. Each neuron communicates simultaneously with thousands of others via its synapses (links) and collects incoming signals through several extremely long, branched “arms” called dendritic trees.

For the last 70 years, a core hypothesis of neuroscience has been that the brain learns by modifying the strength of its synapses according to the relative firing activity of the neurons they connect. This hypothesis has been the basis for machine learning and deep learning algorithms, which increasingly affect almost all aspects of our lives. After seven decades, this long-standing hypothesis has now been called into question.

In an article published today in Scientific Reports, researchers from Bar-Ilan University in Israel reveal that the brain learns in a completely different way than has been assumed since the 20th century. The new experimental observations suggest that learning is mainly performed in neuronal dendritic trees, where the trunk and branches of the tree modify their strength, rather than solely in the synapses (the dendritic leaves), as was previously thought. These observations also indicate that the neuron is a much more complex, dynamic and computational element than a binary element that either fires or does not. A single neuron can realize deep learning algorithms that previously required a complex artificial network consisting of thousands of connected neurons and synapses.

“We’ve shown that efficient learning on dendritic trees of a single neuron can artificially achieve success rates approaching unity for handwritten digit recognition. This finding paves the way for an efficient biologically inspired new type of AI hardware and algorithms,” said Prof. Ido Kanter, of Bar-Ilan’s Department of Physics and Gonda (Goldschmied) Multidisciplinary Brain Research Center, who led the research. “This simplified learning mechanism represents a step towards a plausible biological realization of backpropagation algorithms, which are currently the central technique in AI,” added Shiri Hodassman, a PhD student and one of the key contributors to this work.

The efficient learning on dendritic trees is based on experimental evidence from Kanter and his research team for sub-dendritic adaptation in neuronal cultures, together with other anisotropic properties of neurons, such as different spike waveforms, refractory periods and maximal transmission rates.

The brain’s clock is a billion times slower than that of existing parallel GPUs, yet the brain achieves comparable success rates in many perceptual tasks.

The new demonstration of efficient learning on dendritic trees calls for new approaches in brain research, as well as for the generation of counterpart hardware aiming to implement advanced AI algorithms. If one can implement slow brain dynamics on ultrafast computers, the sky is the limit.