If human eyes came in a package, it would have to be labeled “Natural product. Some variation may occur.” That is because the million-plus retinal ganglion cells that send signals to the human brain for interpretation don’t all perform exactly the same way.

They are what an engineer would call ‘noisy’ — there is variance between cells and from one moment to the next. And yet, when we see a photograph of a beautiful flower, it looks sharp and colorful and we know what it is.

The brain’s visual centers must be adept at filtering out the noise from the retinal cells to get to the true signal, and those filters have to constantly adapt to light conditions to keep the signal clear. Prosthetic retinas and neural implants are going to need this same kind of adaptive noise-filtering to succeed, new research suggests.

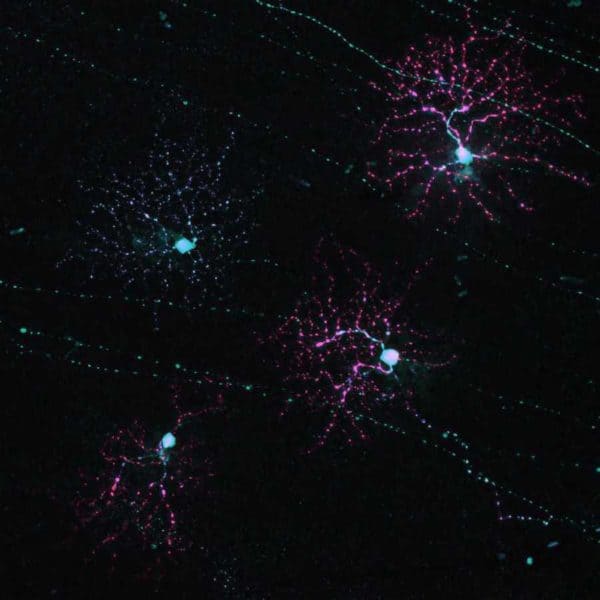

“Neurons in the brain are noisy — meaning that when the same stimulus is presented, the neurons do not produce the same response each time,” said Greg Field, an assistant professor of neurobiology at Duke University, who has coauthored a new study in Nature Communications with a Canadian colleague on how the brain compensates for visual noise.

“If brain-machine interfaces do not account for noise correlations among neurons, they are likely to perform poorly,” Field said.

Working in a special darkroom inside Field’s laboratory, Duke graduate student Kiersten Ruda exposed small squares of living rat retina to patterns and videos under varying light conditions while an array of more than 500 tiny electrodes beneath the retinal cells recorded the signals that are normally sent down the optic nerve to the brain.

“All of this is done in total darkness with the aid of night vision goggles, so that we can preserve the peak sensitivity of the retinas,” Field said.

The researchers ran those nerve impulses through software instead of a brain to see how variable and noisy the signals were, and to experiment with what kind of filtering the brain would need to achieve a clear signal under different light conditions, like moonlight versus sunlight.

Sensory systems like the eyes, nose and ears rely on populations of sensors, because each individual sensing cell is noisy. However, this noise is shared or ‘correlated’ across the cells, which makes it harder for the brain to recover the original signal.

At high light levels, the computer could improve decoding by about 20 percent by using the noise correlations, as opposed to just assuming each neuron was noisy in its own way. But at low light levels, the improvement rose to 100 percent.
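To see why modeling shared noise can matter for decoding, consider a toy example. The sketch below is not the decoder used in the study. It is a minimal illustration that assumes two cells with Gaussian noise, a made-up difference in mean response between two stimuli (`delta_mu`), and made-up correlation values (`rho`). It compares a linear decoder that knows the true noise correlations with one that assumes each cell is noisy on its own, scoring both by a standard discriminability measure (squared d').

```python
import numpy as np


def squared_dprime(delta_mu, true_cov, assume_independent):
    """Squared discriminability (d'^2) of a linear decoder for two stimuli.

    The decoder builds its weights from its own model of the noise, but its
    performance is scored against the true noise covariance.
    """
    if assume_independent:
        # Keep each cell's variance, discard the correlations between cells.
        model_cov = np.diag(np.diag(true_cov))
    else:
        model_cov = true_cov
    weights = np.linalg.solve(model_cov, delta_mu)
    signal = weights @ delta_mu
    noise_variance = weights @ true_cov @ weights
    return float(signal ** 2 / noise_variance)


def two_cell_cov(rho):
    """Noise covariance for two unit-variance cells with correlation rho."""
    return np.array([[1.0, rho], [rho, 1.0]])


# Hypothetical values chosen only for illustration, not taken from the study.
delta_mu = np.array([1.0, 0.2])  # difference in mean response to two stimuli

results = {}
for label, rho in [("weak correlation", 0.2), ("strong correlation", 0.8)]:
    cov = two_cell_cov(rho)
    aware = squared_dprime(delta_mu, cov, assume_independent=False)
    naive = squared_dprime(delta_mu, cov, assume_independent=True)
    results[label] = (aware, naive)
    print(f"{label}: correlation-aware d'^2 = {aware:.2f}, "
          f"independent-noise d'^2 = {naive:.2f}")
```

In this toy setup, the two decoders perform almost equally when the correlation is weak, and the correlation-aware decoder pulls well ahead when the correlation is strong. That mirrors, in spirit only, the reported pattern of a larger benefit at low light levels, where the noise is more strongly correlated.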

Indeed, earlier research by other groups has found that assuming uncorrelated noise in the cortex of the brain can make decoding 30 percent worse. Field’s research in the retina shows these assumptions lead to even larger losses of information.

“It helps to understand this correlated noise if you think of an orchestra,” Field said in an email. “All the members of the orchestra are playing a little out of tune, that’s the ‘noise,’ but how out-of-tune they are depends on their neighbors, that’s the correlation. For example, all the violins are playing a little sharp, while the flutes are a little flat, and the cellos are very flat. A major question in neuroscience has been the extent to which this correlated noise corrupts the ability of the brain to figure out what song is being played.”

“We showed that to utilize the benefit of having lots of sensory cells, the brain must know how to filter out this correlated noise,” Field said. But the problem is even more complex because the amount of correlated noise depends on the amount of light, with more noise at lower light levels like moonlight.

Field said that the study’s findings, aside from being difficult and fascinating observations, point to the challenges ahead for engineers who hope to replicate the retina in a prosthetic or in the sort of neural implant Elon Musk has announced.

“To make an ideal retinal prosthetic (a bionic eye), it would probably need to incorporate these noise correlations for the brain to correctly interpret the signals it receives from the prosthetic,” Field said. Similarly, computers that read out brain activity from neural implants will probably need to have a model of noise correlations among neurons.

But it won’t be easy. Field said the researchers have yet to understand the structure of these noise correlations across the brain.

“If the brain were to assume that the noise is independent across neurons, instead of having an accurate model of how it is correlated, we show the brain would suffer from a catastrophic loss of information about the stimulus,” Field said.

This research was funded by the U.S. National Institutes of Health, the National Eye Institute, the Whitehead Scholars Award, the A.P. Sloan Foundation, the Canada Research Chairs program and the Natural Sciences and Engineering Research Council of Canada.

CITATION: “Ignoring Correlated Activity Causes a Failure of Retinal Population Codes,” Kiersten Ruda, Joel Zylberberg, Greg Field. Nature Communications, Sept. 14, 2020. DOI: 10.1038/s41467-020-18436-2