Vast amounts of data related to climate change are being compiled by research groups all over the world. Because these many and varied sources yield different climate projections, information must be combined across data sets to arrive at a consensus on future climate estimates.

In a paper published last December in the SIAM Journal on Uncertainty Quantification, authors Matthew Heaton, Tamara Greasby, and Stephan Sain propose a statistical hierarchical Bayesian model that consolidates climate change information from observation-based data sets and climate models.

“The vast array of climate data—from reconstructions of historic temperatures and modern observational temperature measurements to climate model projections of future climate—seems to agree that global temperatures are changing,” says author Matthew Heaton. “Where these data sources disagree, however, is by how much temperatures have changed and are expected to change in the future. Our research seeks to combine many different sources of climate data, in a statistically rigorous way, to determine a consensus on how much temperatures are changing.”

Using a hierarchical model, the authors combine information from these various sources to obtain an ensemble estimate of current and future climate along with an associated measure of uncertainty. “Each climate data source provides us with an estimate of how much temperatures are changing. But, each data source also has a degree of uncertainty in its climate projection,” says Heaton. “Statistical modeling is a tool to not only get a consensus estimate of temperature change but also an estimate of our uncertainty about this temperature change.”

The approach proposed in the paper combines information from observation-based data, general circulation models (GCMs) and regional climate models (RCMs).

Observation-based data sets, which focus mainly on local and regional climate, are obtained by taking raw climate measurements from weather stations and applying them to a grid defined over the globe. This allows the final data product to provide an aggregate measure of climate rather than be restricted to individual weather data sets. Such data sets are restricted to current and historical time periods. A related source of information is reanalysis data sets, in which numerical model forecasts and weather station observations are combined into a single gridded reconstruction of climate over the globe.
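To make the gridding step concrete, here is a minimal Python sketch that averages station temperatures within fixed latitude/longitude cells. It is only an illustration: the function name `grid_average`, the 5-degree cell size, and the station values are all hypothetical, and real observation-based products use more sophisticated interpolation than a simple cell average.

```python
from collections import defaultdict
from math import floor


def grid_average(stations, cell_deg=5.0):
    """Average station temperatures within each lat/lon grid cell.

    stations: iterable of (latitude, longitude, temperature) tuples.
    cell_deg: assumed cell size in degrees (hypothetical choice).
    Returns a dict mapping (lat_index, lon_index) to the mean temperature.
    """
    cells = defaultdict(list)
    for lat, lon, temp in stations:
        # Each station falls into the cell whose index is floor(coord / size).
        key = (floor(lat / cell_deg), floor(lon / cell_deg))
        cells[key].append(temp)
    return {key: sum(vals) / len(vals) for key, vals in cells.items()}


# Hypothetical station readings (degrees C); not real data.
stations = [
    (36.2, -118.4, 24.0),
    (37.9, -116.1, 26.0),
    (41.0, -96.5, 22.5),
]
gridded = grid_average(stations)
```

The first two stations share a cell and are averaged together, while the third occupies its own cell, so the result is an aggregate over the grid rather than a list of individual station records.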

GCMs are computer models that capture the physical processes governing the atmosphere and oceans to simulate the response of temperature, precipitation, and other meteorological variables in different scenarios. While a GCM portrayal of temperature would not be accurate for a given day, these models give fairly good estimates of long-term average temperatures, such as averages over 30-year periods, which closely match observed data. A big advantage of GCMs over observed and reanalyzed data is that GCMs are able to simulate future climate.

RCMs are used to simulate climate over a specific region, as opposed to global simulations created by GCMs. Since climate in a specific region is affected by the rest of the earth, atmospheric conditions such as temperature and moisture at the region’s boundary are estimated by using other sources such as GCMs or reanalysis data.

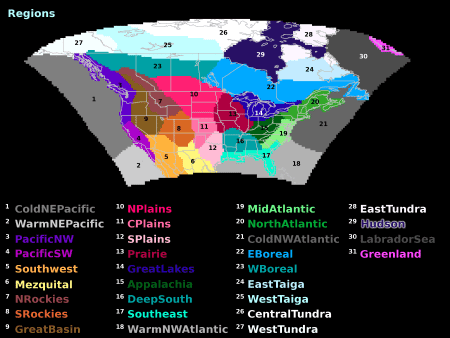

By combining information from multiple observation-based data sets, GCMs and RCMs, the model obtains an estimate and a measure of uncertainty for the average temperature, the temporal trend, and the variability of seasonal average temperatures. The model was used to analyze average summer and winter temperatures for the Pacific Southwest, Prairie and North Atlantic regions (seen in the image above), which represent three distinct climates. The assumption is that climate models behave differently in each of these regions, so data from each region were considered individually and the model was fit to each region separately.

“Our understanding of how much temperatures are changing is reflected in all the data available to us,” says Heaton. “For example, one data source might suggest that temperatures are increasing by 2 degrees Celsius while another source suggests temperatures are increasing by 4 degrees. So, do we believe a 2-degree increase or a 4-degree increase? The answer is probably ‘neither’ because combining data sources together suggests that increases would likely be somewhere between 2 and 4 degrees. The point is that no single data source has all the answers. And, only by combining many different sources of climate data are we really able to quantify how much we think temperatures are changing.”
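The intuition in the quote above can be sketched with a simple inverse-variance weighted average, in which more certain sources count for more. This is a deliberately simplified stand-in, not the authors' hierarchical Bayesian model; the function name `combine_estimates` and the standard deviations attached to the 2-degree and 4-degree estimates are assumptions made up for illustration.

```python
def combine_estimates(estimates, std_devs):
    """Combine independent estimates by inverse-variance weighting.

    Returns the weighted mean and the standard deviation of that mean.
    Assumes the sources are independent, which the paper itself does not.
    """
    weights = [1.0 / (s ** 2) for s in std_devs]
    total = sum(weights)
    mean = sum(w * x for w, x in zip(weights, estimates)) / total
    return mean, (1.0 / total) ** 0.5


# Two sources: +2 C and +4 C, with hypothetical standard deviations.
combined, uncertainty = combine_estimates([2.0, 4.0], [1.0, 2.0])
```

With these assumed uncertainties the combined estimate lands between the two sources and closer to the more certain one, and its standard deviation is smaller than that of either source alone.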

While most previous work of this kind focuses on mean or average values, the authors of this paper acknowledge that climate in the broader sense encompasses variations between years, trends, averages and extreme events. Hence the hierarchical Bayesian model used here simultaneously considers the average, linear trend and interannual variability (variation between years). Many previous models also assume independence between climate models, whereas this paper accounts for commonalities shared by various models (such as physical equations or fluid dynamics) and for correlations between data sets.
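For readers who want to see what these three quantities look like for a single temperature series, the following Python sketch computes them with an ordinary least-squares line. It is not the paper's Bayesian model, which estimates them jointly across many data sources; the function name `summarize_series` and the yearly values are hypothetical.

```python
from statistics import mean, stdev


def summarize_series(years, temps):
    """Return (average, linear trend per year, interannual variability).

    The trend is the least-squares slope; the variability is the sample
    standard deviation of the residuals about the fitted line.
    """
    y_bar = mean(years)
    t_bar = mean(temps)
    sxx = sum((y - y_bar) ** 2 for y in years)
    sxy = sum((y - y_bar) * (t - t_bar) for y, t in zip(years, temps))
    slope = sxy / sxx
    # Year-to-year departures from the fitted trend line.
    residuals = [t - (t_bar + slope * (y - y_bar)) for y, t in zip(years, temps)]
    return t_bar, slope, stdev(residuals)


# Hypothetical summer-average temperatures (degrees C); not real data.
years = [2001, 2002, 2003, 2004, 2005]
temps = [20.0, 20.3, 20.1, 20.6, 20.5]
average, trend, variability = summarize_series(years, temps)
```

Here `average` is the mean level, `trend` is the change per year, and `variability` measures how much individual years scatter around that trend.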

“While our work is a good first step in combining many different sources of climate information, we still fall short in that we still leave out many viable sources of climate information,” says Heaton. “Furthermore, our work focuses on increases/decreases in temperatures, but similar analyses are needed to estimate consensus changes in other meteorological variables such as precipitation. Finally, we hope to expand our analysis from regional temperatures (say, over just a portion of the U.S.) to global temperatures.”

To read other SIAM Nuggets, which explain current high-level research involving applications of mathematics in popular-science terms, go to http://connect.siam.org/category/siam-nuggets/.