Artificial intelligence (AI) and machine learning tools have received a lot of attention recently, with the majority of discussions focusing on proper use. However, this technology has a wide range of practical applications, from predicting natural disasters to addressing racial inequalities and, now, assisting in cancer surgery.

A new clinical and research partnership between the UNC Department of Surgery, the Joint UNC-NCSU Department of Biomedical Engineering, and the UNC Lineberger Comprehensive Cancer Center has created an AI model that can predict whether or not cancerous tissue has been fully removed from the body during breast cancer surgery. Their findings were published in Annals of Surgical Oncology.

“Some cancers you can feel and see, but we can’t see microscopic cancer cells that may be present at the edge of the tissue removed. Other cancers are completely microscopic,” said senior author Kristalyn Gallagher, DO, section chief of breast surgery in the Division of Surgical Oncology and UNC Lineberger member. “This AI tool would allow us to more accurately analyze tumors removed surgically in real-time, and increase the chance that all of the cancer cells are removed during the surgery. This would prevent the need to bring patients back for a second or third surgery.”

During surgery, the surgeon will resect the tumor (also referred to as a specimen) and take a small amount of surrounding healthy tissue in an attempt to remove all of the cancer in the breast. The specimen is then photographed using a mammography machine and reviewed by the team to make sure the area of abnormality was removed. It is then sent to pathology for further analysis.

The pathologist can determine whether cancer cells extend to the specimen’s outer edge, or pathological margin. If cancer cells are present on the edge of the tissue removed, there is a chance that additional cancer cells still remain in the breast. The surgeon might then have to perform another surgery to remove more tissue and ensure the cancer has been completely removed. However, the pathology analysis can take up to a week after surgery to complete, while specimen mammography, or photographing the specimen with an X-ray, can be done immediately in the operating room.

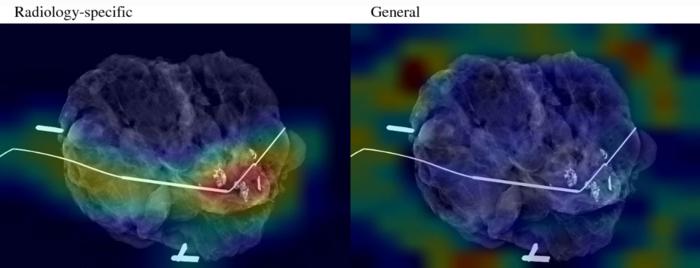

To “teach” their AI model what positive and negative margins look like, researchers used hundreds of these specimen mammogram images, matched with the final specimen reports from pathologists. To help their model, the researchers also gathered demographic and clinical data from patients, such as age, race, tumor type, and tumor size.
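The matching step described above can be pictured as a simple join on a specimen identifier. The Python sketch below is illustrative only and is not the researchers' code: the `LabeledSpecimen` class, the `build_training_set` function, the field names, and the toy records are all hypothetical, and only a few of the patient attributes mentioned in the article are included.

```python
from dataclasses import dataclass


@dataclass
class LabeledSpecimen:
    """One training example: an image, patient data, and its pathology label."""
    specimen_id: str
    image_path: str
    age: int
    tumor_type: str
    tumor_size_mm: float
    margin_positive: bool  # label taken from the final pathology report


def build_training_set(images, patient_data, pathology_labels):
    """Join the three sources on specimen ID, skipping incomplete records."""
    examples = []
    for specimen_id, image_path in sorted(images.items()):
        patient = patient_data.get(specimen_id)
        label = pathology_labels.get(specimen_id)
        if patient is None or label is None:
            # Without a matched report and patient record,
            # the image cannot serve as a labeled example.
            continue
        examples.append(LabeledSpecimen(
            specimen_id=specimen_id,
            image_path=image_path,
            age=patient["age"],
            tumor_type=patient["tumor_type"],
            tumor_size_mm=patient["tumor_size_mm"],
            margin_positive=label,
        ))
    return examples


# Toy records for illustration only; none of this is data from the study.
images = {"S001": "s001.png", "S002": "s002.png", "S003": "s003.png"}
patient_data = {
    "S001": {"age": 54, "tumor_type": "ductal", "tumor_size_mm": 18.0},
    "S002": {"age": 61, "tumor_type": "lobular", "tumor_size_mm": 12.5},
}
pathology_labels = {"S001": True, "S002": False, "S003": False}

training_set = build_training_set(images, patient_data, pathology_labels)
for example in training_set:
    print(example.specimen_id, example.margin_positive)
```

In this toy run, specimen `S003` is dropped because it has no patient record, which reflects the idea that every image needs a matched label and patient data before the model can learn from it.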

After calculating the model’s accuracy in predicting pathologic margins, researchers compared that data to the typical accuracy of human interpretation and discovered that the AI model performed as well as humans, if not better.
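As a rough illustration of how such a comparison might be scored, the Python sketch below computes sensitivity, specificity, and overall accuracy for margin predictions against pathology results. The article does not say which metrics the researchers used, so the choice of metrics, the `margin_metrics` function, and the toy values are assumptions made for illustration, not results from the study.

```python
def margin_metrics(predicted, actual):
    """Score positive-margin predictions against pathology results.

    Both arguments are equal-length sequences of booleans, where True
    means a positive margin (cancer cells at the specimen's edge).
    """
    if len(predicted) != len(actual):
        raise ValueError("predicted and actual must have the same length")

    pairs = list(zip(predicted, actual))
    tp = sum(1 for p, a in pairs if p and a)          # positive margin caught
    tn = sum(1 for p, a in pairs if not p and not a)  # negative margin confirmed
    fp = sum(1 for p, a in pairs if p and not a)      # false alarm
    fn = sum(1 for p, a in pairs if not p and a)      # positive margin missed

    return {
        # Share of truly positive margins that were flagged.
        "sensitivity": tp / (tp + fn) if (tp + fn) else float("nan"),
        # Share of truly negative margins that were cleared.
        "specificity": tn / (tn + fp) if (tn + fp) else float("nan"),
        "accuracy": (tp + tn) / len(pairs) if pairs else float("nan"),
    }


# Toy values for illustration only; these are not figures from the study.
pathology = [True, True, False, False, False]
model_calls = [True, False, False, False, True]
human_calls = [False, False, False, False, True]

model_scores = margin_metrics(model_calls, pathology)
human_scores = margin_metrics(human_calls, pathology)
print("model:", model_scores)
print("human:", human_scores)
```

Scoring the model and the human readers with the same function against the same pathology results is what makes the two sets of numbers directly comparable.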

“It is interesting to think about how AI models can support doctor’s and surgeon’s decision making in the operating room using computer vision,” said first author Kevin Chen, MD, general surgery resident in the Department of Surgery. “We found that the AI model matched or slightly surpassed humans in identifying positive margins.”

According to Gallagher, the model can be especially helpful in discerning margins in patients who have higher breast density. On mammograms, higher-density breast tissue and tumors both appear bright white, making it difficult to discern where the cancer ends and the healthy breast tissue begins.

Similar models could also be especially helpful for hospitals with fewer resources, which may not have the specialist surgeons, radiologists, or pathologists on hand to make a quick, informed decision in the operating room.

“It is like putting an extra layer of support in hospitals that maybe wouldn’t have that expertise readily available,” said Shawn Gomez, EngScD, professor of biomedical engineering and pharmacology and co-senior author on the paper. “Instead of having to make a best guess, surgeons could have the support of a model trained on hundreds or thousands of images and get immediate feedback on their surgery to make a more informed decision.”

Since the model is still in its early stages, researchers will keep adding more images, taken from more patients and by different surgeons. The model will need to be validated in further studies before it can be used clinically. Researchers anticipate that the accuracy of their models will increase over time as the models learn more about the appearance of normal tissue, tumors, and margins.