The robot’s arm descends toward a pale, flat rock. Twelve centimeters. Eight. Five. A foam ring makes contact, a time-of-flight sensor confirms the distance, and a UV light flickers on inside the instrument housing as the microscope locks focus. The image appears on a screen across the room: gypsum, its crystalline texture mapped at millimeter scale, veins threading through the surface like a fragment of deep geological time. The whole sequence took roughly a minute. Then the arm lifts, the four-legged robot turns, and it walks to the next target without waiting for anyone to tell it to.

This is the Marslabor at the University of Basel, a reddish, 40-square-meter chamber built to simulate the surface of Mars. And what just happened there might, in a small but meaningful way, change how we explore other planets.

The problem with current Mars rovers is not really a technology problem. Curiosity and Perseverance are extraordinary machines, capable of drilling into rock, analyzing chemistry, and navigating hazardous terrain with impressive autonomy. But they are slow in a specific and frustrating sense: every scientific decision goes through Earth. When a rover spots an interesting rock, scientists analyze the images, debate the options, write commands, and uplink them, all squeezed into narrow communication windows dictated by planetary rotation. One-way signal delays to Mars range from four to twenty-two minutes depending on orbital geometry. The result is that rovers typically investigate one target per Martian day, traveling only a few hundred meters before stopping to wait for the next round of instructions. You can have a very capable robot and still waste most of its capability.
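The delay figures follow from nothing more than distance divided by the speed of light. As a rough sketch (the two Earth-Mars distances are round illustrative values chosen here, not figures from the study):

```python
# One-way light-time between Earth and Mars.
# The distances used below are illustrative assumptions, not mission data.
SPEED_OF_LIGHT_KM_S = 299_792.458  # exact by definition


def one_way_delay_minutes(distance_km: float) -> float:
    """Return the one-way signal delay in minutes for a given distance."""
    return distance_km / SPEED_OF_LIGHT_KM_S / 60.0


near = one_way_delay_minutes(75e6)   # Mars relatively close to Earth
far = one_way_delay_minutes(400e6)   # Mars near the far side of the Sun

print(f"near: {near:.1f} min, far: {far:.1f} min")
print(f"round trip at the far end: {2 * far:.0f} min")
```

A question-and-answer exchange costs two of these delays, which is why even a simple "is this rock worth a closer look?" can eat a large share of a communication window.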

The team at Basel, working with ETH Zurich’s Robotic Systems Lab, wanted to know if there was a better way. Specifically: could a robot select multiple targets in advance, carry out all the measurements autonomously, and still produce scientifically useful data?

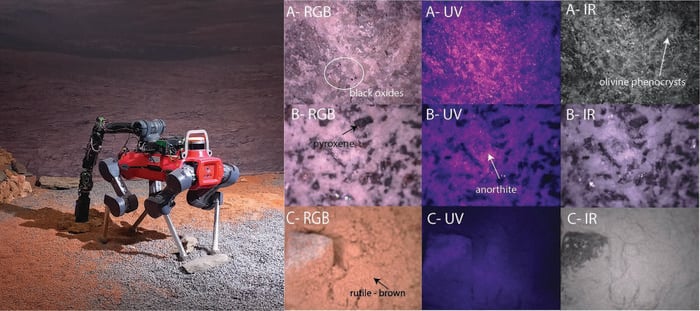

Their robot was ANYmal D, a roughly 60-kilogram quadruped built by Swiss company ANYbotics, fitted with a custom six-degree-of-freedom arm and two instruments: a microscopic imager they call MICRO, which captures close-up images at wavelengths from UV to near-infrared, and a Raman spectrometer that can identify mineral composition from about 70 centimeters away without touching the rock. In the semi-autonomous mode, an operator examined a panoramic image at the start of each mission, selected three targets by clicking on a terrain map, and then stepped back. The robot did the rest, walking to each location, deploying its arm, collecting images and spectra, and moving on.

Across four identical Mars-analogue missions, the robot completed each run in between twelve and twenty-three minutes, with an average of around sixteen and a half. The single human-supervised mission, used as a lunar-analogue comparison, took 41 minutes for the same number of targets. That makes the semi-autonomous mode roughly two and a half times faster. Whether that speed advantage holds in real terrain is another question; the Marslabor has flat, controlled surfaces rather than boulder fields. But the principle is at least established.
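The speed comparison is simple arithmetic on the figures quoted here. A quick check, taking the approximate mean of 16.5 minutes at face value:

```python
# Timing figures as quoted in the article; the mean is approximate.
semi_autonomous_mean_min = 16.5
supervised_min = 41.0
targets_per_mission = 3

speedup = supervised_min / semi_autonomous_mean_min
per_target_semi = semi_autonomous_mean_min / targets_per_mission
per_target_supervised = supervised_min / targets_per_mission

print(f"speedup: {speedup:.2f}x")
print(f"minutes per target: {per_target_semi:.1f} semi-autonomous, "
      f"{per_target_supervised:.1f} supervised")
```

With a single supervised run as the baseline, the ratio is best read as indicative rather than as a measured statistic.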

The science results were, perhaps, more instructive than the timing. In one of the four Mars runs, the robot correctly identified all three targets: gypsum (a candidate for preserving biosignatures on Mars, because it can encapsulate organic material), a sulphur-bearing basalt, and a carbonate rock partly buried under regolith dust. In the other three runs, identification success was two out of three. The failure mode was consistent: the robotic arm missed Target B, the carbonate group, in missions 1, 2, and 4. The arm’s targeting system was originally designed for close-range, human-guided operation, and selecting targets from panoramic images taken from across the room introduced enough positional error that the instrument occasionally landed on dust rather than rock. The Raman spectrum would come back as noise, and the target remained unidentified.

That limitation matters, but so does what happened when things went partially wrong. On several occasions, either the microscopic image was blurred (caused by the arm vibrating slightly during deployment) or the Raman spectrum was noisy. In almost every such case, the other instrument compensated. A blurred MICRO image with a clear sulphur peak at 473 inverse centimeters still counts as a successful identification. A decent close-up image of gypsum’s crystalline veins, plus a weak Raman signal, still gets you there. The two instruments turn out to be complementary in a usefully systematic way: MICRO captures texture and morphology, Raman confirms mineralogy. Together they’re more robust than either alone.
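The fallback logic can be written down in a few lines. This is a deliberately simplified sketch, not the team's software: the class and function names are hypothetical, and each instrument's output is reduced to a single usable/unusable flag, whereas in practice a weak signal from one instrument can still support the other.

```python
from dataclasses import dataclass


@dataclass
class TargetReading:
    """Hypothetical per-target summary of the two instruments."""
    micro_usable: bool  # MICRO image sharp enough to show texture
    raman_usable: bool  # Raman spectrum shows a diagnostic peak above noise


def identification_basis(reading: TargetReading) -> str:
    """Say which instrument(s), if any, support an identification."""
    if reading.micro_usable and reading.raman_usable:
        return "both"
    if reading.raman_usable:
        return "raman only"
    if reading.micro_usable:
        return "micro only"
    return "unidentified"


# Blurred image but a clear sulphur peak: still identified, via Raman.
print(identification_basis(TargetReading(micro_usable=False, raman_usable=True)))
# Arm landed on dust instead of rock: neither instrument can help.
print(identification_basis(TargetReading(micro_usable=False, raman_usable=False)))
```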

The Raman spectrometer did have one hard limit worth noting. Its spectral range, 400 to 2300 inverse centimeters, cannot reach the water ice signature, which sits at 3000 to 3500. For lunar south pole missions, where detecting water ice is rather the point, this particular instrument won’t do. Future versions would need a broader range, or a different laser wavelength altogether.
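The limit is easy to see as an interval check. The sketch below uses only the numbers quoted in this article; the helper names are illustrative.

```python
# All values in inverse centimeters (cm^-1), as quoted in the article.
INSTRUMENT_RANGE = (400.0, 2300.0)
WATER_ICE_BAND = (3000.0, 3500.0)
SULPHUR_PEAK = 473.0


def in_range(shift: float, rng: tuple[float, float]) -> bool:
    """True if a single Raman shift falls inside the given range."""
    return rng[0] <= shift <= rng[1]


def bands_overlap(a: tuple[float, float], b: tuple[float, float]) -> bool:
    """True if two closed intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


print("sulphur peak visible:", in_range(SULPHUR_PEAK, INSTRUMENT_RANGE))
print("water ice band visible:", bands_overlap(INSTRUMENT_RANGE, WATER_ICE_BAND))

# How far the upper limit would have to move to touch the water ice band.
gap = WATER_ICE_BAND[0] - INSTRUMENT_RANGE[1]
print(f"gap to water ice band: {gap:.0f} cm^-1")
```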

There was also, somewhat amusingly, a contamination problem. Residual sulphur powder from an earlier Mars-analogue measurement apparently stuck to the foam ring of the MICRO imager and transferred onto the dunite rock during the lunar session, producing a ghost Raman peak at 473 inverse centimeters that looked like sulphur but was an artefact. The researchers caught it by comparison with ground-truth data taken beforehand. On an actual mission, without that ground truth, it could have caused confusion. It is a small reminder that real planetary science is messier than the diagrams suggest.

The broader argument here is not just about speed. It is about how we use the communication windows we have. Right now, rover operations are essentially single-threaded: one target, one decision cycle, one uplink. What the Basel team is testing is a different model, one where the robot pre-executes an entire campaign while humans review the data on the other end and decide what to do next. As missions push farther out, to Titan (where one-way signal delays exceed an hour), or to the icy moons of Jupiter and Saturn, that model stops being optional and becomes required. ANYmal is a relatively modest demonstration, but the operational concept it is testing scales up in a way that the current step-by-step approach simply does not.

The next steps, the researchers suggest, include visual servoing to close the arm-positioning feedback loop in real time, onboard blur detection to trigger automatic re-measurement, and eventually, autonomous target selection based on shape, colour, and albedo without human pre-selection at all. At that point, the operator would receive not a single rock’s data but a ranked shortlist of candidates from across a large traverse, ready for the next decision cycle. That is, roughly speaking, how field geology actually works.
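To make the blur-detection step concrete, here is a minimal sketch of one widely used focus measure, the variance of the image Laplacian. The article does not say which method the researchers have in mind, so this is a stand-in; the threshold and function names are hypothetical.

```python
from statistics import pvariance


def laplacian_variance(image):
    """Variance of the 4-neighbour Laplacian over interior pixels.

    `image` is a list of equal-length rows of grayscale values, at least
    3x3 in size. Sharp images have strong edges and a high variance;
    blur flattens the edges and lowers it.
    """
    height, width = len(image), len(image[0])
    responses = [
        image[y - 1][x] + image[y + 1][x] + image[y][x - 1] + image[y][x + 1]
        - 4 * image[y][x]
        for y in range(1, height - 1)
        for x in range(1, width - 1)
    ]
    return pvariance(responses)


def needs_remeasure(image, threshold=100.0):
    """Flag an image as blurred. The threshold is a hypothetical placeholder."""
    return laplacian_variance(image) < threshold


# Synthetic test images: a checkerboard (maximal detail) and a uniform grey.
sharp = [[255 * ((x + y) % 2) for x in range(8)] for y in range(8)]
flat = [[128] * 8 for _ in range(8)]

print("re-measure sharp image:", needs_remeasure(sharp))
print("re-measure flat image:", needs_remeasure(flat))
```

A real threshold would have to be tuned on MICRO images of actual targets, since texture varies a great deal between rock types.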

DOI / Source: https://doi.org/10.3389/frspt.2026.1741757

Could a legged robot really navigate rough terrain on Mars or the Moon?

Wheeled rovers struggle with steep crater walls, boulder fields, and the loose volcanic deposits where many of the most scientifically interesting resources are found. Legged robots like ANYmal can adjust their footing more flexibly, making them potentially better suited to extreme terrain. The current study was conducted on a flat indoor testbed, so performance on genuine planetary surfaces remains to be demonstrated, but locomotion in granular soil (which causes slippage and sinkage) was tested and the robot’s reinforcement-learning controller handled it.

What makes gypsum and sulphur interesting for the search for life on Mars?

Gypsum can physically trap and preserve organic material over geological timescales, making it a strong candidate for biosignature preservation. Sulphur-bearing minerals like jarosite form in the presence of water, so finding them indicates past aqueous chemistry. Both are on the shortlist of materials that astrobiology missions specifically look for, which is why they were included in the analogue test set.

How does Raman spectroscopy identify minerals from a distance?

A laser illuminates the rock surface and some of the light scatters back at shifted frequencies determined by the chemical bonds in the mineral. Each mineral has a characteristic pattern of frequency shifts, called a Raman spectrum, that acts like a fingerprint. The spectrometer used in this study could work from about 70 centimeters away without contact, which is useful when you need a robotic arm to avoid disturbing or contaminating the sample.
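The frequency shift is conventionally expressed in inverse centimeters and follows from the laser and scattered wavelengths. A short sketch of the conversion; the 532 nm laser wavelength is an assumption for illustration, since the article does not state which laser the instrument uses.

```python
# Raman shift (cm^-1) = 1e7 / laser_nm - 1e7 / scattered_nm
# The factor 1e7 converts a wavelength in nanometers to a wavenumber in cm^-1.
ASSUMED_LASER_NM = 532.0  # hypothetical; not stated in the article


def raman_shift(laser_nm: float, scattered_nm: float) -> float:
    """Raman shift in cm^-1 from laser and scattered wavelengths in nm."""
    return 1e7 / laser_nm - 1e7 / scattered_nm


def scattered_wavelength(laser_nm: float, shift_cm1: float) -> float:
    """Wavelength in nm at which a given Raman shift appears."""
    return 1e7 / (1e7 / laser_nm - shift_cm1)


# Where the 473 cm^-1 sulphur peak would land for the assumed laser.
sulphur_nm = scattered_wavelength(ASSUMED_LASER_NM, 473.0)
print(f"sulphur peak at {sulphur_nm:.1f} nm for a {ASSUMED_LASER_NM:.0f} nm laser")
```

The shift itself is a property of the mineral, while the wavelength at which it shows up depends on the laser, which is why the fingerprint stays the same across instruments.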

Why not just use wheels instead of legs?

For flat, relatively open terrain, wheels are efficient and proven. But permanently shadowed lunar craters, hydrothermal outcrops on cliff faces, and rubble-strewn canyon walls on Mars are exactly the environments that interest geologists most, and they are hard or impossible to reach on wheels. Legs allow the robot to pick its way through obstacles rather than going around them. The tradeoff is complexity and energy consumption, but as battery and motor technology improves, legged platforms are becoming increasingly viable for planetary use.
