Modern telescopes are magnificent gossips, generating millions of alerts every night about potential changes in the cosmos. The problem? Most of these whispers are lies – satellite trails, cosmic ray hits, instrumental hiccups masquerading as genuine discoveries. For years, astronomers have deployed specialized neural networks to separate wheat from chaff, but these systems operate as inscrutable black boxes, offering verdicts without explanations.

Now, researchers from the University of Oxford and Google Cloud have demonstrated something unexpected: a general-purpose AI can learn to identify real cosmic events – exploding stars, black holes devouring passing objects, asteroids zipping through space – using just 15 example images and plain English instructions. The system achieved roughly 93% accuracy across three different sky surveys, and crucially, it explains its reasoning in language any astronomer can understand.

When Less Training Means More Transparency

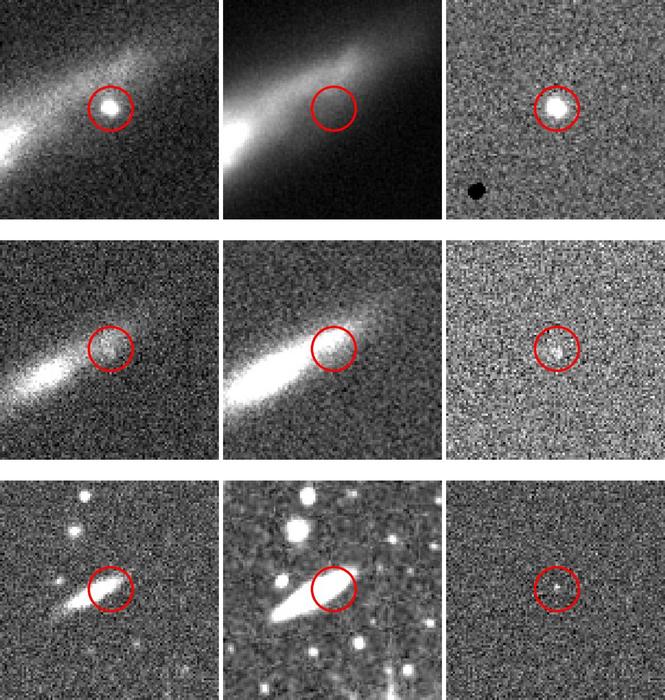

The team fed Google’s Gemini language model a mere 15 annotated image sets from each of three major surveys: Pan-STARRS, MeerLICHT, and ATLAS. Each set included a new observation, a reference image of the same sky patch, and a difference image highlighting changes. Rather than requiring hundreds of thousands of training examples like traditional machine learning approaches, the AI grasped the task through what researchers call “few-shot learning.”
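To make the few-shot setup concrete, here is a minimal sketch of how such a prompt might be assembled: plain-language instructions, then a handful of labelled image triplets, then the unlabelled candidate. The `Triplet` class, the `build_few_shot_prompt` function, and the dictionary layout are illustrative assumptions, not the researchers' code or any real API; sending the parts to a model is deliberately left out.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Triplet:
    """One alert: new, reference, and difference image (hypothetical structure)."""
    new_image: str
    reference_image: str
    difference_image: str
    label: Optional[str] = None        # e.g. "real" or "bogus"; None for the candidate
    explanation: Optional[str] = None  # short human-written reasoning for examples


def _image_parts(t: Triplet) -> List[Dict[str, str]]:
    # Keep the three images in a fixed order so the model sees a consistent layout.
    return [
        {"type": "image", "role": "new", "path": t.new_image},
        {"type": "image", "role": "reference", "path": t.reference_image},
        {"type": "image", "role": "difference", "path": t.difference_image},
    ]


def build_few_shot_prompt(
    instructions: str, examples: List[Triplet], candidate: Triplet
) -> List[Dict[str, str]]:
    """Return an ordered list of prompt parts: instructions, examples, candidate."""
    parts: List[Dict[str, str]] = [{"type": "text", "content": instructions}]
    for i, ex in enumerate(examples, start=1):
        parts.append({"type": "text", "content": f"Example {i}:"})
        parts.extend(_image_parts(ex))
        parts.append(
            {"type": "text", "content": f"Label: {ex.label}. Reasoning: {ex.explanation}"}
        )
    parts.append(
        {"type": "text", "content": "Now classify this candidate and explain your reasoning:"}
    )
    parts.extend(_image_parts(candidate))
    return parts
```

With 15 examples the whole "training" input is a single prompt, which is why switching to a new survey mostly means swapping the example triplets and rewording the instructions.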

“It’s striking that a handful of examples and clear text instructions can deliver such accuracy. This makes it possible for a broad range of scientists to develop their own classifiers without deep expertise in training neural networks – only the will to create one.”

That assessment comes from Dr. Fiorenzo Stoppa, co-lead author from Oxford’s Department of Physics. The implications extend beyond astronomy. If a general-purpose AI can master transient classification with minimal guidance, what other scientific domains might benefit from this approach?

The research, published today in Nature Astronomy, arrives at a particularly opportune moment. The Vera C. Rubin Observatory will soon begin operations, generating around 20 terabytes of data every 24 hours. Traditional verification methods – astronomers manually reviewing thousands of candidates – will no longer be feasible at that scale.

The AI That Knows When It’s Confused

Perhaps the most intriguing aspect of the work involves what happens when the AI doubts itself. The researchers discovered that Gemini could review its own classifications and assign “coherence scores” to its explanations. Low-coherence outputs proved much more likely to be incorrect – the system essentially flagging its own mistakes.

To verify this self-awareness, the team assembled a panel of 12 professional astronomers who reviewed 200 randomly selected classifications through the Zooniverse platform. The astronomers rated the AI’s explanations on a scale from 0 (complete hallucination) to 5 (perfectly coherent). The mean score exceeded 4, with most descriptions clustering around the highest ratings.

The researchers then used this self-assessment capability to improve performance. By identifying low-coherence cases, adding a small number of these challenging cases to the set of worked examples given to the model, and rerunning the analysis, they boosted accuracy on the MeerLICHT dataset from 93.4% to 96.7%.
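The selection step in that loop can be sketched in a few lines. Everything here is an assumption made for illustration: the `Classification` record, the 0 to 5 scale for the model's self-assigned coherence score (borrowed from the scale the human panel used), the threshold of 3.0, and the cap of five new examples. The article does not say how the researchers chose their cut-off or how many cases they added.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Classification:
    """One model verdict plus its self-assessed coherence (hypothetical record)."""
    candidate_id: str
    label: str
    coherence: float  # assumed 0 (incoherent) to 5 (fully coherent)


def flag_low_coherence(
    results: List[Classification], threshold: float = 3.0
) -> List[Classification]:
    """Return verdicts whose coherence falls below the threshold, worst first."""
    flagged = [r for r in results if r.coherence < threshold]
    return sorted(flagged, key=lambda r: r.coherence)


def pick_new_examples(
    results: List[Classification], threshold: float = 3.0, max_new: int = 5
) -> List[str]:
    """Choose a small number of the hardest cases for a human to label
    and add to the example set before rerunning the classifier."""
    return [r.candidate_id for r in flag_low_coherence(results, threshold)[:max_new]]
```

A human still has to supply the correct label and reasoning for each selected case; the coherence score only tells the team where to look.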

“We are entering an era where scientific discovery is accelerated not by black-box algorithms, but by transparent AI partners. This work shows a path towards systems that learn with us, explain their reasoning, and empower researchers in any field to focus on what matters most: asking the next great question.”

That optimistic vision comes from Turan Bulmus, co-lead author from Google Cloud, who notably lacks formal astronomy training – a detail that underscores the democratizing potential of the approach.

Still, practical challenges loom. Traditional neural networks can process individual images in milliseconds, while large language models typically require several seconds per query. Running an LLM through a commercial API for the Rubin Observatory’s expected 10 million nightly alerts would be prohibitively expensive – potentially thousands of dollars per night. The researchers suggest hybrid approaches, where fast neural networks perform initial screening and the LLM provides detailed interpretation only for interesting or ambiguous cases.
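A back-of-the-envelope sketch shows why the hybrid route is attractive. The per-query price, the per-query latency, and the score thresholds below are placeholder assumptions chosen only to illustrate the arithmetic and the routing logic; they are not figures from the paper or from any provider's price list.

```python
def nightly_llm_cost(n_alerts: int, cost_per_query_usd: float) -> float:
    """Cost in USD of sending every alert to an LLM API."""
    return n_alerts * cost_per_query_usd


def sequential_hours(n_alerts: int, seconds_per_query: float) -> float:
    """Wall-clock hours if queries ran one after another (no parallelism)."""
    return n_alerts * seconds_per_query / 3600.0


def route_alert(fast_score: float, low: float = 0.2, high: float = 0.8) -> str:
    """Hybrid triage: a fast classifier's score decides who handles the alert.

    Scores below `low` are discarded as bogus, scores above `high` are accepted
    as real, and only the ambiguous band in between goes to the slower LLM.
    """
    if fast_score < low:
        return "reject"
    if fast_score > high:
        return "accept"
    return "llm_review"


# Placeholder inputs: 10 million alerts, $0.0005 and 3 seconds per query.
full_cost = nightly_llm_cost(10_000_000, 0.0005)
full_hours = sequential_hours(10_000_000, 3.0)
```

If only a small fraction of alerts lands in the ambiguous band, the LLM bill and latency shrink by roughly that same fraction, while every escalated alert still receives a written explanation.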

Co-author Professor Stephen Smartt, who has spent over a decade developing machine learning models for astronomical image recognition, expressed surprise at the results. The team envisions these systems evolving into autonomous “agentic assistants” that could integrate multiple data sources, check their own confidence levels, request follow-up observations from robotic telescopes, and escalate only the most promising discoveries to human scientists.

The method’s adaptability may prove as important as its accuracy. Because it requires only a small set of examples and plain-language instructions, it can be rapidly reconfigured for new instruments, surveys, and research goals – not just in astronomy, but across scientific disciplines where distinguishing signal from noise remains a fundamental challenge.

Nature Astronomy: 10.1038/s41550-025-02670-z
