Artificial intelligence can diagnose disease, write essays, and generate art. But it often refuses to admit when it’s wrong. Now, a University of Arizona astronomer has found a way to change that.

In a preprint posted to arXiv, Peter Behroozi introduces a new method for reducing hallucinations in large-scale AI models by making them aware of their own uncertainty. Drawing on computer graphics techniques used in Pixar-style ray tracing and grounded in Bayesian mathematics, the approach allows neural networks with trillions of parameters to flag when their predictions might be unreliable, without requiring massive computational power. The research was supported by the National Science Foundation and includes public code for immediate reuse.

“Wrong but Confident” Is an AI Epidemic

“There are many examples of neural networks ‘hallucinating,’ or making up nonexistent facts, research papers and books to back up their incorrect conclusions,” Behroozi said. “This leads to real human suffering, including incorrect medical diagnoses, declined rental applications and facial recognition gone wrong.”

Trained as a cosmologist, Behroozi built the Universe Machine to simulate galaxy formation by testing billions of model variants. But as his models grew more complex, standard tools for quantifying uncertainty broke down. That’s when a student’s physics question about how light bends through Earth’s atmosphere sparked a breakthrough.

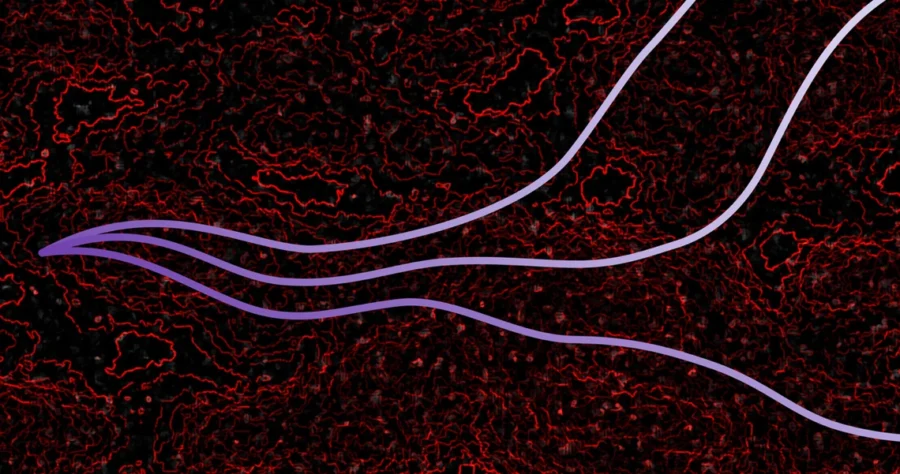

Behroozi realized he could adapt ray tracing—a graphics method that calculates light’s paths through 3D scenes—for use in the billion-dimensional mathematical spaces that govern AI training. The resulting technique approximates the gold-standard Bayesian sampling method, which typically requires evaluating thousands of model variants to capture the full range of possible outcomes. His adaptation, described in detail across Figures 1–4 of the preprint, makes this feasible for even the largest language and vision models.

Instead of trusting a single prediction, the method gathers results from a representative set of models. If their answers diverge, the input falls in a high-uncertainty region, which acts as a warning light that the AI may not know what it’s talking about. On standard benchmarks like CIFAR-10, ImageNet, and GLUE, the technique showed stronger calibration and better performance under distribution shifts than baseline models, all while remaining scalable.
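For readers who want to see the "warning light" idea in concrete terms, here is a minimal sketch in Python. It is not Behroozi’s ray-tracing sampler and is not taken from the preprint’s code; it only illustrates the final step described above, where predictions from a set of models are compared and disagreement is treated as uncertainty. The function name `ensemble_uncertainty`, the entropy-based score, and the 0.5 threshold are all assumptions made for illustration.

```python
import numpy as np

def ensemble_uncertainty(probs, threshold=0.5):
    """Flag inputs on which a set of models disagree.

    probs: array of shape (n_models, n_inputs, n_classes); each row is one
    model's class probabilities for one input. The threshold is an
    illustrative assumption, not a value from the preprint.
    """
    probs = np.asarray(probs, dtype=float)
    mean_probs = probs.mean(axis=0)                 # averaged prediction per input
    prediction = mean_probs.argmax(axis=-1)         # consensus class per input
    votes = probs.argmax(axis=-1)                   # each model's own top class
    agreement = (votes == prediction).mean(axis=0)  # fraction of models that agree
    # Entropy of the averaged prediction, scaled to [0, 1] by log(n_classes).
    entropy = -(mean_probs * np.log(mean_probs + 1e-12)).sum(axis=-1)
    entropy /= np.log(probs.shape[-1])
    flagged = entropy > threshold                   # the "warning light"
    return prediction, agreement, entropy, flagged

# Three hypothetical models, two inputs, two classes.
probs = np.array([
    [[0.98, 0.02], [0.9, 0.1]],   # model 0
    [[0.99, 0.01], [0.1, 0.9]],   # model 1
    [[0.97, 0.03], [0.2, 0.8]],   # model 2
])
prediction, agreement, entropy, flagged = ensemble_uncertainty(probs)
print(prediction, agreement, entropy, flagged)
```

In this toy case the three models agree on the first input and split on the second, so only the second is flagged. How the representative set of models is obtained in the first place is the hard part, and that is what the preprint’s sampling technique addresses.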

Uncertainty Can Be a Feature, Not a Flaw

“Suppose a doctor ordered a routine scan and decided that you needed to begin treatment for cancer immediately, even though you had no other symptoms,” Behroozi said. “Many people in this situation would seek a second opinion. The new method would have a similar effect: instead of the opinion of one AI doctor, it would give the range of plausible opinions.”

Trust in AI has faltered, in part because many systems deliver outputs with false precision. For scientists, the implications are stark. AI tools are now used to generate hypotheses, analyze images, even write sections of papers—but they often do so with no sense of uncertainty. As Behroozi writes, this “undermines public trust in scientific output” and forces researchers to waste time on costly validations that a better-calibrated system might avoid.

The broader promise lies in making AI more cautious when it should be. From criminal sentencing algorithms to autonomous vehicles, AI decisions increasingly affect real lives. Behroozi’s method doesn’t fix bias or eliminate errors, but it could give users a critical signal: when not to trust the machine.

And for Behroozi’s own research, it opens the door to more ambitious questions. Rather than generating simulations that vaguely resemble our universe, he now hopes to reconstruct the actual initial conditions that gave rise to the cosmic web. “What this technique allows us to do is figure out what were the initial conditions of the actual universe,” he said.

DOI: 10.48550/arXiv.2510.25824
