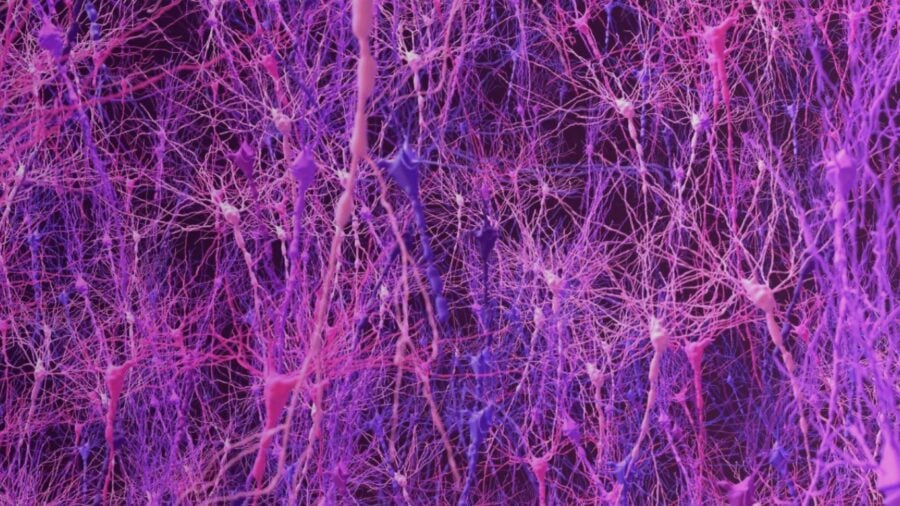

About 20 percent of neurons in a learning brain seem to be doing something counterintuitive. When these cells become more active, mistakes follow. A new computational model of the brain, built to mirror real neural circuits rather than optimize performance, stumbled onto this pattern while learning a simple visual task. Only then did researchers realize the same “incongruent” neurons had been hiding in their animal data all along.

The model learned to categorize dot patterns with the same uneven progress seen in lab animals: sudden breakthroughs, stalls, then lurches forward again. It did so without ever being trained on animal data. Instead, a team from Dartmouth College, MIT, and Stony Brook University built it from biological principles, simulating how neurons connect through electrical and chemical signals.

When they compared the model’s neural activity to data from animals performing the identical task, the match was striking. Same erratic learning curve. Same synchronized activity in the beta frequency band as correct decisions became more consistent. And that subset of error-predicting neurons, present in both.

“Only then did we go back to the data we already had, sure that this couldn’t be in there because somebody would have said something about it, but it was in there and it just had never been noticed or analyzed,” Richard Granger explains.

Building Noise Into the System

Dartmouth postdoc Anand Pathak designed the model to operate at multiple scales. At the smallest level, tiny circuits of a few neurons each perform fundamental computations. One follows a winner-takes-all architecture found throughout real cortex: excitatory neurons receiving visual input compete by activating inhibitory partners that suppress rivals.
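To make the winner-takes-all idea concrete, here is a minimal Python sketch. It is a textbook MAXNET-style rate model, not the biophysical circuits used in the study. The function name `winner_take_all`, the inhibition strength `eps`, and the choice of one inhibitory partner per excitatory unit are all illustrative assumptions.

```python
import numpy as np


def winner_take_all(inputs, eps=None, max_steps=1000):
    """Toy MAXNET-style winner-take-all via lateral inhibition (hypothetical).

    Each excitatory unit has an inhibitory partner that mirrors its activity,
    and every unit is suppressed by the inhibitory partners of all its rivals.
    eps must stay below 1/n so the strongest unit is never silenced.
    """
    exc = np.asarray(inputs, dtype=float).copy()
    n = exc.size
    if eps is None:
        eps = 0.5 / n  # hypothetical default, safely below 1/n
    for _ in range(max_steps):
        inh = exc.copy()  # inhibitory partners track their excitatory cells
        # Suppression of unit i = eps * inhibition from every partner except its own
        suppression = eps * (inh.sum() - inh)
        new_exc = np.maximum(0.0, exc - suppression)  # firing rates stay >= 0
        # Stop once a single winner remains or activity stops changing
        if np.count_nonzero(new_exc) <= 1 or np.allclose(new_exc, exc):
            return new_exc
        exc = new_exc
    return exc


rates = winner_take_all([0.2, 0.9, 0.5, 0.4])
print(rates)                    # only the unit with the strongest input stays active
print(int(np.argmax(rates)))    # index of the winning unit
```

Weaker units are pushed down harder than the strongest one on every step, so their activity hits zero first while the winner survives. Exact ties are not resolved by this toy; real circuits have noise that breaks them.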

But the model also includes a group of tonically active neurons that inject variability through bursts of acetylcholine, particularly early in learning. This chemical noise loosens the system so it can explore different responses. As learning progresses, circuits in the cortex and striatum strengthen connections that suppress this variability, allowing behavior to stabilize.
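The explore-then-stabilize dynamic can be illustrated with an equally rough sketch. Here Gaussian noise on each choice stands in for acetylcholine-driven variability, and a hypothetical `suppression` weight that grows with every rewarded trial stands in for the learned connections that damp it. The names `simulate_learning` and `suppress_lr` and the specific update rules are assumptions for illustration, not details from the paper.

```python
import numpy as np


def simulate_learning(n_trials=400, base_noise=1.0, lr=0.1,
                      suppress_lr=0.05, seed=0):
    """Toy two-category learner whose choice noise is damped as it learns.

    Hypothetical stand-in: Gaussian noise plays the role of acetylcholine-driven
    variability, and `suppression` plays the role of connections that learn
    to quiet it. Neither rule is taken from the study.
    """
    rng = np.random.default_rng(seed)
    q = np.zeros((2, 2))  # q[stimulus, action]: learned value of each response
    suppression = 0.0     # grows with each rewarded trial, shrinking the noise
    correct = np.zeros(n_trials, dtype=bool)
    noise_levels = np.zeros(n_trials)
    for t in range(n_trials):
        stim = int(rng.integers(2))            # category of this trial's pattern
        noise = base_noise / (1.0 + suppression)
        noisy_values = q[stim] + noise * rng.standard_normal(2)
        action = int(np.argmax(noisy_values))  # noise can override learned values
        reward = 1.0 if action == stim else 0.0
        q[stim, action] += lr * (reward - q[stim, action])  # delta-rule update
        if reward > 0:
            suppression += suppress_lr
        correct[t] = reward > 0
        noise_levels[t] = noise
    return correct, noise_levels


correct, noise_levels = simulate_learning()
print("early accuracy:", correct[:50].mean())
print("late accuracy:", correct[-50:].mean())
print("noise, first vs last trial:", noise_levels[0], noise_levels[-1])
```

One limitation of this toy: because `suppression` only grows, it has no way to resume exploring if the categories were later swapped.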

Those incongruent neurons might serve a similar function. A brain that always follows learned rules struggles when conditions shift. Earl K. Miller, Picower Professor at MIT and co-author, points out that trying alternatives occasionally—even if it produces mistakes in stable environments—could help animals detect when the world has changed. Recent work from another Picower Institute lab found evidence that humans and animals do exactly this.

The model encompasses four components: the cortex, the striatum, the brainstem, and the population of tonically active neurons. Beta-band synchronization between cortex and striatum increased during correct trials, matching a pattern Miller has observed repeatedly in animal studies. When incongruent neurons exerted more influence, the model got the categorization wrong.

What Gets Missed in Real Data

The discovery illustrates a particular strength of biomimetic modeling. By building a system that behaves like a brain rather than one that simply performs well, the team generated predictions they could test in biological data. The incongruent neurons were there in the animal recordings. No one had been looking for them.

Granger, a professor of Psychological and Brain Sciences at Dartmouth and senior author of the study in Nature Communications, notes that the model's matches with animal behavior emerged without explicit programming. The research team has since expanded it to include additional brain regions and neuromodulatory chemicals, and they've begun testing how interventions like drugs alter its dynamics.

The goal is to create a platform for discovering and refining neurotherapeutics before clinical trials begin. Several team members have founded Neuroblox.ai to develop these models for biotech use, with Stony Brook biomedical engineering professor Lilianne Mujica-Parodi serving as CEO. Testing of drug effects and efficacy could then happen earlier in development.

For now, the model sent researchers back to their own data with fresh questions. Those error-predicting neurons were invisible until a simulation revealed them. Whether they represent adaptive noise or something else remains open, but the fact that they exist in both digital and biological brains suggests the pattern matters.

Nature Communications: 10.1038/s41467-025-67076-x
