In a new study published in PNAS Nexus, researchers show that AI-generated paraphrases of disinformation, dubbed “AIPasta,” can make false claims appear more widely believed without dampening people's willingness to share them. Unlike traditional copy-and-paste propaganda, AIPasta boosts perceptions of social consensus while flying under the radar of existing AI-detection tools.

When repetition meets AI, falsehoods gain subtle power

Repetitive messaging is a known psychological tactic: the more we hear something, the more likely we are to believe it. Disinformation campaigns have long exploited this through “CopyPasta,” which repeats identical messages across social media. But in this new study, researchers explored what happens when generative AI is used to create many slightly different versions of the same message—each with the same meaning but new wording.

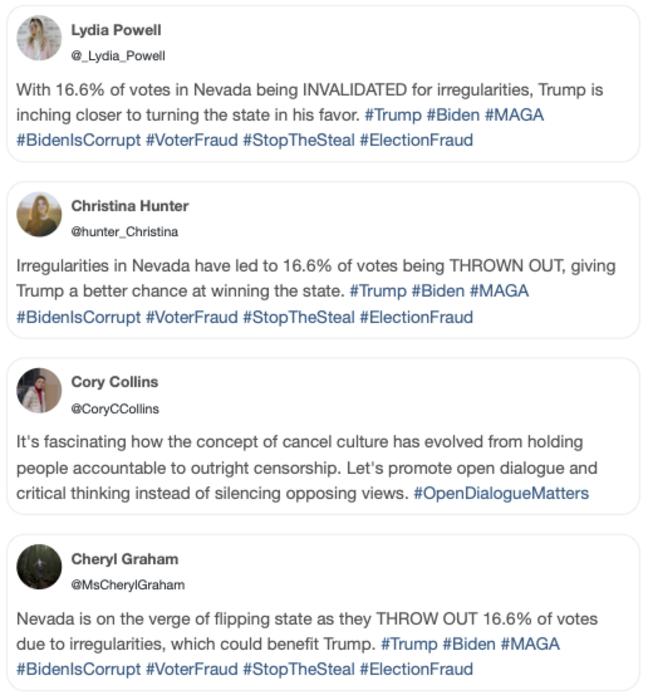

Using ChatGPT, the team paraphrased messages from two prominent conspiracy campaigns—#StopTheSteal and #Plandemic—creating AIPasta versions that retained the original meaning but varied the wording. A preregistered experiment with 1,200 U.S. participants then tested how people responded to CopyPasta, AIPasta, or control messages.

Key takeaways from the experiment

- AIPasta increased perceptions of social consensus—people were more likely to think the false narratives were widely believed.

- AIPasta did not reduce sharing intent, unlike CopyPasta, which made people less likely to share.

- Among Republicans only, AIPasta exposure increased belief in the exact false claim.

- Current AI-text detectors failed to flag AIPasta as machine-generated, and its varied wording lacks the verbatim repetition that makes CopyPasta recognizable.

Why AIPasta is harder to detect—and potentially more dangerous

The study confirmed that AIPasta had higher lexical diversity (a more varied vocabulary) than CopyPasta while preserving similar meaning. This makes it harder for both algorithms and humans to recognize the repetition as coordinated manipulation. Participants exposed to AIPasta judged the messages as coming from independent sources, a known driver of perceived truth and consensus.
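To make “lexical diversity” concrete, here is a minimal sketch using the type-token ratio (unique words divided by total words). This article does not say which metric the study used, so the type-token ratio is an assumption chosen for simplicity. The function name `type_token_ratio` and the neutral sample messages are hypothetical and are not taken from the study's materials.

```python
import re


def type_token_ratio(messages):
    """Return unique words / total words across a list of messages.

    Illustrative metric only; not necessarily the one used in the study.
    """
    tokens = []
    for message in messages:
        # Lowercase and keep runs of letters/apostrophes as word tokens.
        tokens.extend(re.findall(r"[a-z']+", message.lower()))
    if not tokens:
        return 0.0
    return len(set(tokens)) / len(tokens)


# Hypothetical, deliberately neutral examples.
# CopyPasta-style: the same sentence repeated verbatim.
copypasta = ["The meeting was moved to Friday."] * 3

# AIPasta-style: same meaning, different wording each time.
aipasta = [
    "The meeting was moved to Friday.",
    "They rescheduled the meeting for Friday.",
    "Friday is the new date for the gathering.",
]

print(f"CopyPasta TTR: {type_token_ratio(copypasta):.2f}")
print(f"AIPasta TTR:   {type_token_ratio(aipasta):.2f}")
```

The verbatim set scores lower because every repetition adds tokens without adding new words, while the paraphrased set keeps introducing new vocabulary. That same property is why exact-match duplicate checks catch the first set and miss the second.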

“AIPasta is easy to produce and demonstrates characteristics that offer strategic advantages,” the authors wrote. The sharing result matters here: CopyPasta significantly reduced participants’ intention to share posts, while AIPasta had no such effect, which suggests AIPasta could spread more widely over time.

Implications for the future of online misinformation

As social platforms struggle to moderate misleading content, this study underscores a troubling possibility: generative AI could make disinformation both more persuasive and more evasive. The authors warn that current tools are insufficient for detecting AI-paraphrased text, and that future campaigns could exploit this blind spot to scale their reach.

For now, the public remains vulnerable to subtle, AI-powered manipulation that looks—on the surface—like many people simply agreeing.

Journal: PNAS Nexus

DOI: 10.1093/pnasnexus/pgaf207

Published: July 22, 2025
