As deepfake images and videos become ever more convincing, tech companies have turned to invisible watermarks to help identify what’s real. But a new study from researchers at the University of Waterloo shows that even the best digital fingerprints can be erased—silently, universally, and without insider knowledge.

Called UnMarker, the technique is the first practical, universal tool that removes AI image watermarks without needing access to the watermarking algorithm, training data, or detector feedback. It exploits a hidden weakness shared by all robust watermarking systems: their reliance on subtle frequency patterns across an image.

How UnMarker Works

Most current watermarking schemes, including Google’s SynthID and Meta’s Stable Signature, embed their digital signatures in the spectral amplitudes of images, that is, in how strongly each spatial frequency is present. This means the watermark isn’t tied to individual pixels but to how patterns of pixel values fluctuate across different regions and scales.
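To make “spectral amplitudes” concrete, here is a toy sketch using only the Python standard library. It is not how SynthID or Stable Signature work internally; their embedders are learned, and their details are not reproduced here. The hypothetical `embed` adds a faint cosine pattern at a secret frequency `KEY`, and the hypothetical `detect` checks whether the amplitude at that frequency exceeds an arbitrarily chosen `THRESHOLD`. No pixel moves by more than about 2 gray levels, yet the amplitude at the key frequency jumps sharply.

```python
import cmath
import math
import random


def dft_amplitude(img, u, v):
    """Magnitude of the 2-D DFT coefficient of `img` at frequency (u, v)."""
    h, w = len(img), len(img[0])
    total = 0j
    for y in range(h):
        for x in range(w):
            total += img[y][x] * cmath.exp(-2j * math.pi * (u * y / h + v * x / w))
    return abs(total)


def embed(img, key, strength):
    """Hypothetical embedder: add a faint cosine pattern at the secret frequency `key`."""
    u, v = key
    h, w = len(img), len(img[0])
    return [[img[y][x] + strength * math.cos(2 * math.pi * (u * y / h + v * x / w))
             for x in range(w)] for y in range(h)]


def detect(img, key, threshold):
    """Hypothetical detector: is the amplitude at the secret frequency above `threshold`?"""
    return dft_amplitude(img, *key) > threshold


# A smooth 16x16 "image" (a brightness ramp) plus mild, seeded noise.
rng = random.Random(0)
image = [[8 * x + 4 * y + rng.uniform(-1, 1) for x in range(16)] for y in range(16)]

KEY = (3, 5)        # secret frequency; an arbitrary illustrative choice
THRESHOLD = 100.0   # detection threshold; also arbitrary
marked = embed(image, KEY, strength=2.0)

max_pixel_change = max(abs(a - b) for ra, rb in zip(image, marked) for a, b in zip(ra, rb))
print("largest pixel change:", max_pixel_change)
print("amplitude at KEY:", dft_amplitude(image, *KEY), "->", dft_amplitude(marked, *KEY))
print("detected:", detect(image, KEY, THRESHOLD), "->", detect(marked, KEY, THRESHOLD))
```

The mark is spread thinly over all 256 pixels, so no single pixel carries it, but it adds up coherently at one frequency. That is what “not tied to individual pixels” means in practice.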

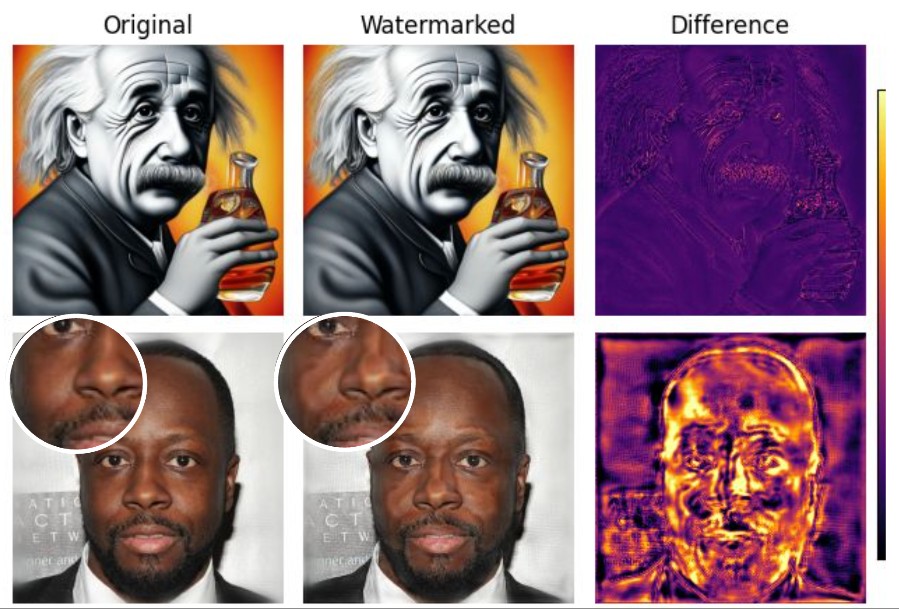

UnMarker targets these embedded frequency signatures by introducing calculated distortions that are invisible to the human eye but fatal to the watermark. It works in two stages:

- High-frequency disruption: Alters sharp image details to remove traditional, non-semantic watermarks.

- Low-frequency filtering: Applies adaptive blurring techniques to dismantle semantic watermarks that subtly alter image content or texture.
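The toy below suggests why a two-stage design makes sense. It uses a hypothetical cosine watermark and a plain 3×3 box blur, a crude, non-adaptive stand-in, not UnMarker’s actual optimized perturbations or filters. The blur nearly erases a mark hidden at a high frequency but leaves one hidden at a low frequency largely intact. The key frequencies `(5, 5)` and `(1, 1)` are illustrative assumptions.

```python
import cmath
import math

N = 16  # toy image is N x N


def dft_amplitude(img, u, v):
    """Magnitude of the 2-D DFT coefficient of `img` at frequency (u, v)."""
    total = 0j
    for y in range(N):
        for x in range(N):
            total += img[y][x] * cmath.exp(-2j * math.pi * (u * y + v * x) / N)
    return abs(total)


def embed(img, key, strength=2.0):
    """Toy watermark: a faint cosine pattern at the secret frequency `key`."""
    u, v = key
    return [[img[y][x] + strength * math.cos(2 * math.pi * (u * y + v * x) / N)
             for x in range(N)] for y in range(N)]


def box_blur(img):
    """3x3 mean filter with wrap-around edges: a fixed, non-adaptive low-pass filter."""
    return [[sum(img[(y + dy) % N][(x + dx) % N]
                 for dy in (-1, 0, 1) for dx in (-1, 0, 1)) / 9
             for x in range(N)] for y in range(N)]


gray = [[128.0] * N for _ in range(N)]

# Fraction of the watermark's amplitude that survives the blur, per key frequency.
ratios = {}
for key in [(5, 5), (1, 1)]:  # one high-frequency and one low-frequency secret key
    marked = embed(gray, key)
    ratios[key] = dft_amplitude(box_blur(marked), *key) / dft_amplitude(marked, *key)
print(ratios)
```

In this setup the high-frequency mark keeps under 1% of its amplitude, while the low-frequency mark keeps about 90%. Reaching low-frequency (semantic) marks therefore requires a different kind of change, which is the job of UnMarker’s second, low-frequency stage.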

Unlike previous attacks, which relied on regenerating images with diffusion models or on surrogate detectors, UnMarker operates entirely offline and needs no training data. In tests across seven state-of-the-art watermarking systems, it consistently reduced detection rates below 50%, the threshold at which a watermark is no longer considered a reliable signal.

“We Can’t Trust What We See Anymore”

“People want a way to verify what’s real and what’s not because the damages will be huge if we can’t,” said Andre Kassis, lead author and PhD candidate at Waterloo. “From political smear campaigns to non-consensual pornography, this technology could have terrible and wide-reaching consequences.”

Co-author Urs Hengartner noted that while the precise workings of watermarking tools are typically secret, their goals limit how they can operate. “To be invisible and robust, watermarks must live in the spectral domain,” he explained. “That constraint makes them vulnerable.”
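Hengartner’s point about robustness can be illustrated with a standard property of the discrete Fourier transform: circularly shifting an image changes almost every pixel value but leaves every spectral amplitude unchanged (only the phases move). The Python sketch below checks this on a small random 8×8 array. The function names are illustrative, and real-world robustness also involves compression, resizing, and other edits not modeled here.

```python
import cmath
import math
import random


def amplitude_spectrum(img):
    """Return |F(u, v)| for every frequency of a small 2-D image (naive DFT)."""
    h, w = len(img), len(img[0])
    spec = []
    for u in range(h):
        row = []
        for v in range(w):
            total = 0j
            for y in range(h):
                for x in range(w):
                    total += img[y][x] * cmath.exp(-2j * math.pi * (u * y / h + v * x / w))
            row.append(abs(total))
        spec.append(row)
    return spec


def circular_shift(img, dy, dx):
    """Translate the image by (dy, dx) pixels, wrapping around the edges."""
    h, w = len(img), len(img[0])
    return [[img[(y - dy) % h][(x - dx) % w] for x in range(w)] for y in range(h)]


rng = random.Random(0)
image = [[rng.randint(0, 255) for _ in range(8)] for _ in range(8)]
shifted = circular_shift(image, 3, 5)

# Count pixels whose value changed, and the largest change in any spectral amplitude.
pixels_changed = sum(a != b for ra, rb in zip(image, shifted) for a, b in zip(ra, rb))
spec_a = amplitude_spectrum(image)
spec_b = amplitude_spectrum(shifted)
max_gap = max(abs(a - b) for ra, rb in zip(spec_a, spec_b) for a, b in zip(ra, rb))
print(pixels_changed, max_gap)
```

Nearly all pixels change, while the amplitudes agree up to floating-point error. That stability is what makes amplitudes attractive for watermarking, and it also tells an attacker exactly which quantities to disturb.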

UnMarker’s reach extends beyond conventional watermarks. In testing, it also defeated semantic watermarks, which are embedded by manipulating image structure (like Google’s SynthID or TRW), often by combining subtle cropping with its spectral attacks.

Implications for Deepfake Defenses

The findings suggest that defensive watermarking may be fundamentally flawed as a long-term strategy against malicious AI-generated content. Even combining existing attacks with image purification tools failed to restore watermark detectability after UnMarker had done its work.

Instead, the authors argue, future solutions must look beyond static image tagging. “Watermarking is being promoted as this perfect solution,” said Kassis, “but we’ve shown that this technology is breakable.”

The study, “UnMarker: A Universal Attack on Defensive Image Watermarking,” appears in the proceedings of the 46th IEEE Symposium on Security and Privacy (IEEE S&P 2025).

Conference: IEEE Symposium on Security and Privacy (IEEE S&P 2025)

DOI: 10.1145/3715669.372312
