AI Disclosure Labels Reduce Trust in True Science Posts While Boosting False Ones

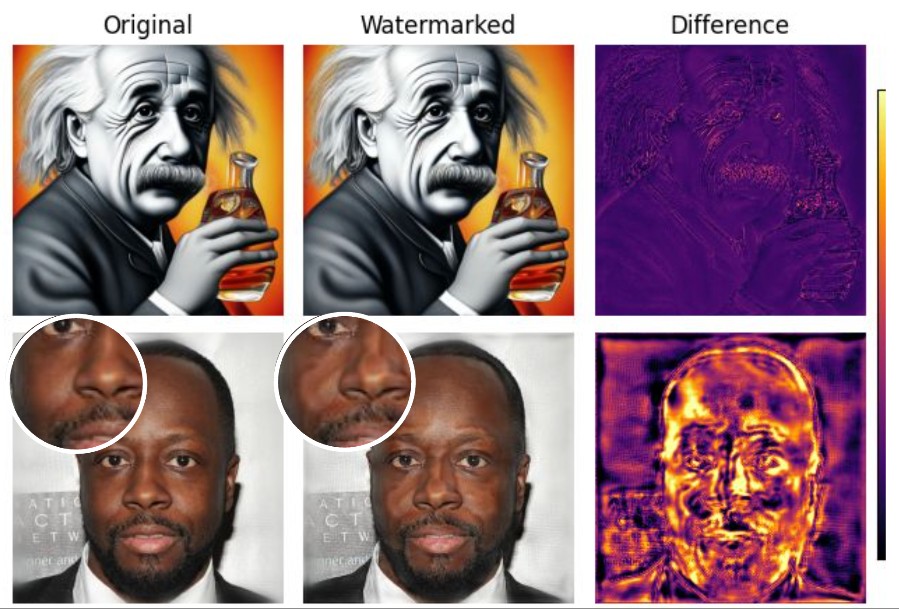

Slapping a label on AI-generated content is the regulatory world’s current favourite answer to the misinformation problem. Transparent, scalable, required by law in China and under the EU AI Act, endorsed by Meta and X. The logic seems obvious enough: tell people a machine wrote something and they’ll scrutinise it harder. They didn’t, as it …