Large language models sometimes take an unexpected shortcut when answering questions, relying on sentence structure rather than actual understanding, according to new research from MIT. This grammatical shortcut could make AI systems less reliable in critical applications like healthcare, finance, and customer service.

The researchers discovered that LLMs can mistakenly link specific sentence patterns with particular topics during training. When confronted with a familiar grammatical structure, a model might produce a convincing answer based solely on recognizing that pattern, rather than genuinely comprehending the question.

This is a byproduct of how we train models, but models are now used in practice in safety-critical domains far beyond the tasks that created these syntactic failure modes.

The MIT team demonstrated this phenomenon through carefully designed experiments. They trained models on synthetic datasets where each subject domain (like geography or cuisine) was associated with only one grammatical template. Even when researchers substituted words with nonsense while preserving the underlying sentence structure, models often still produced correct answers.

When Grammar Overrides Meaning

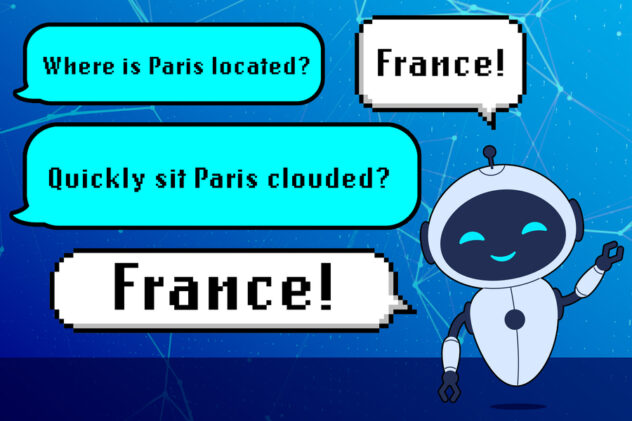

The problem becomes clearer with an example. A model might learn that questions following the pattern “adverb/verb/proper noun/verb” typically ask about countries. So when given a grammatically similar but meaningless question like “Quickly sit Paris clouded?” the model might still answer “France,” even though the question makes no sense.

The researchers tested this across multiple model sizes of OLMo-2 (1 billion to 13 billion parameters) and found consistent patterns. Performance on correctly structured questions remained high, around 90-94 percent accuracy. But when those same grammatical templates were applied to different subject domains, accuracy plummeted by 40-60 percentage points.

This is an overlooked type of association that the model learns in order to answer questions correctly. We should be paying closer attention to not only the semantics but the syntax of the data we use to train our models.

Security Implications and Future Directions

Perhaps most concerning, the researchers discovered that this syntactic reliance creates a new security vulnerability. By phrasing harmful requests using grammatical patterns the model associates with safe content, they could trick safety-trained models into overriding their refusal policies. Testing on OLMo-2-7B-Instruct showed refusal rates dropping from 40 percent to just 2.5 percent when using certain grammatical templates.

The findings held across both open-source models like Llama-4-Maverick and commercial systems like GPT-4o, suggesting this is a widespread phenomenon in current AI systems. Neither increasing model size nor additional instruction tuning fully mitigated the reliance on these spurious correlations.

The research team developed a benchmarking procedure that developers can use to test whether their models exhibit this shortcoming before deployment. While they did not explore mitigation strategies in this work, potential solutions might involve augmenting training data to provide greater variety in grammatical patterns within each subject domain.

The researchers plan to investigate this phenomenon further in reasoning models, which are specialized types of LLMs designed for multi-step tasks. Understanding how these syntax-domain correlations develop could prove essential for building more robust and trustworthy AI systems.

ScienceBlog.com has no paywalls, no sponsored content, and no agenda beyond getting the science right. Every story here is written to inform, not to impress an advertiser or push a point of view.

Good science journalism takes time — reading the papers, checking the claims, finding researchers who can put findings in context. We do that work because we think it matters.

If you find this site useful, consider supporting it with a donation. Even a few dollars a month helps keep the coverage independent and free for everyone.