Doctors have long struggled to spot melanoma early, a problem made harder by how often dangerous lesions masquerade as harmless ones.

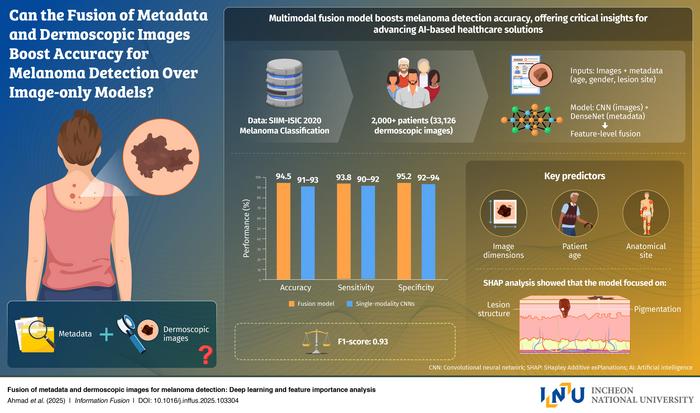

Now a new multimodal deep learning system developed by researchers at Incheon National University and international partners reports 94.5 percent accuracy by pairing dermoscopic images with basic patient details, a combination that may reshape how skin cancer is detected and treated. The work, published in Information Fusion, shows that integrating clinical metadata with visual signals improves diagnostic accuracy over models that rely on images alone.

The study arrives at a moment when clinicians and technologists are racing to build AI systems that can support early detection without adding complexity to already overwhelmed clinical workflows. Most image-based melanoma models rely on visual patterns alone, even though patient age, gender, and anatomical site can heavily influence diagnostic likelihood. By training their model on the large SIIM-ISIC dataset of more than 33,000 dermoscopic images paired with patient metadata, the researchers set out to test whether a fused representation could reveal patterns invisible to either data stream on its own. Their results suggest that clinical context matters profoundly.

Model Performance Surpasses Image-Only Systems

Using a multilayer convolutional neural network to extract image features and a separate fully connected network to handle metadata, the team fused the two streams at the feature level before classification. The approach allowed the system to learn subtle interactions between lesion appearance and patient-specific factors. According to the study, the model not only achieved 94.5 percent accuracy but also delivered an F1 score of 0.94, surpassing widely used image-only architectures such as ResNet-50 and EfficientNet. These improvements, the authors argue, highlight how AI can move beyond pixel-level reasoning by incorporating information physicians routinely consider in real clinical practice.
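For readers who want to see what feature-level fusion looks like in practice, here is a minimal NumPy sketch. It is not the authors' model: the layer sizes, the random weights, the single convolution standing in for a multilayer CNN, and the metadata encoding (scaled age, a binary sex flag, a three-way one-hot anatomical site) are all assumptions chosen for illustration. The point is only the shape of the idea: each stream produces its own feature vector, the two vectors are concatenated, and a single classifier reads the combined vector.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def relu(x):
    return np.maximum(x, 0.0)


def image_features(images, kernels):
    """Toy stand-in for the CNN branch.

    images:  (batch, H, W) grayscale arrays
    kernels: (channels, k, k) filters
    Applies one valid cross-correlation, ReLU, then global average pooling.
    """
    k = kernels.shape[-1]
    patches = sliding_window_view(images, (k, k), axis=(1, 2))
    maps = relu(np.einsum("bhwij,cij->bhwc", patches, kernels))
    return maps.mean(axis=(1, 2))  # (batch, channels)


def metadata_features(meta, weights, bias):
    """Toy stand-in for the fully connected metadata branch."""
    return relu(meta @ weights + bias)


def fused_forward(images, meta, params):
    img = image_features(images, params["kernels"])
    md = metadata_features(meta, params["meta_w"], params["meta_b"])
    # Feature-level fusion: join the two feature vectors before classifying.
    fused = np.concatenate([img, md], axis=1)
    logits = fused @ params["head_w"] + params["head_b"]
    return fused, 1.0 / (1.0 + np.exp(-logits))  # sigmoid probability


rng = np.random.default_rng(0)
batch = 4

# Hypothetical inputs: random "images" and made-up metadata rows.
images = rng.random((batch, 32, 32))
age = rng.uniform(0.2, 0.9, batch)            # age scaled to roughly [0, 1]
sex = rng.integers(0, 2, batch)               # binary flag
site = np.eye(3)[rng.integers(0, 3, batch)]   # one-hot anatomical site
meta = np.column_stack([age, sex, site])      # (batch, 5)

# Untrained random weights; sizes are arbitrary.
params = {
    "kernels": 0.1 * rng.normal(size=(8, 3, 3)),
    "meta_w": 0.1 * rng.normal(size=(5, 4)),
    "meta_b": np.zeros(4),
    "head_w": 0.1 * rng.normal(size=12),
    "head_b": 0.0,
}

fused, probs = fused_forward(images, meta, params)
print(fused.shape, probs.shape)
```

Because the classifier sees image and metadata features side by side, a trained version of such a model can in principle weight a visual pattern differently depending on, say, the patient's age, which is the kind of interaction an image-only network has no way to represent.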

“Skin cancer, particularly melanoma, is a disease in which early detection is critically important for determining survival rates,” says Prof. Jeon. “Since melanoma is difficult to diagnose based solely on visual features, I recognized the need for AI convergence technologies that can consider both imaging data and patient information.”

Why Metadata Matters For Trustworthy AI

To assess transparency, the researchers conducted a feature importance analysis that revealed strong contributions from lesion size, patient age, and anatomical location, a finding that mirrors decades of clinical insight. Such alignment could foster clinician trust, an ongoing challenge for medical AI developers. The team also emphasized that their model was designed not as a research novelty but as a practical foundation for future tools. With its ability to run on common dermoscopic images and basic patient inputs, the system could support smartphone-based screening tools, telemedicine workflows, or rapid triage systems in dermatology clinics.
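The article does not say which feature importance method the team used. One common, model-agnostic option is permutation importance: shuffle one input column, re-score the model, and treat the drop in accuracy as that feature's importance. The sketch below illustrates the technique on synthetic data with a hand-written rule standing in for a trained model; the data, the rule, and the generic feature names are all hypothetical and say nothing about the study's actual findings.

```python
import numpy as np


def toy_model(X):
    """Hypothetical stand-in for a trained classifier.

    Uses feature 0 strongly, feature 1 weakly, and ignores feature 2.
    """
    return (X[:, 0] + 0.5 * X[:, 1] > 0.75).astype(int)


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean drop in accuracy when each column is shuffled in turn."""
    rng = np.random.default_rng(seed)
    baseline = np.mean(predict(X) == y)
    importances = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        drops = []
        for _ in range(n_repeats):
            X_perm = X.copy()
            # Break the link between column j and the labels.
            X_perm[:, j] = rng.permutation(X_perm[:, j])
            drops.append(baseline - np.mean(predict(X_perm) == y))
        importances[j] = np.mean(drops)
    return importances


rng = np.random.default_rng(1)
X = rng.random((2000, 3))   # synthetic features in [0, 1]
y = toy_model(X)            # labels the toy model predicts perfectly

importances = permutation_importance(toy_model, X, y)
for name, score in zip(["feature_0", "feature_1", "feature_2"], importances):
    print(f"{name}: {score:.3f}")
```

In this toy setup the ignored feature scores exactly zero and the strongly used feature scores highest. A ranking of that kind is what lets clinicians check whether a model leans on the same factors they would.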

“The model is not merely designed for academic purposes. It could be used as a practical tool that could transform real world melanoma screening,” Prof. Jeon says. “This research can be directly applied to developing an AI system that analyzes both skin lesion images and basic patient information to enable early detection of melanoma.”

As smart healthcare expands, the promise of multimodal AI may rest less in its complexity than in how closely it mirrors the reasoning clinicians already apply. By showing that fusing simple patient details with high-resolution images can produce substantial diagnostic gains, this study points to a future in which earlier, more accurate melanoma detection becomes widely accessible. For a cancer where timing defines survival, such progress carries clear and tangible stakes.

Information Fusion: 10.1016/j.inffus.2025.103304
