Data loss on a laptop is frustrating. On a quantum computer, it has long been considered permanent, enforced by the laws of physics themselves. Researchers at the University of Waterloo have now developed a method that allows quantum information to be backed up without violating the fundamental laws that govern the quantum world.

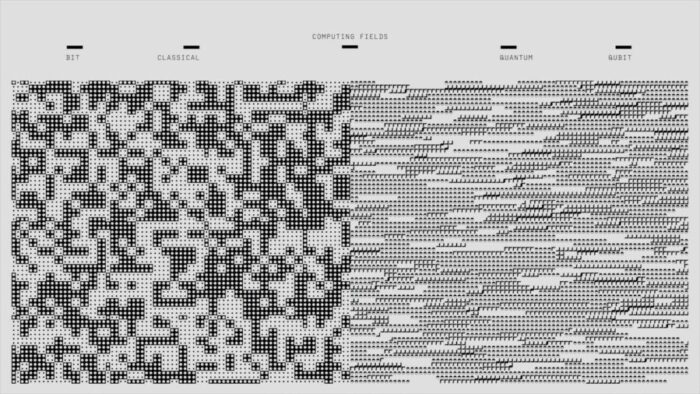

The advance, published in Physical Review Letters, centers on a decades-old problem: the no-cloning theorem. This rule states that you cannot make a perfect copy of an unknown quantum state. A qubit's state can be moved from one place to another, but no procedure can turn one unknown qubit into two faithful duplicates. Classical computers face no such restriction, copying files millions of times per second. Quantum systems have not had that luxury, forcing engineers to accept that if a qubit fails, the information it held vanishes.
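The rule follows from a short argument. Suppose some unitary operation $U$ could copy any state onto a blank qubit prepared in $|0\rangle$. Applying it to two arbitrary states $|\psi\rangle$ and $|\phi\rangle$, and using the fact that unitaries preserve inner products, gives:

$$
U\big(|\psi\rangle\otimes|0\rangle\big) = |\psi\rangle\otimes|\psi\rangle, \qquad U\big(|\phi\rangle\otimes|0\rangle\big) = |\phi\rangle\otimes|\phi\rangle
$$

$$
\langle\phi|\psi\rangle
= \big(\langle\phi|\otimes\langle 0|\big)\,U^\dagger U\,\big(|\psi\rangle\otimes|0\rangle\big)
= \big(\langle\phi|\otimes\langle\phi|\big)\big(|\psi\rangle\otimes|\psi\rangle\big)
= \langle\phi|\psi\rangle^{2}
$$

Since $\langle\phi|\psi\rangle = \langle\phi|\psi\rangle^2$ holds only when the overlap is 0 or 1, such a copier could only handle states that are identical or orthogonal (for example, the known values 0 and 1 of a classical bit), never an arbitrary unknown state. This is the simplest, unitary form of the argument; the theorem also holds for more general copying procedures.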

Physicists Achim Kempf and Koji Yamaguchi approached the constraint from an unexpected angle. Rather than trying to copy quantum information directly, they asked whether it could be copied in encrypted form, producing copies that only become meaningful when combined with a separate key. The answer turned out to be yes, but with a critical limitation: the key is used up by the decryption itself.

Scrambled copies that work exactly once

The technique creates what the researchers call encrypted clones. Each clone contains a complete imprint of the original quantum state, but in a maximally scrambled form. To anyone examining them, these copies look like meaningless noise. The actual information is locked.

The key lives in a separate set of auxiliary qubits that remain entangled with the encrypted copies. When a user needs to recover the data, they combine one encrypted copy with this key. The original quantum state emerges perfectly intact. However, the key is destroyed in the process and cannot unlock another copy.

This one-time-use feature is what allows the method to respect the no-cloning theorem. At no point does usable quantum information exist in multiple places simultaneously. The encrypted copies are genuine, but each stays inaccessible until one is decrypted, and that decryption renders all the others permanently unusable.

“It turns out that if we encrypt the quantum information as we copy it, we can make as many copies as we like. This method is able to bypass the no-cloning theorem because after one picks and decrypts one of the encrypted copies, the decryption key automatically expires, that is the decryption key is a one-time-use key.” – Dr. Koji Yamaguchi, Research Assistant Professor
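The paper's actual circuit is not reproduced here. As a rough illustration of the behavior described above, the toy state-vector simulation below is a hypothetical construction, not the Kempf and Yamaguchi protocol: it spreads one qubit over n "clones" by entangling them (which is not copying), then hides the result behind a quantum one-time pad whose key bits live on two auxiliary key qubits held in superposition. The names `encrypt`, `decrypt`, `KX` and `KZ`, and the choice of exactly two key qubits, are assumptions made for illustration. The script checks four properties: a lone clone looks like pure noise, all clones together reveal nothing without the key, any one clone plus the key returns the original state exactly, and a second decryption with the same key fails.

```python
import numpy as np

# Toy state-vector sketch (an illustrative assumption, not the paper's circuit).
# Qubit layout: 0 = KX key, 1 = KZ key, 2..n+1 = encrypted clones 1..n.
KX, KZ = 0, 1
H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)


def apply_1q(state, gate, q):
    """Apply a 2x2 gate to qubit q of a (2,)*N state tensor."""
    out = np.tensordot(gate, state, axes=([1], [q]))
    return np.moveaxis(out, 0, q)


def cnot(state, c, t):
    """Flip qubit t in every branch where qubit c is |1>."""
    out = state.copy()
    idx = [slice(None)] * state.ndim
    idx[c] = 1
    t_axis = t if t < c else t - 1  # indexing removes axis c
    out[tuple(idx)] = np.flip(state[tuple(idx)], axis=t_axis)
    return out


def cz(state, a, b):
    """Multiply the branch where qubits a and b are both |1> by -1."""
    out = state.copy()
    idx = [slice(None)] * state.ndim
    idx[a] = idx[b] = 1
    out[tuple(idx)] *= -1
    return out


def encrypt(psi, n):
    """Spread psi = a|0> + b|1> over n clones and hide it behind the keys."""
    state = np.zeros((2,) * (n + 2), dtype=complex)
    state[(0,) * (n + 2)] = psi[0]
    state[(0, 0, 1) + (0,) * (n - 1)] = psi[1]
    state = apply_1q(apply_1q(state, H, KX), H, KZ)  # key bits in superposition
    for j in range(3, n + 2):  # entangle, not copy: a|0...0> + b|1...1>
        state = cnot(state, 2, j)
    for j in range(2, n + 2):  # X one-time pad on every clone, keyed by KX
        state = cnot(state, KX, j)
    return cz(state, KZ, 2)    # Z one-time pad (phase flip), keyed by KZ


def decrypt(state, clone):
    """Combine clone number `clone` (1-based) with the key qubits."""
    q = clone + 1
    state = cnot(state, KX, q)  # strip the X pad from this clone
    return cnot(state, q, KX)   # disentangle the clone; key now tracks the others


def qubit_rho(state, q):
    """Reduced density matrix of a single qubit."""
    m = np.moveaxis(state, q, 0).reshape(2, -1)
    return m @ m.conj().T


def clones_rho(state):
    """Density matrix of all clones with the two key qubits traced out."""
    m = state.reshape(4, -1)
    return m.T @ m.conj()


def fidelity(state, q, psi):
    """Overlap <psi|rho_q|psi> between qubit q and the target state."""
    return float(np.real(psi.conj() @ qubit_rho(state, q) @ psi))


theta, phi = 1.1, 0.7
psi = np.array([np.cos(theta / 2), np.exp(1j * phi) * np.sin(theta / 2)])
enc = encrypt(psi, n=4)

print(np.allclose(qubit_rho(enc, 3), np.eye(2) / 2))    # True: clone 2 alone is noise
blank = encrypt(np.array([1, 0], dtype=complex), n=4)
print(np.allclose(clones_rho(enc), clones_rho(blank)))  # True: no key, no information
dec = decrypt(enc, clone=2)
print(round(fidelity(dec, 3, psi), 6))                  # 1.0: clone 2 + key recovers psi
again = decrypt(dec, clone=4)
print(fidelity(again, 5, psi) < 0.99)                   # True: the key is used up
```

In this toy, decrypting one clone leaves the key qubits entangled with the remaining clones, so a second attempt returns a fixed state rather than the original. That mirrors, in miniature, the one-time-key behavior Yamaguchi describes, although the real protocol may be built quite differently.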

Kempf and Yamaguchi demonstrated that their protocol scales efficiently. Creating ten encrypted copies requires only marginally more quantum gate operations than creating two, making it practical for large-scale quantum infrastructure. The encrypted copies can be stored on different servers, even in separate geographic locations. If one server fails, the owner still holds the key, which can unlock any one of the surviving copies.

Infrastructure that was previously impossible

The implications extend beyond simple backups. Quantum networks could distribute encrypted copies across noisy channels, knowing that only one needs to arrive intact for full recovery. Quantum radar systems might use the technique to send signal photons into the field while keeping noise qubits at home, helping identify returning signals with greater precision.

The method also suggests a path toward distributed quantum clouds. In classical computing, redundancy is trivial. Quantum systems have operated under the assumption that fragility is unavoidable. This work shows that assumption was incomplete. Quantum information does not have to live on a single thread. With the right kind of encryption, it can finally have a safety net.

For now, the work remains theoretical. Building hardware capable of implementing encrypted cloning at scale will require significant advances in quantum control and error correction. But the result changes what is considered possible. Quantum information is still delicate, but it no longer has to be irreplaceable.

Physical Review Letters: 10.1103/PhysRevLett.134.010201
