Your brain does something remarkable when you drive through an intersection with faded lane markings. You fill in the gaps. The paint might be worn down to nothing, but you know where the lanes should run. Construction cones block half the view, but you can still picture the road underneath.

Autonomous vehicles can’t do this. When their cameras lose track of a lane line, the software often produces jagged, nonsensical predictions. Sometimes it just gives up entirely. Researchers at Tsinghua University think they’ve found a fix: teach the AI what roads are supposed to look like in the first place.

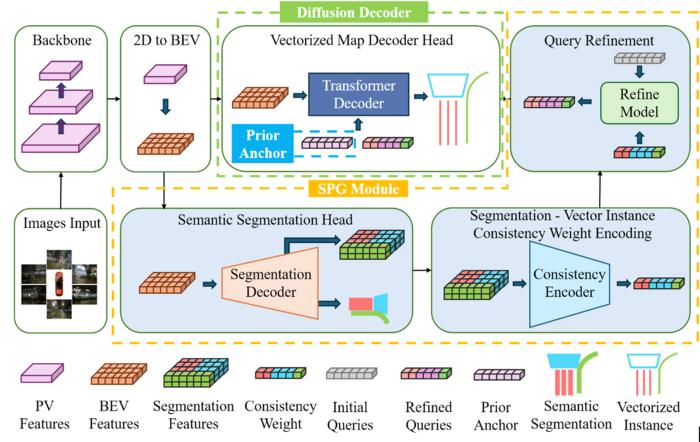

Their framework, called PriorFusion, uses learned expectations about road geometry to keep predictions stable even when the visual data gets messy. The work appears in Communications in Transportation Research.

Why Expectations Matter More Than You’d Think

Most modern self-driving systems convert camera images into overhead maps showing lanes, boundaries, and crossings. This works great on fresh highway paint. It falls apart in cities.

The problem isn’t a lack of training data. It’s that roads follow patterns, and the software doesn’t always respect them. Lane dividers run long and smooth. Crosswalks show up as parallel stripes. Curbs bend gradually, not sharply. When AI ignores these basic rules, predictions drift all over the place.

PriorFusion tackles this by building what amounts to a geometry library. The team analyzed tens of thousands of real road markings to create shape templates. Smooth curves for boundaries. Straight parallel lines for crossings. Long, continuous paths for dividers.

These templates act as starting points. Instead of guessing blindly when the camera can’t see part of a lane, the system begins with a shape that looks like an actual road. It’s the difference between sketching freehand in the dark versus tracing over a faint outline.
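To make the template idea concrete, here is a minimal Python sketch. The article does not say how the team actually built its templates, so everything here is an assumption for illustration: the function names `resample` and `mean_template` are hypothetical, and averaging resampled polylines is just one plausible way to get a "typical" shape, not necessarily the paper's method.

```python
import math

def resample(polyline, n):
    """Resample a polyline to n points evenly spaced by arc length."""
    # Cumulative arc length at each vertex.
    cum = [0.0]
    for (x0, y0), (x1, y1) in zip(polyline, polyline[1:]):
        cum.append(cum[-1] + math.hypot(x1 - x0, y1 - y0))
    total = cum[-1]

    out = []
    seg = 0
    for i in range(n):
        target = total * i / (n - 1)
        # Advance to the segment that contains the target arc length.
        while seg < len(cum) - 2 and cum[seg + 1] < target:
            seg += 1
        span = cum[seg + 1] - cum[seg]
        t = 0.0 if span == 0 else (target - cum[seg]) / span
        (x0, y0), (x1, y1) = polyline[seg], polyline[seg + 1]
        out.append((x0 + t * (x1 - x0), y0 + t * (y1 - y0)))
    return out

def mean_template(polylines, n=5):
    """Average several resampled polylines point by point (illustrative only)."""
    resampled = [resample(p, n) for p in polylines]
    k = len(resampled)
    return [
        (sum(r[i][0] for r in resampled) / k, sum(r[i][1] for r in resampled) / k)
        for i in range(n)
    ]

# Two made-up lane dividers, annotated with different numbers of vertices.
dividers = [
    [(0.0, 0.0), (10.0, 0.0)],
    [(0.0, 2.0), (5.0, 2.0), (10.0, 2.0)],
]
template = mean_template(dividers, n=3)
```

Resampling by arc length puts every marking on the same footing regardless of how many vertices the annotator used, which is what lets shapes be compared or averaged at all. A real system would likely group markings by type and shape before building templates.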

“We design PriorFusion to introduce shape priors into every stage of the perception pipeline, ensuring that road elements remain stable, smooth, and structurally consistent, even under challenging conditions,” Xuewei Tang explains.

Semantic segmentation, which labels each pixel by what it shows, keeps the predictions anchored to what the cameras actually see. No drifting off into fantasy land.

Making It Fast Enough to Matter

Here’s where things get tricky. The team added a generative component that can fill in missing road sections when something blocks the view. Think of it like AI image generation, but for road geometry.

Traditional approaches of this type require dozens of processing steps. Too slow for real-time driving. PriorFusion uses just two iterations of what’s called truncated diffusion. Fast enough to run on standard vehicle hardware.
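The article doesn’t spell out the mechanics, but the general idea behind truncated diffusion, as commonly described, is to start the denoising loop from a lightly noised guess instead of pure noise, so only a few steps are needed. The toy Python sketch below shows that loop structure only. Everything in it is an assumption: `toy_denoiser` is a hypothetical stand-in (a simple moving average) for what would be a learned network, and `truncated_refine`, `roughness`, and the noise level are illustrative names and values, not taken from the paper.

```python
import random

def toy_denoiser(points):
    """Hypothetical stand-in for a learned denoising network.

    Keeps the endpoints and replaces each interior y value with a
    3-point moving average, which smooths out jitter.
    """
    ys = [y for _, y in points]
    smoothed = list(ys)
    for i in range(1, len(ys) - 1):
        smoothed[i] = (ys[i - 1] + ys[i] + ys[i + 1]) / 3.0
    return [(x, y) for (x, _), y in zip(points, smoothed)]

def truncated_refine(anchor, denoise, steps=2, noise_std=0.3, seed=0):
    """Start from a lightly noised anchor shape and run a few denoising steps."""
    rng = random.Random(seed)
    # Begin near a plausible shape rather than from pure noise.
    current = [(x, y + rng.gauss(0.0, noise_std)) for x, y in anchor]
    for _ in range(steps):
        current = denoise(current)
    return current

def roughness(points):
    """Sum of squared second differences in y: lower means smoother."""
    ys = [y for _, y in points]
    return sum(
        (ys[i - 1] - 2 * ys[i] + ys[i + 1]) ** 2 for i in range(1, len(ys) - 1)
    )

# A straight lane template as the anchor (made-up coordinates).
anchor = [(float(i), 2.0) for i in range(5)]
refined = truncated_refine(anchor, toy_denoiser, steps=2)
```

The point of the sketch is the shape of the computation: because the starting guess already resembles a road, the loop runs `steps=2` times rather than dozens.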

The team tested this on nuScenes, a dataset packed with messy urban driving scenarios. Intersections, construction, occlusions. PriorFusion hit 70.4 percent mean average precision. Under stricter evaluation criteria focused on shape accuracy, it beat previous methods by more than seven percent.
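For a sense of what "precision" means for a predicted lane line, here is a small Python sketch. The article doesn’t say which matching rule the team used. The sketch assumes a Chamfer-distance threshold, a common choice in vectorized map benchmarks; the threshold values and the names `chamfer_distance` and `is_match` are illustrative.

```python
import math

def chamfer_distance(pred, gt):
    """Symmetric Chamfer distance between two lists of (x, y) points.

    For each point, find the nearest point in the other list, average
    those distances, then average the two directions.
    """
    def one_way(a, b):
        return sum(min(math.dist(p, q) for q in b) for p in a) / len(a)
    return 0.5 * (one_way(pred, gt) + one_way(gt, pred))

def is_match(pred, gt, threshold):
    """Count a prediction as correct if it lies within the threshold (meters)."""
    return chamfer_distance(pred, gt) <= threshold

# A ground-truth divider and a prediction offset sideways by 0.4 m (made-up data).
gt = [(0.0, 0.0), (5.0, 0.0), (10.0, 0.0)]
pred = [(0.0, 0.4), (5.0, 0.4), (10.0, 0.4)]

loose = is_match(pred, gt, threshold=0.5)   # passes a looser threshold
strict = is_match(pred, gt, threshold=0.2)  # fails a stricter one
```

Tightening the threshold is one way an evaluation can be made stricter: a prediction that is roughly in the right place stops counting unless its shape closely follows the real marking.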

The gains showed up exactly where you’d expect. Intersections where other systems fragment or lose track entirely. Merges with partial occlusion. Places where the road stops making obvious visual sense but still follows geometric rules.

And the computational cost? Minimal. Two extra decoder iterations add almost no latency.

The framework plugs into existing perception systems without major surgery. This matters as autonomous driving moves away from relying on pre-installed high-definition maps of every city. You can’t map construction zones that appear overnight. You can’t pre-load every faded crosswalk.

But you can teach a system that crosswalks usually look a certain way. That lanes tend to run parallel. That boundaries curve smoothly rather than zigzagging randomly.

Picture an autonomous vehicle approaching an intersection where delivery trucks block half the view and morning shadows obscure the crosswalk. Instead of producing fragmented, unreliable predictions, it generates smooth, structurally sound geometry based on learned experience. The difference between guessing and knowing what roads are supposed to look like.

Communications in Transportation Research: 10.1016/j.commtr.2025.100229
