The Chinese suanpan has been around for roughly 1,000 years. Slide a bead up, slide a bead down — each rod operates independently, and the number it represents depends only on where the beads sit. Break one rod and the rest carry on just fine. It is, in a sense, the world’s most fault-tolerant calculator.

Now a team at Tsinghua University in Beijing has borrowed that logic for a photonic computing chip, and the result could help solve one of the gnarliest bottlenecks facing AI hardware. Their architecture, also called SUANPAN, replaces the beads with pairs of tiny lasers and light detectors, each one working on its own little piece of a calculation without needing to know what any of its neighbours are doing.

The problem they’re tackling is scalability — or the lack of it. Most photonic computing designs rely on beams of light interacting with one another, bouncing through beam splitters, diffraction gratings, and arrays of liquid crystal cells to perform the matrix multiplications that underpin modern AI. That approach works, but there’s a catch: because the light beams are coupled together, adding more components doesn’t simply give you more computing power. Errors compound. Losses pile up. You hit a ceiling pretty quickly.

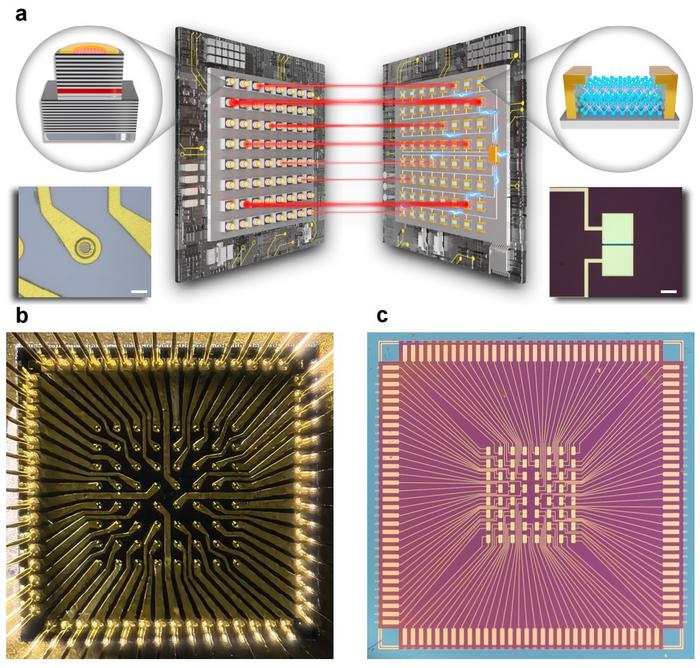

Yidong Huang and colleagues at Tsinghua, working with collaborators at Peking University and Berxel Photonics, took a different tack. Instead of forcing light beams to interact, they kept them apart entirely. Each emitter-detector pair — they call these BEADs, short for Bit Encoding and Analog Detecting — handles the multiplication of two numbers on its own. One number gets encoded as the brightness of a laser; the other is represented by whether the detector is switched on or off. The photocurrent that comes out is proportional to the product of the two. Wire all the detectors together and, thanks to Kirchhoff’s current law, you get the sum of all those products. That’s a vector inner product — the bread-and-butter operation behind neural networks and optimisation algorithms.
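To make that arithmetic concrete, here is a minimal Python sketch of a single readout. The function name `bead_inner_product`, the `responsivity` parameter, and the example values are illustrative assumptions rather than anything taken from the paper, and the model ignores the noise and nonlinearity a real device would have.

```python
def bead_inner_product(laser_power, detector_on, responsivity=1.0):
    """Idealised readout: sum of the photocurrents from independent BEADs.

    laser_power  -- one brightness value per BEAD (first operand)
    detector_on  -- 1 if that BEAD's detector is switched on, else 0 (second operand)
    responsivity -- assumed photocurrent per unit of optical power (hypothetical)
    """
    if len(laser_power) != len(detector_on):
        raise ValueError("each laser needs exactly one detector")
    # Each BEAD multiplies its own pair of numbers, independently of the others.
    photocurrents = [responsivity * p * d for p, d in zip(laser_power, detector_on)]
    # Wiring the detectors together adds the currents (Kirchhoff's current law).
    return sum(photocurrents)


brightness = [0.2, 0.5, 0.0, 1.0]  # example values, arbitrary units
switches = [1, 0, 1, 1]
total_current = bead_inner_product(brightness, switches)
```

Because no term in the sum depends on any other, a BEAD that fails simply contributes zero current and leaves the remaining products untouched.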

What makes this clever, perhaps even a bit elegant, is the encoding scheme. Conventional photonic systems need banks of digital-to-analogue converters to translate electronic data into light signals, and those converters are power-hungry and bulky. The SUANPAN sidesteps the issue by encoding digital information across multiple BEADs in binary, the same way an abacus uses bead positions. Each BEAD only ever needs to be on or off — 1-bit information — so no converter is required on the input side. On the output, a single analogue-to-digital converter reads the total photocurrent. It’s a tidy trick.

For their proof-of-concept, the researchers built a chip with a grid of 64 vertical-cavity surface-emitting lasers (VCSELs) paired with 64 photodetectors made from MoTe₂, a two-dimensional material just a few atoms thick. The VCSELs emit at 850 nanometres; the MoTe₂ detectors can have their sensitivity tuned by adjusting the bias voltage, which is how the team sets different binary weights for different bits.
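One way to picture the binary encoding is as a bit-sliced multiplication. The sketch below assumes that each BEAD handles one pair of bits, with its laser on or off for a bit of the first number, its detector on or off for a bit of the second, and a detector sensitivity of 2^(a+b) standing in for the bias-tuned binary weight. That mapping, and the names `to_bits` and `bit_sliced_inner_product`, are assumptions made for illustration; the article does not spell out the exact layout the team used.

```python
def to_bits(value, n_bits):
    """Bits of a non-negative integer, least significant first."""
    return [(value >> k) & 1 for k in range(n_bits)]


def bit_sliced_inner_product(x, w, n_bits):
    """Inner product of two integer vectors using only on/off units."""
    total_current = 0
    for xi, wi in zip(x, w):
        for a, x_bit in enumerate(to_bits(xi, n_bits)):      # laser on or off
            for b, w_bit in enumerate(to_bits(wi, n_bits)):  # detector on or off
                sensitivity = 1 << (a + b)  # assumed binary weight for this bit pair
                total_current += sensitivity * x_bit * w_bit
    return total_current


x = [3, 5, 2]
w = [4, 1, 7]
result = bit_sliced_inner_product(x, w, n_bits=3)
direct = sum(xi * wi for xi, wi in zip(x, w))  # ordinary inner product for comparison
```

In this toy model the bit-sliced sum and the direct inner product agree exactly, which is the property that lets on/off units stand in for a digital-to-analogue converter on the input side.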

The results were solid. Random vector inner products came back with fidelities above 98 percent across 2-bit, 4-bit, and 8-bit precisions, and stayed above 95 percent as the dimensionality increased. The team then reconfigured the same hardware (no physical changes, just reprogramming which BEADs were on or off) to tackle two standard AI benchmarks. The chip solved a randomly generated 1024-dimensional Ising optimisation problem, which is apparently the highest dimensionality any optical Ising machine using a heuristic algorithm has managed. And a neural network running on the SUANPAN recognised handwritten digits from the MNIST dataset with 88 percent accuracy, close to the 90 percent the same network achieved running on a conventional computer.
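For readers wondering why an inner-product engine is useful for Ising problems: deciding whether to flip a spin only requires that spin's local field, which is itself an inner product between one row of the coupling matrix and the current spins. The sketch below uses plain greedy descent as a stand-in heuristic, since the article does not say which algorithm the team ran, and it assumes the common energy convention E = -½ Σ J_ij s_i s_j with a symmetric coupling matrix and a zero diagonal. All function names are hypothetical.

```python
def local_field(J, spins, i):
    """Inner product of row i of the couplings with the spin vector."""
    return sum(J[i][j] * spins[j] for j in range(len(spins)))


def ising_energy(J, spins):
    """E = -1/2 * sum_ij J_ij s_i s_j (symmetric J, zero diagonal assumed)."""
    return -0.5 * sum(spins[i] * local_field(J, spins, i) for i in range(len(spins)))


def greedy_descent(J, spins, max_sweeps=100):
    """Flip any spin whose flip lowers the energy; stop when none does."""
    spins = list(spins)
    for _ in range(max_sweeps):
        flipped = False
        for i in range(len(spins)):
            delta_e = 2 * spins[i] * local_field(J, spins, i)  # energy change if spin i flips
            if delta_e < 0:
                spins[i] = -spins[i]
                flipped = True
        if not flipped:
            break
    return spins


# Toy 4-spin ferromagnet: every pair of spins prefers to align.
J = [[0, 1, 1, 1],
     [1, 0, 1, 1],
     [1, 1, 0, 1],
     [1, 1, 1, 0]]
start = [1, -1, 1, 1]
final = greedy_descent(J, start)
```

Greedy descent can get stuck in local minima on harder instances, which is why practical Ising machines use more elaborate heuristics; the point here is only that each step reduces to the kind of sum the BEADs compute.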

Neither figure will knock your socks off on its own. But the point isn’t raw performance — it is that the architecture did all of this with independent units that could, in principle, be scaled up by just stamping out more laser-detector pairs. The team reckon that with emitters and detectors smaller than 10 micrometres and bandwidths above 1 gigahertz — both achievable with existing technology — a single chip could reach computing speeds above 1 peta-operation per second for 1-bit quantisation. For context, that’s roughly in the territory where optical computing starts to look competitive with electronic accelerators for certain workloads.
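A rough back-of-envelope check shows what that projection implies. The calculation below assumes one operation per BEAD per clock cycle and a square grid at a 10-micrometre pitch; both are simplifying assumptions made here, not figures from the paper, and the variable names are illustrative.

```python
import math

target_ops_per_s = 10**15  # 1 peta-operation per second
bandwidth_hz = 10**9       # 1 gigahertz per BEAD
pitch_um = 10              # assumed centre-to-centre spacing, in micrometres

# Assumption: each BEAD contributes one operation per clock cycle.
beads_needed = target_ops_per_s // bandwidth_hz

# Assumption: the BEADs are laid out in a square grid.
beads_per_side = math.isqrt(beads_needed)
side_length_mm = beads_per_side * pitch_um / 1000
```

Under those assumptions the target works out to about a million BEADs in a 1,000 by 1,000 grid roughly 10 millimetres on a side, which is why the projection hinges on shrinking the emitters and detectors rather than on any new physics.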

There are caveats, of course. The current prototype is modest: 64 BEADs, with laser and detector arrays sitting about 1.5 metres apart, connected by a zoom lens. Integrating the emitters and detectors onto the same chip would slash propagation delays and boost efficiency enormously — at the moment, most of the light from each laser misses the detector channel entirely, because the spot size is around 200 micrometres wide while the detector channel is only about 10 micrometres across. That’s a lot of wasted photons. The MoTe₂ detectors also degraded over three months of testing, which knocked the accuracy of a two-layer neural network down to 84 percent.

Still, the underlying idea has a certain stubbornness to it that makes it hard to dismiss. Because the BEADs don’t talk to each other optically, a dead unit doesn’t poison the rest; you just lose one dimension. No phase correction is needed, either, which removes one of the fiddliest engineering headaches in photonic computing. And the whole thing can be reconfigured in software for different tasks — Ising problems one moment, neural networks the next — without touching the hardware.

We are probably still some years from seeing photonic processors like this inside data centres. But if AI’s appetite for matrix maths keeps growing at its current pace, and there is no sign of it slowing, then an architecture that scales the way transistors once did — by simply making more copies of the same unit — might be exactly what the field needs. The abacus, it turns out, had the right idea all along.

Study link: https://www.nature.com/articles/s41377-025-02059-7
