She was sure the researchers were cheating. One of the volunteers in Nima Mesgarani’s lab at Columbia University had electrodes implanted in her brain, two voices playing simultaneously through a speaker, and the creeping conviction that somewhere in the room someone was quietly turning a dial. The voices kept shifting in and out. The one she wanted to follow would swell, the other recede. It felt, she told the team, like someone was reading her mind. Which, in a way, they were.

The experiment Mesgarani and his colleagues have been building toward for more than a decade is deceptively simple in concept. You’re at a party. Someone is talking to you and three other conversations are happening around you; somehow your brain locks onto the one you care about and filters the rest into background noise. This is the cocktail party effect, and we all do it unconsciously, constantly, and with a fluency that current hearing technology cannot come close to matching. Modern hearing aids amplify everything indiscriminately. They can suppress certain kinds of background noise, traffic for instance, but faced with a roomful of competing voices they just turn them all up equally. The result for people with hearing loss is often a wall of undifferentiated sound.

The approach Mesgarani’s team has been developing since around 2012 starts not with microphones but with neurons. Back then, his lab made a key discovery: the auditory cortex produces a distinct neural signature for whichever speaker a person is paying attention to. The timing of peaks and valleys in brainwave activity mirrors the rhythms of attended speech in a way that unattended speech doesn’t. That finding raised an obvious question. If you could read that signature in real time, could you use it to automatically adjust what a person hears?

Brainwaves in the driving seat

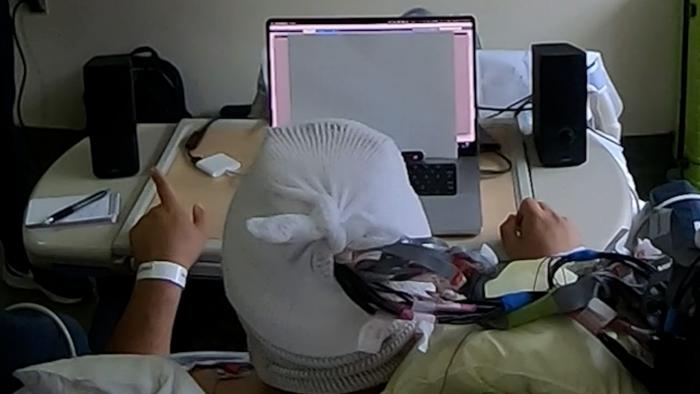

The new study, published in Nature Neuroscience, provides the clearest answer yet. Mesgarani’s team worked with four patients who were already undergoing clinical monitoring for epilepsy, meaning they already had intracranial electrodes placed directly on or within their brain tissue. High-resolution recordings from those electrodes gave the researchers an unusually clean neural signal to work with. The volunteers listened to pairs of overlapping conversations, each spatially separated by about 15 degrees, with pedestrian noise or background babble mixed in. A machine-learning algorithm trained on each participant’s brain data learned to reconstruct the speech envelope of whatever they were attending to, then compare it against the two audio streams to figure out which conversation they were following. When the system activated, it gradually turned up the attended conversation by up to 9 decibels and quieted the other. Gradually, to avoid jarring transitions; the researchers found the experience worked best when the shifts felt seamless.
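For readers who want to see the decision step in concrete terms, here is a minimal sketch in Python. It is not the team's code. It assumes the comparison is a Pearson correlation between the brain-reconstructed envelope and each candidate audio envelope (the article says only that the two are compared), and the function names and the 1 dB ramp step are hypothetical. The 9 dB ceiling is the one figure taken from the study as reported.

```python
import math

MAX_BOOST_DB = 9.0   # reported ceiling on the boost for the attended talker
STEP_DB = 1.0        # hypothetical per-update ramp step, chosen for illustration


def pearson(x, y):
    """Pearson correlation of two equal-length sequences (0.0 if either is flat)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def pick_attended(reconstructed, env_a, env_b):
    """Return 'A' or 'B': whichever audio envelope better matches the
    envelope reconstructed from neural activity (assumed comparison rule)."""
    if pearson(reconstructed, env_a) >= pearson(reconstructed, env_b):
        return "A"
    return "B"


def ramp_gain(current_db, target_db, step_db=STEP_DB):
    """Move the gain one bounded step toward the target, so volume never jumps."""
    delta = target_db - current_db
    if abs(delta) <= step_db:
        return target_db
    return current_db + math.copysign(step_db, delta)


def db_to_amplitude(db):
    """Convert a gain in decibels to a linear amplitude ratio."""
    return 10 ** (db / 20)
```

In this sketch, each update would call `pick_attended` and then nudge the attended talker's gain toward `MAX_BOOST_DB` with `ramp_gain`. A 9 dB boost corresponds to an amplitude ratio of roughly 2.8, which `db_to_amplitude(9.0)` confirms.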

Across 80 trials, decoding accuracy ranged from around 72 to 90 percent, well above the 50 percent chance level, and the system worked whether participants were directed to a particular voice by a visual cue or simply let their attention wander naturally. It tracked both deliberate attention switches (mean response time: just over five seconds) and self-initiated ones. Volunteers preferred the system-on condition in 75 to 95 percent of trials. Pupil dilation, a physiological proxy for cognitive effort, decreased significantly when the system was active. “For this to work in real time, the system has to be very fast, accurate and stable for the experience to feel pleasant for the listener,” Mesgarani said. One volunteer recalled her uncle, who had hearing difficulties. “Can you imagine if this technology existed in a world [where] … he could access it? He might actually live a much more peaceful … life.”

The team also ran a separate validation with 40 people who do have hearing loss. These participants listened to audio that had already been processed by the real-time brain-decoding system, modulated according to the neural signals of the intracranial participants. People with more severe impairment showed the largest intelligibility gains, which makes a certain intuitive sense: the harder the listening task, the more the boost matters. “We have developed a system that acts as a neural extension of the user,” Mesgarani said, “leveraging the brain’s natural ability to filter through all the sounds in a complex environment to dynamically isolate the specific conversation they wish to hear.”

From theory to proof

The phrase “proof of concept” tends to get overused, but there’s a reason Vishal Choudhari, the paper’s first author and the engineer who led system development, keeps reaching for it. Hundreds of studies over the past decade have inched toward the same goal, improving decoder algorithms, testing different electrode configurations, trying to separate voices from mixed audio. None had demonstrated that all the components could work together in real time to actually help a person hear better. “The central unanswered question,” Choudhari said, “was whether brain-controlled hearing technology could move beyond incremental advances, towards a prototype that could help someone hear better in real time.” What the Columbia team has now done is clear that bar, at least under controlled conditions. Their decoder operated on high-quality intracranial signals, which are not the kind of recordings you can get without neurosurgery. The researchers are clear about this. The intracranial approach was chosen deliberately to establish what’s possible when you have the richest possible neural signal, not as a blueprint for a device you’d sell in a pharmacy.

Still, the gap between this benchmark and something wearable has been narrowing. Recent advances in speech separation algorithms mean that real-time systems can now operate on mixed audio rather than pre-separated streams; the researchers ran a control analysis showing their decoding performance was nearly identical whether they used clean audio sources or algorithmically separated ones. The neurotechnology landscape is also changing: implantable devices are becoming more routine for epilepsy, depression, movement disorders. More than 430 million people worldwide live with disabling hearing loss, according to WHO estimates, and untreated hearing loss is one of the leading modifiable risk factors for dementia. There’s a clinical argument for a minimally invasive implant that doesn’t amplify everything equally.

There’s also a subtler argument for going via the brain at all rather than using, say, eye-tracking or head orientation as a proxy for attention. Those approaches work when you’re looking directly at whoever you want to hear. They fail when talkers are co-located, when you’re listening to someone behind a door, when you’re covertly eavesdropping on the next table. The brain, by contrast, carries information not just about where you’re looking but about what’s semantically relevant to you, what you’re trying to follow, what you’ve decided to filter. It knows things that your eyes don’t reveal.

One thing the Columbia volunteers found, in post-experiment debriefings, was that the system felt natural. Not assistive in the mechanical sense. They could let their attention wander between speakers and the system would follow, the audio landscape shifting around them. It seemed like science fiction, one of them said. Perhaps. Or perhaps it’s closer to what human hearing has always been doing, just made visible enough to copy.

Frequently Asked Questions

How does the brain-controlled hearing system actually work?

The system uses electrodes that record electrical activity directly from the brain’s auditory cortex. A machine-learning algorithm learns to reconstruct the rhythmic pattern of whichever speech stream a person is attending to, then compares that pattern against the actual audio streams being played. Whichever conversation best matches the brain’s reconstructed signal gets turned up; the other is attenuated. The whole process runs continuously, updating every half second or so.

Would this require brain surgery to use?

The current study used intracranial electrodes placed directly on or within brain tissue, which does require surgery. The researchers chose this approach deliberately, to establish a performance benchmark using the highest-quality neural signals available. They acknowledge that practical devices will need to work with less invasive recordings, and the neurotechnology field is moving toward smaller implantable systems. Non-invasive EEG-based versions have been explored but tend to achieve lower decoding accuracy.

Why can’t hearing aids already do this by separating voices from background noise?

Conventional hearing aids can suppress certain types of background noise, such as traffic or steady hums, but they cannot infer which of several competing voices the listener actually wants to follow. Faced with multiple simultaneous speakers, they amplify all of them. The brain-controlled approach bypasses this limitation because it reads the listener’s intent directly, rather than trying to infer it from acoustic properties of the sound alone.

How quickly does the system respond when you shift attention to a different speaker?

In the Columbia experiments, the average switch time was just over five seconds: that is how long the audio balance took to flip toward a newly attended speaker. The researchers note this isn’t a neurological limit; it reflects deliberate design choices, including a four-second decoding window and a smoothing algorithm that prevents abrupt volume jumps. Faster response times are technically possible but come at the cost of stability.
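The trade-off between speed and stability can be illustrated with a toy model in Python. This is a sketch under stated assumptions, not the study's algorithm: it treats each half-second update as a clean vote for one talker, averages the votes over the decoding window, and then applies exponential smoothing with a hypothetical factor `ALPHA`. The window length and update period come from the article; everything else is made up for illustration, and the numbers it produces are not meant to reproduce the five-second figure.

```python
from collections import deque

UPDATE_PERIOD_S = 0.5   # article: updates "every half second or so"
WINDOW_S = 4.0          # article: four-second decoding window
ALPHA = 0.3             # hypothetical smoothing factor (0 < ALPHA <= 1)


def switch_latency(window_s=WINDOW_S, alpha=ALPHA, update_s=UPDATE_PERIOD_S):
    """Seconds until the smoothed balance flips after the listener
    switches from talker A (+1) to talker B (-1) in this toy model."""
    n = int(round(window_s / update_s))
    # The listener has been attending A for a while, so the window is all +1.
    window = deque([1.0] * n, maxlen=n)
    balance = 1.0   # +1 means audio fully favours A, -1 fully favours B
    steps = 0
    while balance > 0:
        window.append(-1.0)                 # every new vote now favours B
        window_score = sum(window) / n      # evidence averaged over the window
        balance = (1 - alpha) * balance + alpha * window_score
        steps += 1
    return steps * update_s
```

With these illustrative settings, `switch_latency()` returns 3.5 seconds, while shrinking the window to one second (`switch_latency(window_s=1.0)`) returns 1.5. The shorter window reacts faster, but in a real system it would also average over less neural data, so each decision would be noisier. That is the stability cost the researchers describe.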

Who would benefit most from this kind of technology?

The supplementary validation study found that people with more severe hearing loss showed the largest gains in speech intelligibility, which makes sense: the harder the listening task, the more meaningful a boost in the attended signal becomes. But the researchers also suggest it could reduce listening fatigue for anyone in challenging acoustic environments, including classrooms, restaurants, and workplaces, whether or not they have diagnosed hearing loss.

https://doi.org/10.1038/s41593-026-02281-5
