A fertilized egg becomes a complex organism through thousands of cells folding, dividing, and sliding past each other in precise sequence. For decades, biologists could watch this happen but couldn’t forecast what any individual cell would do next. That changed when MIT engineers trained a deep-learning model on rare, high-resolution videos of fruit fly embryos developing in real time.

The system, called MultiCell, predicts cell behavior during gastrulation, the first chaotic hour when a smooth embryo rapidly reorganizes into defined structures. In tests published December 15 in Nature Methods, the model forecast the movements of roughly 5,000 cells with 90 percent accuracy, tracking which cells would fold inward, which would split, and when neighbors would rearrange.

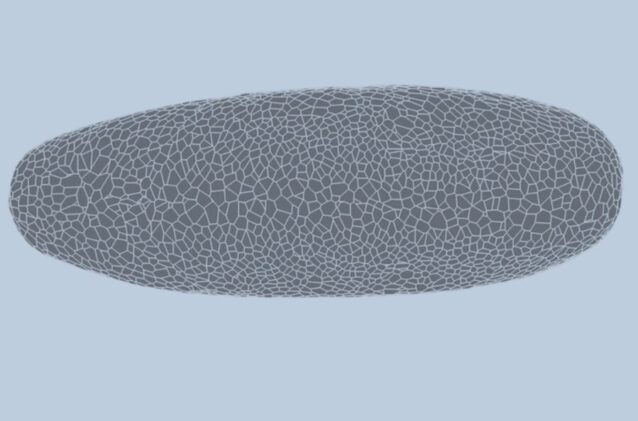

What makes the approach work is how it represents tissue. Most models force a choice: treat cells either as isolated points drifting through space, or as foam-like bubbles pressed against each other. Both capture something real, but each omits crucial information about how cells actually interact.

“There’s a debate about whether to model as a point cloud or a foam. But both of them are essentially different ways of modeling the same underlying graph, which is an elegant way to represent living tissues,” Haiqian Yang, an MIT graduate student and co-author, explains.

The MIT team’s dual-graph structure tracks cells as both points and connected boundaries simultaneously. This lets the model learn geometric properties that matter for prediction: whether a cell currently touches a neighbor, how junction lengths change, whether a fold is forming. During gastrulation, the embryo surface goes from entirely smooth to creased at multiple angles, creating a shifting landscape where local geometry determines what happens next.
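To make the point-cloud versus foam idea concrete, here is a minimal sketch of how both views can describe the same graph. It is not the MultiCell implementation. The toy 2x2 tissue, the `vertices` and `cells` tables, and the helper names (`polygon_edges`, `centroid`, `build_dual_graph`) are all hypothetical, chosen only for illustration. The "foam" view stores each cell as a polygon of shared vertices. The "point" view is derived from it: one node per cell, with an edge wherever two cells share a boundary.

```python
from itertools import combinations
from math import dist

# Hypothetical toy tissue: four square cells arranged in a 2x2 grid.
# "Foam" view: vertex positions plus, for each cell, its polygon of vertex ids.
vertices = {
    0: (0.0, 0.0), 1: (1.0, 0.0), 2: (2.0, 0.0),
    3: (0.0, 1.0), 4: (1.0, 1.0), 5: (2.0, 1.0),
    6: (0.0, 2.0), 7: (1.0, 2.0), 8: (2.0, 2.0),
}
cells = {
    "A": [0, 1, 4, 3],
    "B": [1, 2, 5, 4],
    "C": [3, 4, 7, 6],
    "D": [4, 5, 8, 7],
}


def polygon_edges(vertex_ids):
    """Return the undirected boundary edges of a polygon as frozensets."""
    n = len(vertex_ids)
    return {
        frozenset((vertex_ids[i], vertex_ids[(i + 1) % n]))
        for i in range(n)
    }


def centroid(vertex_ids):
    """Mean of the polygon's vertices (a simple stand-in for a cell center)."""
    xs = [vertices[v][0] for v in vertex_ids]
    ys = [vertices[v][1] for v in vertex_ids]
    return (sum(xs) / len(xs), sum(ys) / len(ys))


def build_dual_graph(cells):
    """Derive the "point" view from the "foam" view.

    Returns (nodes, junctions): nodes maps cell name -> center position,
    junctions maps a pair of touching cells -> shared boundary length.
    """
    nodes = {name: centroid(vs) for name, vs in cells.items()}
    junctions = {}
    for a, b in combinations(sorted(cells), 2):
        shared = polygon_edges(cells[a]) & polygon_edges(cells[b])
        if shared:
            junctions[(a, b)] = sum(
                dist(vertices[u], vertices[v])
                for u, v in (tuple(edge) for edge in shared)
            )
    return nodes, junctions


nodes, junctions = build_dual_graph(cells)
for pair, length in sorted(junctions.items()):
    print(pair, length)
```

In this toy layout, cells A and D meet only at a corner, so they share no junction and are not neighbors. Features like the ones the article mentions (whether two cells touch, how a junction's length changes between frames) could be read off a structure like this at each time step.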

Predicting Not Just If, But When

Training required extraordinarily detailed data—three videos capturing entire embryos in three dimensions, with individual cell boundaries and nuclei labeled at single-cell resolution over a full hour. After learning from those datasets, the system successfully predicted behavior in a fourth, unseen embryo.

The model doesn’t just forecast whether a cell will change. It pinpoints timing: this cell will detach from its neighbor in seven minutes, not eight. That temporal precision matters because it reveals the mechanical forces driving development. Knowing when a fold begins tells you something about the pressures building in surrounding tissue.

Associate professor Ming Guo, who led the work, notes the method could extend to other species like zebrafish and mice. More significantly, it might expose how disease-prone tissues diverge from healthy development before symptoms appear. Lung tissue in people with asthma, for instance, develops with different cell dynamics than healthy tissue—differences that emerge early and compound over time.

From Fruit Flies to Human Diagnosis

Capturing those subtle developmental variations could improve early detection or drug screening. Cancer tissues also show altered architecture long before tumors form. If comparable imaging data existed for human tissue development, the same predictive framework could potentially flag disease trajectories at their origin.

The main barrier isn’t the modeling approach; it’s data availability. High-quality, whole-tissue videos at single-cell resolution remain rare and difficult to generate for complex organisms. Light sheet microscopy can capture fruit fly embryos completely, but scaling that capability to mammalian systems poses technical challenges that haven’t been solved.

From a computational standpoint, the researchers say the method is ready. The dual-graph architecture can handle the complexity; what’s missing is sufficient training material. As imaging technology advances and more detailed developmental videos become available, tools like MultiCell could do for living tissues what deep learning has done for protein structures: turn biological motion from observation into prediction.

For now, the system offers something more modest but still valuable—a way to test hypotheses about how local cell interactions create global patterns. By accurately modeling one hour of one species’ development, the team has shown that predicting cellular choreography isn’t just theoretically possible. It’s achievable, testable, and potentially scalable to systems that matter for human health.

Nature Methods: 10.1038/s41592-025-02983-x
