Four people with severe paralysis have successfully controlled a computer using only their silent inner voice, marking a new chapter in brain-computer interface technology. The system can decode imagined sentences from a vocabulary of 125,000 words with up to 74% accuracy, according to research published in Cell.

Unlike previous brain interfaces that required users to physically attempt speaking, a tiring process for paralyzed patients, this technology reads the brain’s electrical patterns during silent thought. “If you just have to think about speech instead of actually trying to speak, it’s potentially easier and faster for people,” explains Benyamin Meschede-Krasa, co-first author of the Stanford University study.

How Silent Speech Works in the Brain

The research team implanted hair-thin electrodes into the motor cortex of participants with amyotrophic lateral sclerosis (ALS) or brainstem stroke. What they discovered challenges conventional thinking about how the brain processes speech.

When people imagine speaking, their motor cortex—the brain region controlling movement—shows remarkably similar patterns to actual speech attempts. The key difference? Inner speech produces weaker brain signals, like turning down the volume on neural activity without changing the underlying tune.
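The "same tune, lower volume" idea can be made concrete with a small sketch. It assumes, purely for illustration, that an inner-speech pattern is a scaled-down copy of the attempted-speech pattern; the electrode values and the 0.4 scaling factor are invented, not taken from the study. Under that assumption, cosine similarity (which ignores overall amplitude) stays at 1 while the signal strength drops.

```python
import math


def vector_norm(values):
    """Euclidean length of a list of numbers (overall signal strength)."""
    return math.sqrt(sum(v * v for v in values))


def cosine_similarity(a, b):
    """Similarity of two patterns' shapes, independent of their amplitude."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (vector_norm(a) * vector_norm(b))


# Hypothetical activity across five electrodes during attempted speech
# (made-up numbers, for illustration only).
attempted = [4.0, 1.0, 3.0, 0.5, 2.0]

# Assumption: inner speech is the same pattern at lower "volume".
VOLUME = 0.4
inner = [VOLUME * x for x in attempted]

similarity = cosine_similarity(attempted, inner)
amplitude_ratio = vector_norm(inner) / vector_norm(attempted)

print(f"pattern similarity: {similarity:.3f}")
print(f"relative signal strength: {amplitude_ratio:.2f}")
```

Real recordings are noisy, so measured similarity would fall below 1; the sketch only shows why a weaker signal can still carry the same pattern.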

“This is the first time we’ve managed to understand what brain activity looks like when you just think about speaking,” says lead author Erin Kunz.

The finding suggests our brains use the same neural highways for both silent and spoken words, just at different intensities.

Beyond Intentional Communication

Perhaps most intriguingly, the system occasionally picked up unintended inner speech. During counting exercises, participants were not told to use silent words, yet the computer detected number sequences as people mentally tallied objects on screen. This hints at the possibility of decoding thought patterns more broadly, something that could reshape neural decoding research and human-computer interaction.

One participant demonstrated this phenomenon during a memory task involving arrow sequences. When instructed to remember three directional arrows, brain activity suggested they were silently rehearsing “up right up” or similar verbal descriptions. But when shown simple line drawings instead, this verbal activity disappeared—indicating the brain chooses different memory strategies depending on the task.

Privacy Safeguards Built In

Recognizing potential privacy issues, researchers developed multiple protection systems. The most elegant involves a mental password: users think “chitty chitty bang bang” to unlock the system, which recognized this trigger phrase with 98% accuracy.
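A rough sketch of how a password gate of this kind might behave, assuming the decoder already produces text phrases: nothing is output until the unlock phrase is recognized. The `PasswordGate` class and its methods are hypothetical names chosen for this example, not the researchers' implementation, and the real system works on neural signals rather than on clean strings.

```python
def normalize(phrase):
    """Lowercase and collapse whitespace so minor decoding variation still matches."""
    return " ".join(phrase.lower().split())


class PasswordGate:
    """Hypothetical gate that withholds decoded text until a password is seen."""

    def __init__(self, password):
        self._password = normalize(password)
        self.unlocked = False

    def process(self, decoded_phrase):
        """Return the phrase to output, or None when it is withheld."""
        if not self.unlocked:
            if normalize(decoded_phrase) == self._password:
                self.unlocked = True
            # Both private thoughts and the password itself stay hidden.
            return None
        return decoded_phrase


gate = PasswordGate("chitty chitty bang bang")
decoded_stream = ["one two three", "Chitty chitty  bang bang", "hello there"]
outputs = [gate.process(phrase) for phrase in decoded_stream]
print(outputs)
```

In this sketch the counting fragment decoded before the password is dropped, the password unlocks the gate without being shown, and only the phrase that follows is passed through.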

The team also discovered a neural signature they call the “motor-intent dimension”—subtle brain patterns that distinguish between intended speech and private thoughts. This biological marker could help future devices ignore accidental mental chatter, addressing concerns highlighted by the UNESCO neurotechnology ethics guidelines.

Real-World Testing Shows Promise

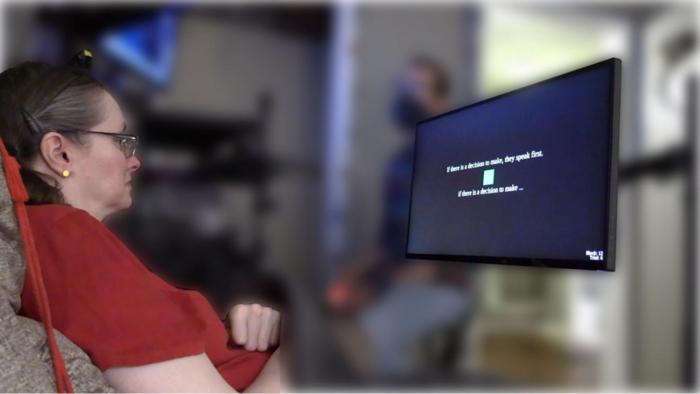

Three participants with severe speech difficulties tested the system in real-time scenarios. Unlike lab demonstrations using limited word lists, they attempted full sentences from natural conversations. Error rates ranged from 26% to 54%, with the lowest figure corresponding to the 74% peak accuracy. That is not perfect, but it is better than many existing assistive communication devices that track eye movements.
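For readers wondering how an error rate like this is typically computed, the sketch below shows word error rate, the standard metric for speech decoders. The article does not say which metric was used, so treating the reported figures as word error rates is an assumption, and the sentences are invented examples.

```python
def word_error_rate(reference, hypothesis):
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.split()
    hyp = hypothesis.split()
    if not ref:
        raise ValueError("reference sentence must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,                      # reference word was dropped
                curr[j - 1] + 1,                  # extra word was inserted
                prev[j - 1] + substitution_cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# Invented example: one wrong word out of four.
intended = "i am thirsty now"
decoded = "i am thirty now"
error_rate = word_error_rate(intended, decoded)
print(f"error rate: {error_rate:.0%}, accuracy: {1 - error_rate:.0%}")
```

On this definition, accuracy is simply one minus the error rate, which is how a 26% error rate lines up with 74% accuracy.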

Participants reported preferring the mental approach over attempting physical speech, which requires breath control and muscle coordination that can be exhausting for people with motor disabilities.

The Path Forward

Current limitations remain substantial. The system cannot reliably decode free-flowing thoughts or complex internal monologues. Most decoded private thoughts emerged as fragments rather than coherent sentences.

However, researchers remain optimistic about future developments. “The future of BCIs is bright,” notes senior author Frank Willett. “This work gives real hope that speech BCIs can one day restore communication that is as fluent, natural, and comfortable as conversational speech.”

The technology could particularly benefit people who retain mental clarity but have lost the ability to speak due to stroke, ALS, or spinal injuries. As brain-recording technology improves and artificial intelligence becomes more sophisticated, the boundary between thought and communication may continue to blur.
