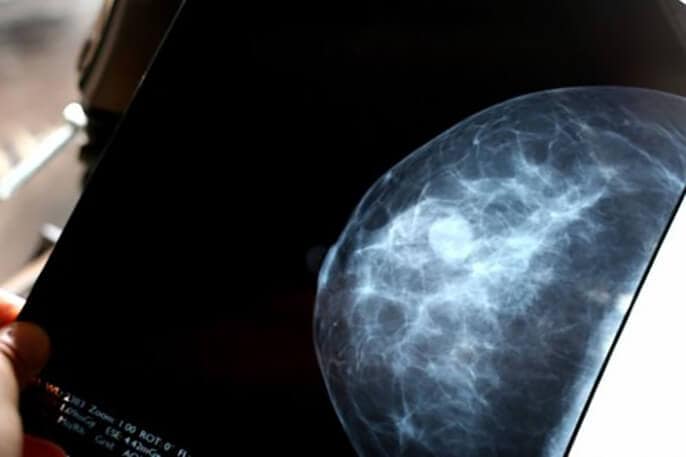

Over 100,000 Swedish women rolled through mammography machines between 2021 and 2022, each one randomly assigned to have her scans read either by two radiologists, as is standard practice, or by radiologists working with artificial intelligence support. Two years later, the women whose images got AI support had 12 per cent fewer cancers show up between their regular screening appointments, with the steepest drops among the aggressive types – the first hard evidence that machine learning might spot tumours human eyes miss.

The MASAI trial results, published in The Lancet, settle a question that’s been nagging breast cancer researchers since AI systems started matching radiologists’ accuracy in lab tests. Yes, these algorithms can find more cancers during screening (29 per cent more in this study). But do they catch the dangerous ones early enough to matter, or just flag harmless abnormalities that would never have caused trouble? The answer appears to be the former.

“Our study is the first randomised controlled trial investigating the use of AI in breast cancer screening and the largest to date looking at AI use in cancer screening in general,” says Kristina Lång from Lund University, who led the trial. The AI-supported screening improved early detection of clinically relevant breast cancers, which led to fewer aggressive or advanced cancers diagnosed between screenings.

Interval cancers – those diagnosed after a negative mammogram but before the next scheduled screening – tend to be nastier than cancers caught during routine checks. They’re often larger, more likely to have spread to lymph nodes, and more frequently belong to aggressive molecular subtypes. Roughly 20 to 30 per cent of these could have been visible on the previous mammogram if someone had known where to look.

The Swedish trial used an AI system called Transpara, trained on over 200,000 mammograms from multiple countries and imaging equipment types. The software assigned each scan a risk score from 1 to 10. Low-risk images (scores 1-9) went to a single radiologist, while high-risk ones (score 10) were read by two, with the AI highlighting suspicious regions for their consideration. Standard practice in European screening programmes, by contrast, has two radiologists read every mammogram independently.
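The triage rule described above can be sketched in a few lines of Python. This only illustrates the workflow as the article describes it; it is not Transpara's actual interface, and the function name `readers_required` and the constant `HIGH_RISK_SCORE` are hypothetical.

```python
# Hypothetical sketch of the triage rule as described in the text.
# The names here are illustrative and are not part of any real Transpara API.

HIGH_RISK_SCORE = 10  # the only score routed to double reading


def readers_required(risk_score: int) -> int:
    """Return how many radiologists read a scan with the given AI risk score."""
    if not 1 <= risk_score <= 10:
        raise ValueError("risk score must be between 1 and 10")
    return 2 if risk_score == HIGH_RISK_SCORE else 1


for score in (1, 9, 10):
    print(score, readers_required(score))
```

Because only the top score triggers a second reader, most scans in such a scheme would need a single reading, which is where the workload saving comes from.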

What happened over the following two years tells you something about which cancers matter. The AI group had 1.55 interval cancers per 1,000 women screened, compared with 1.76 in the standard double-reading group (that’s the 12 per cent reduction, though the trial was designed only to show AI wasn’t worse, not that it was definitively better). More tellingly, the AI group had 16 per cent fewer invasive interval cancers, 21 per cent fewer large tumours over 20 millimetres, and 27 per cent fewer aggressive non-luminal A subtypes – the triple-negative, HER2-positive, and luminal B cancers that benefit most from early detection.
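The 12 per cent figure is a relative reduction, and it can be checked from the two rates quoted above. A minimal sketch, with `relative_reduction` as an illustrative helper name:

```python
def relative_reduction(control_rate: float, intervention_rate: float) -> float:
    """Fractional reduction of the intervention rate relative to the control rate."""
    return (control_rate - intervention_rate) / control_rate


# Interval cancers per 1,000 women screened, as reported in the article.
standard_rate = 1.76
ai_rate = 1.55

reduction_pct = relative_reduction(standard_rate, ai_rate) * 100
print(f"{reduction_pct:.1f}% fewer interval cancers")
```

The result is about 11.9 per cent, which rounds to the 12 per cent quoted. In absolute terms the difference is 0.21 interval cancers per 1,000 women screened.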

The sensitivity figures – the proportion of all cancers that screening actually catches – climbed from 73.8 per cent with standard double reading to 80.5 per cent with AI support. That improvement held across different age groups and breast densities, and for invasive cancers specifically. Specificity, meanwhile, stayed rock-solid at 98.5 per cent for both groups, meaning false alarms didn’t increase.
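For readers unfamiliar with the two measures, here is a small sketch of how they are computed. It assumes "all cancers" means screen-detected plus interval cancers, the usual convention in screening studies. The counts are made up for illustration and are not the trial's data.

```python
def sensitivity(screen_detected: int, interval: int) -> float:
    """Share of all cancers (screen-detected plus interval) found at screening."""
    return screen_detected / (screen_detected + interval)


def specificity(true_negatives: int, false_positives: int) -> float:
    """Share of cancer-free women who were correctly not recalled."""
    return true_negatives / (true_negatives + false_positives)


# Made-up counts for illustration only; these are NOT the trial's data.
print(f"sensitivity: {sensitivity(80, 20):.1%}")
print(f"specificity: {specificity(985, 15):.1%}")
```

Sensitivity rises when more cancers are caught at screening or fewer surface as interval cancers. Specificity falls only if more healthy women are recalled.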

Here’s the bit that might actually get AI into clinics despite healthcare systems’ legendary resistance to change. The AI-supported workflow cut the number of mammogram readings by about 44 per cent – from 109,692 to 61,248 across the trial – because low-risk scans only needed one radiologist instead of two. Consensus meetings, where radiologists hash out ambiguous cases together, happened at roughly the same rate (3.8 per cent versus 3.7 per cent).
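A quick check of the reading counts quoted above, using only the two totals given in the article (the variable names are illustrative):

```python
readings_standard = 109_692  # screen readings with standard double reading
readings_ai = 61_248         # screen readings with the AI-supported workflow

saved = readings_standard - readings_ai
saved_pct = saved / readings_standard * 100
print(f"{saved:,} fewer readings ({saved_pct:.1f}% reduction)")
```

That comes to 48,444 fewer readings, a saving of a little over 44 per cent.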

“Our study does not support replacing healthcare professionals with AI,” emphasises Jessie Gommers, the trial’s first author from Radboud University Medical Centre. The AI-supported screening still requires at least one human radiologist to perform the screen reading, but with support from AI. The results potentially justify using AI to ease substantial pressure on radiologists’ workloads, enabling these experts to focus on other clinical tasks.

Sweden faces the same radiologist shortage as most wealthy countries – too few specialists, too many scans, waiting times creeping upward. If AI can maintain or improve cancer detection whilst halving the reading burden, that’s the sort of efficiency gain that gets hospital administrators interested. Whether it’s actually cost-effective depends on how much the software costs versus the value of catching more cancers early, and that analysis is still underway.

The trial had limitations worth noting. One country, four screening sites but one reading centre, one type of mammography machine, one AI system, experienced radiologists, and baseline recall rates already impressively low by international standards (around 2 per cent). Outcomes might differ elsewhere, particularly with less experienced readers or different screening setups. The researchers didn’t track race or ethnicity data, as Sweden doesn’t routinely collect this in screening programmes. And crucially, this covers just one screening round – nobody yet knows if the benefits persist or fade as radiologists and AI systems settle into long-term working relationships.

There’s also a nagging worry about overdiagnosis. The AI group detected more ductal carcinoma in situ (DCIS, or pre-invasive breast changes) without a corresponding drop in interval DCIS diagnoses. Some of those extra detections were high-grade lesions likely to become invasive cancer, but others were low-grade findings that might never have caused problems. The same pattern appeared, more subtly, with low-grade luminal A invasive cancers: detection rose 21 per cent with no matching fall in interval cancers later. Subsequent screening rounds should clarify whether AI is genuinely catching dangerous cancers early or just shifting diagnoses forward in time without changing outcomes.

Lång is circumspect about rolling out the technology without more data. Introducing AI in healthcare must be done cautiously, she says, using tested tools and with continuous monitoring in place to ensure we have good data on how AI influences different regional and national screening programmes and how that might vary over time. Several screening programmes in Sweden and Denmark have already implemented AI systems, driven largely by workforce shortages. Other randomised trials are underway in Norway, Australia, and the UK.

The comparison with digital breast tomosynthesis (pseudo-3D mammography) is instructive. A Swedish trial at the same site as MASAI found similar sensitivity and interval cancer rates with tomosynthesis compared to AI-supported standard mammography, but at higher recall rates and with more resource-intensive imaging. AI-supported standard screening might offer similar benefits without the infrastructure investment – though AI-supported tomosynthesis could potentially do even better, if someone can make the economics work.

For now, the evidence suggests AI can efficiently improve breast cancer screening performance compared with standard practice. Further studies on future screening rounds and cost-effectiveness will help clarify the long-term balance of benefits and harms. If those continue to look favourable, there could be a strong case for implementing AI in population-based screening, especially as healthcare systems face persistent staff shortages.

Study link: https://www.thelancet.com/journals/lancet/article/PIIS0140-6736(25)02464-X/fulltext
