It looks like science fiction, but it is not. At UCLA, engineers have built a wearable brain-computer interface that uses artificial intelligence as a copilot to help people move robotic arms or computer cursors with their thoughts. In early tests, the system allowed a paralyzed participant to perform a task he could not complete unaided.

The study, published in Nature Machine Intelligence, marks a major step toward noninvasive devices that restore independence for those living with paralysis or neurological conditions.

A Copilot for the Brain

The system works by decoding electroencephalography (EEG) signals, the faint electrical patterns that ripple across the scalp when a person imagines moving. These signals are notoriously noisy. To boost accuracy, the UCLA team paired their decoder with an AI vision system that observes tasks in real time and infers what the user intends to do.

“By using artificial intelligence to complement brain-computer interface systems, we’re aiming for much less risky and invasive avenues,” said Jonathan Kao, associate professor of electrical and computer engineering at UCLA Samueli. “Ultimately, we want to develop AI-BCI systems that offer shared autonomy, allowing people with movement disorders, such as paralysis or ALS, to regain some independence for everyday tasks.”

Tasks Once Impossible

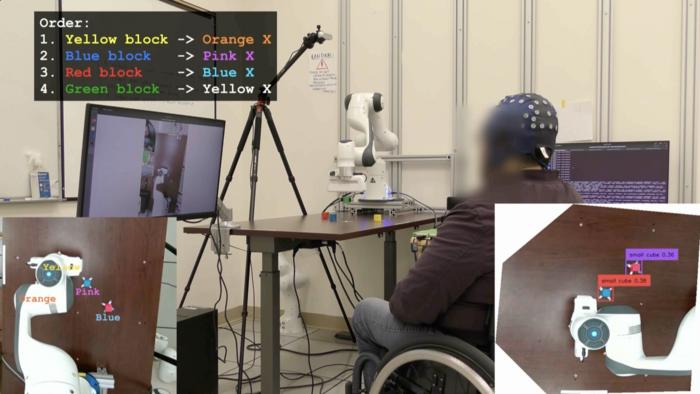

Four participants, three without motor impairments and one paralyzed from the waist down, tested the interface. Wearing EEG caps, they were asked to control a cursor on a computer screen and to manipulate a robotic arm in pick-and-place tasks. The AI copilot helped them navigate the cursor to targets and grasp blocks on a table.

Without AI assistance, the paralyzed participant could not complete the robotic arm task. With AI, he successfully moved four blocks into place in about six and a half minutes. It was a moment of quiet triumph in a lab setting, and a glimpse of what daily independence might feel like again.

“Next steps for AI-BCI systems could include the development of more advanced co-pilots that move robotic arms with more speed and precision, and offer a deft touch that adapts to the object the user wants to grasp,” said co-lead author Johannes Lee, a UCLA doctoral candidate.

Shared Autonomy

The researchers call this approach “shared autonomy.” Instead of expecting brain signals alone to direct every motion, the AI fills in the gaps, predicting intent and nudging the robotic arm or cursor toward the goal. Think of a driving instructor who lightly turns the wheel when you drift too far: guidance without taking over.
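To make the idea concrete, here is a minimal sketch of one simple way shared autonomy can be expressed: a weighted blend of the user's decoded command and an assistive command aimed at the inferred target. This is an illustration only, not the UCLA team's method; the function name `blend_velocity`, the blending weight `alpha`, and the fixed assist `speed` are all assumptions introduced here.

```python
import math

def blend_velocity(decoded_v, cursor, target, alpha=0.5, speed=1.0):
    """Blend a decoded 2D velocity with an assistive velocity toward a target.

    All names and parameters are hypothetical, chosen for illustration.
    alpha = 0.0 gives the user full control; alpha = 1.0 gives the copilot
    full control.
    """
    # Direction from the current cursor position to the inferred target.
    dx = target[0] - cursor[0]
    dy = target[1] - cursor[1]
    dist = math.hypot(dx, dy)

    # Assistive velocity: a fixed-speed push toward the target
    # (zero if the cursor is already on the target).
    if dist == 0:
        assist = (0.0, 0.0)
    else:
        assist = (speed * dx / dist, speed * dy / dist)

    # Linear blend of the user's command and the copilot's suggestion.
    return (
        (1 - alpha) * decoded_v[0] + alpha * assist[0],
        (1 - alpha) * decoded_v[1] + alpha * assist[1],
    )

# The user's noisy decoded signal drifts right while the target is straight up;
# an even blend pulls the motion diagonally toward the target.
print(blend_velocity((1.0, 0.0), cursor=(0.0, 0.0), target=(0.0, 2.0)))
```

In this sketch the copilot never overrides the user outright unless `alpha` is set to 1.0, which mirrors the driving-instructor analogy: the assistance corrects the course while the user's command still contributes to every movement.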

This matters because surgically implanted BCIs, though powerful, are costly and carry surgical risks such as infection. Noninvasive systems are safer but have lagged in performance. UCLA’s hybrid system, blending EEG decoding with AI copilots, may close that gap.

The Human Stakes

For people with spinal cord injuries or ALS, even small gains in control can translate into monumental shifts in daily life. The ability to grasp a cup, select a button on a screen, or move an object across a table is not just a laboratory demonstration. It is autonomy reclaimed, dignity restored.

“AI copilots significantly increased performance in both computer and robotic arm tasks,” the authors wrote. “As AI copilots improve, BCIs designed with shared autonomy may achieve higher performance.”

What Comes Next

The team envisions copilots that adapt to more complex environments, grasp objects with finesse, and learn from larger training datasets. Hurdles remain: variability in EEG signals, the burden of training, and the challenge of making systems robust in real-world settings. But the direction is clear. Noninvasive BCIs, once dismissed as too crude, may be on the cusp of becoming practical tools.

Takeaway

This is not just a story about electrodes and algorithms. It is a story about restoring the link between thought and action. A wearable device that listens to intent, and an AI that lends a hand, together point to a future where paralysis does not mean the end of movement. It means movement reimagined.

Journal: Nature Machine Intelligence. DOI: 10.1038/s42256-025-01090-y
