You say two words into a microphone: “autumn forest.” A few seconds later, the colors appear, on your actual face, projected there in real time, shifting across your cheeks and eyelids and lips while you check yourself in the mirror. No smearing, no testing, no scroll through a grid of swatches. Just the warmth of October light translated, somehow, into blush and shadow.

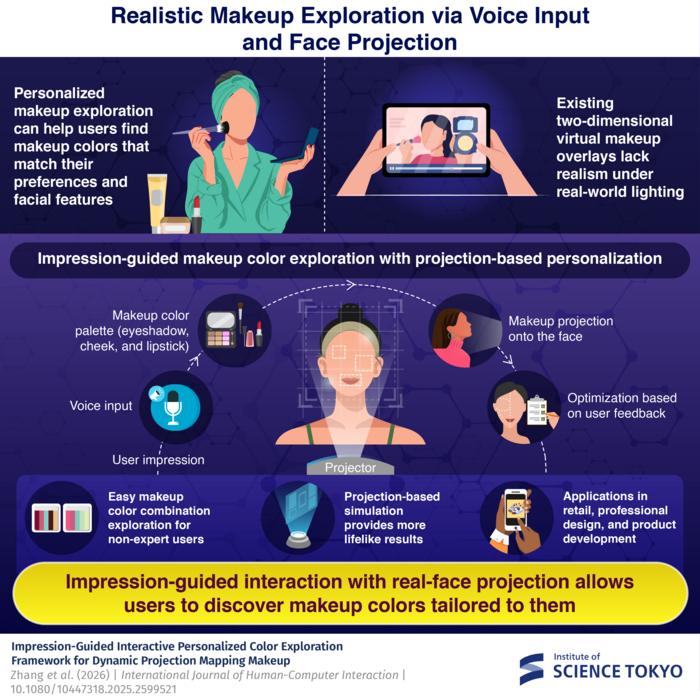

The system doing this was developed by researchers at the Institute of Science Tokyo, and it represents something of a rethink of how virtual makeup technology might actually work. Most apps in this space, the kind that let you swipe through shades before buying, overlay colors onto a two-dimensional image of your face on a phone screen. Convenient, yes, but the effect is a bit like painting onto a photograph: what you see is not quite how it would look on real skin, under real light.

The Tokyo team’s approach is different in a fairly fundamental way. Rather than overlaying makeup on an on-screen image, they project it directly onto the user’s face using dynamic projection mapping, a technique that tracks facial features in real time at 1,000 frames per second and adjusts the projected image to match movements, tilts, and small rotations of the head. The result is that the colors land on actual skin, with actual skin tone and texture doing what skin tone and texture always do to color: transforming it, absorbing it, reflecting it back differently than expected.

That last point turns out to matter a lot. Anyone who has ever bought a lipstick that looked one shade in the store and another entirely on their face will understand the problem. Different skin tones interact with pigments in ways that no flat-screen overlay can reliably simulate. A projected crimson on pale skin reads differently than the same projection on a deeper complexion, not because the color changes, but because the surface absorbs and reflects differently. The Science Tokyo system handles this by building the interaction into the exploration process itself: you see what the color actually looks like on your face, and the system learns from what you choose.

The starting point is language. Users are encouraged to think in impressions rather than specifications, describing scenes or moods in natural phrases: “sakura in spring,” “night rose,” “gentle sunshine.” The system feeds this text through a generative image model, which produces a photorealistic scene that represents the described atmosphere, then extracts five dominant colors from that scene. These colors are then filtered through a dataset of 313 real cosmetic product colors collected from major international brands, ensuring that what gets projected is something you could actually buy, roughly speaking, rather than a blue-green that no lipstick has ever approached.
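The last two steps of that pipeline, extracting dominant colors and then restricting them to real product shades, can be sketched roughly as follows. This is a minimal illustration, not the researchers’ method: it assumes plain k-means clustering in RGB and nearest-neighbor matching, neither of which the article specifies, and the function names, product names, and color values are all hypothetical.

```python
import math
import random

def dominant_colors(pixels, k=5, iters=10, seed=0):
    """Cluster RGB tuples with plain k-means and return the k cluster centers."""
    rng = random.Random(seed)
    centers = rng.sample(pixels, k)  # start from k randomly chosen pixels
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in pixels:
            nearest = min(range(k), key=lambda i: math.dist(p, centers[i]))
            clusters[nearest].append(p)
        # Move each center to the mean of its cluster; keep it if the cluster is empty.
        centers = [
            tuple(sum(channel) / len(c) for channel in zip(*c)) if c else centers[i]
            for i, c in enumerate(clusters)
        ]
    return centers

def snap_to_products(colors, products):
    """Map each color to the name of the closest color in a product palette."""
    return [
        min(products, key=lambda name: math.dist(color, products[name]))
        for color in colors
    ]

if __name__ == "__main__":
    # Hypothetical stand-in for the 313-color product dataset.
    palette = {
        "hypothetical_red": (210, 40, 50),
        "hypothetical_pink": (250, 180, 190),
        "hypothetical_brown": (120, 70, 40),
    }
    # Synthetic "scene" pixels in place of a generated image.
    scene = [(200, 30, 40)] * 30 + [(110, 80, 50)] * 30 + [(245, 170, 180)] * 30
    print(snap_to_products(dominant_colors(scene, k=3), palette))
```

Euclidean distance in RGB is used here only for brevity; a perceptual color space would likely be a better fit for matching shades as people see them.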

“Users can easily explore preferred makeup colors from a large number of combinations through interactive optimization using impression words and projection-based makeup,” says Yoshihiro Watanabe, associate professor at the Institute of Science Tokyo. “This can help non-expert users efficiently find satisfying results in the vast space of color combinations.”

The “vast space” is not an exaggeration. For three makeup components (eyeshadow, blush, and lipstick) the possible combinations within a standard color space form what the researchers describe as a nine-dimensional search problem. For someone who doesn’t think in hue, saturation, and lightness parameters, that is about as navigable as it sounds. The impression-based entry point sidesteps this by collapsing an enormous search space into a handful of projected options, which the user can then refine using keyboard inputs while looking in the mirror.
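The arithmetic behind “nine-dimensional” is simple to spell out. The sketch below assumes each of the three components is described by three color channels (hue, saturation, and lightness, as mentioned above); the grid resolution is an arbitrary choice made only to show how quickly the space grows.

```python
COMPONENTS = ("eyeshadow", "blush", "lipstick")
CHANNELS = ("hue", "saturation", "lightness")  # assumed three-channel color space

def search_dimensions():
    """One dimension per (component, channel) pair."""
    return len(COMPONENTS) * len(CHANNELS)

def grid_size(levels_per_channel):
    """Number of distinct combinations if every channel is cut into equal steps."""
    return levels_per_channel ** search_dimensions()

if __name__ == "__main__":
    print(search_dimensions())  # 9
    print(grid_size(10))        # 1000000000
```

Even at a coarse ten steps per channel, that is a billion combinations, far more than anyone could try in front of a mirror.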

The refinement step uses Bayesian optimization, a technique that has also been applied to problems like lighting design and melody composition, to learn preferences iteratively. After each round of options, the algorithm narrows in on what seems to resonate, balancing exploration of new possibilities against exploitation of known preferences. In user studies with 15 participants, the impression-guided system consistently outperformed manual color-slider adjustment on satisfaction, ease of use, and what the researchers measured as “stimulation” and “originality.” Perhaps more interestingly, several participants reported discovering color combinations they would never have chosen on their own, not because the system found something better by the researchers’ reckoning, but because the freeform impressionistic input led somewhere the person hadn’t thought to go.
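For readers curious about what the exploration and exploitation trade-off looks like in practice, here is a toy Bayesian optimization loop. It is a sketch under several assumptions and not the researchers’ implementation: the user’s preference is simulated by a numeric `score` function (the real system works from choices made at a keyboard), the surrogate is a small Gaussian process with a fixed kernel, and candidates are picked by an upper-confidence-bound rule. All names and parameters are hypothetical.

```python
import math
import random

def rbf(a, b, length=0.5):
    """Squared-exponential kernel between two points."""
    return math.exp(-math.dist(a, b) ** 2 / (2 * length ** 2))

def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x

def posterior(X, y, x_new, noise=1e-3):
    """Gaussian-process posterior mean and variance at x_new (zero prior mean)."""
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(X)]
         for i, a in enumerate(X)]
    k = [rbf(a, x_new) for a in X]
    alpha = solve(K, y)
    v = solve(K, k)
    mean = sum(ki * ai for ki, ai in zip(k, alpha))
    var = max(rbf(x_new, x_new) - sum(ki * vi for ki, vi in zip(k, v)), 0.0)
    return mean, var

def optimize(score, dims=9, rounds=10, candidates=50, beta=2.0, seed=0):
    """Repeatedly try the candidate with the highest upper confidence bound."""
    rng = random.Random(seed)

    def sample():
        return [rng.random() for _ in range(dims)]

    X = [sample() for _ in range(3)]  # a few random starting points
    y = [score(x) for x in X]
    for _ in range(rounds):
        def ucb(x):
            mean, var = posterior(X, y, x)
            # High mean = exploit known preferences; high variance = explore.
            return mean + beta * math.sqrt(var)
        best = max((sample() for _ in range(candidates)), key=ucb)
        X.append(best)
        y.append(score(best))
    i = max(range(len(y)), key=y.__getitem__)
    return X[i], y[i]

if __name__ == "__main__":
    target = [0.5] * 9  # hypothetical "ideal" palette in normalized coordinates

    def simulated_preference(x):
        return math.exp(-math.dist(x, target) ** 2)

    print(optimize(simulated_preference))
```

The `beta` parameter sets how strongly the loop favors uncertain regions over ones it already believes are good, which is one common way to express the balance described above.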

There are real limitations to the current setup, and the researchers are upfront about them. The high-speed projector and camera required for real-time facial tracking are expensive; this is not yet consumer hardware. Some participants felt that once they’d narrowed in on an overall combination they liked, they wanted to adjust a single component independently, which the system doesn’t easily allow. And certain prompts, particularly more abstract impressionistic ones like “gentle sunshine,” proved genuinely difficult to translate into consistent color choices, because people associate the same atmospheric description with quite different palettes.

“With such a system, users can simply describe their desired impression of makeup colors in natural language and observe the effects in the mirror,” Watanabe says. The gap between that description and what’s actually possible, practically speaking, is still considerable. Cosmetics professionals interviewed for the research noted the system’s potential for early-stage product development, for sketching out color ranges from abstract design briefs, but also flagged the accuracy gap between its current output and what professional workflows require.

What makes this more than a clever interface experiment is what it says about personalization in an era when recommendation systems and virtual try-on tools are proliferating rapidly. The problem with most of these tools is that they recommend from existing categories, suggesting options based on trends or facial feature analysis, rather than following an open-ended creative impulse wherever it leads. An impression-guided system makes room for something different: the serendipitous find, the color combination that only emerges because the starting point was “October light in a forest” rather than “warm auburn tones.” The researchers suggest the approach could extend beyond color, eventually incorporating makeup textures, shapes, even other sensory inputs like music or movement, as ways of translating atmosphere into appearance.

Whether the technology ever reaches a price point that makes it viable outside research labs or high-end retail settings remains to be seen. But the question it poses is the more durable one: how much of what we want from beauty technology is accuracy, and how much is the ability to follow a feeling somewhere we couldn’t have anticipated?

DOI / Source: https://doi.org/10.1080/10447318.2025.2599521

Frequently Asked Questions

Projected light interacts with whatever surface it hits, and skin is not a neutral canvas. Different skin tones and undertones absorb and reflect light differently, which means the same projected color can appear warmer, cooler, lighter, or deeper on different people. This is precisely the problem the Tokyo system is designed to handle: by projecting onto your actual face and having you evaluate what you see in a mirror rather than on a screen, the feedback loop accounts for your particular skin in a way that digital overlays cannot.

Standard virtual try-on tools overlay a color effect onto a two-dimensional image of your face on a phone screen, and while they have improved considerably, they remain screen-based simulations. The Tokyo system projects light directly onto the user’s physical face, which means the color is responding to real skin texture and tone in real time. The impression-based input is also new: rather than selecting from a catalogue, you describe a mood or scene and the system generates options from that.

Helping people with little makeup experience was partly the point. User studies found the system was particularly effective for these users, who typically find both physical try-on and digital swatch-browsing overwhelming. The impression input sidesteps the need to already know color theory or have a preference vocabulary, letting users navigate via atmosphere and feeling rather than technical parameters.

The main barriers to wider use are cost and infrastructure. The system requires a high-speed projector capable of 1,000 frames per second, a high-speed camera, and a controlled lighting environment, none of which are cheap or compact. The researchers acknowledge the hardware is currently research-grade and that developing consumer-ready projection devices at accessible price points is one of the main challenges for wider adoption.

Key Takeaways

- The Tokyo system projects virtual makeup directly onto users’ faces in real time, adapting to skin tone and texture.

- Users input natural language impressions like ‘autumn forest’ to discover colors, which the system generates and filters based on real products.

- This impression-guided system simplifies the complex options in makeup, making it easier for non-experts to find suitable colors and combinations.

- Though promising, the technology requires expensive equipment and isn’t yet viable for general consumer use.

- The approach could redefine how makeup technology addresses personal preferences beyond just accuracy.