IT TAKES just a few clicks in Photoshop to do it. You take a life-like humanoid, find the area around the sockets, and—snip—the eyes are gone. In their place is a smooth, plasticky void. It’s a minor aesthetic tweak, but it changes everything.

We’ve long suspected that “eyes are the windows to the soul,” a proverb often (and perhaps wrongly) pinned on Shakespeare. But as we start to live alongside machines that look increasingly like us, this bit of folk wisdom is getting a rigorous scientific workout. Jari Hietanen and his team at Tampere University in Finland wanted to know: if you give a robot eyes, do we suddenly start believing there’s “someone” home?

“This is significant,” Hietanen reckons, “because the perception of a mind influences empathy, willingness to cooperate and even how people treat technology ethically.”

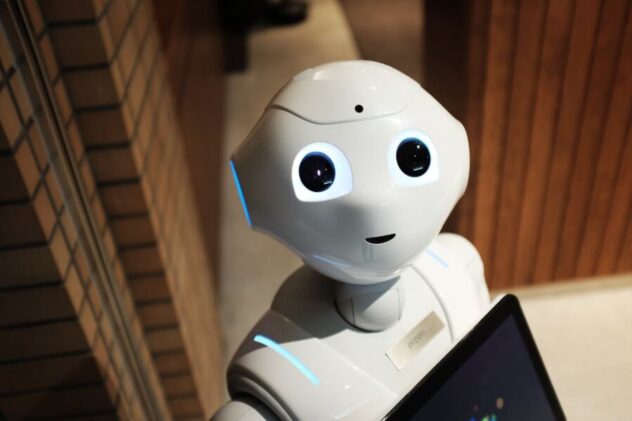

The team used an AI image generator to whip up two dozen high-resolution robots. They were varied—some looked like children, others like adults. Some had eyes embedded in their “skulls,” while others displayed them on digital face-screens. Then came the Photoshop surgery to create “eyeless” versions of the exact same machines.

When 200 volunteers were asked to rate these bots, the results were remarkably consistent. Robots with eyes were judged to have more “agency”—the ability to think, plan, and evaluate consequences—and more “experience,” meaning the capacity to feel hunger, pain, or joy.

It wasn’t just a conscious bias, either. In a second experiment using the Implicit Association Test (IAT), the researchers found that this “mind perception” kicks in at a preconscious level. Our brains seem to be making the “eyes equals mind” calculation before we’ve even finished registering the image.

Why so? From an evolutionary perspective, it makes sense. We’re hardwired to track gaze because it signals where a predator is looking or what a tribe member is thinking. But in the world of robotics, this instinct is a double-edged sword. Some AI ethicists have already “explicitly warned against adding eyes” to robots. They fear it’s a form of deception—a way to trick us into feeling for a machine that is, underneath the shell, just a bundle of wires and code.

It is a design choice with real-world stakes. If a care robot has eyes, an elderly patient might feel a deeper connection to it. If a delivery bot doesn’t, we might be more likely to kick it out of the way on a crowded pavement.

Hietanen’s study shows that eyes are far more than just a bit of polish. They are the toggle switch for our empathy. As we shell out for more of these machines, we’ll have to decide: do we want to look our technology in the eye, or would we rather stay in the dark?

Study link: https://www.sciencedirect.com/science/article/pii/S1053810025001564
