Key Takeaways

- Optical diffractive neural networks (ODNN) use structured diffraction to perform computations at near-light speed without consuming electricity, but they suffer from manufacturing errors.

- Vaccination training helps networks tolerate imperfections but doubles training time and doesn’t always predict real-world errors accurately.

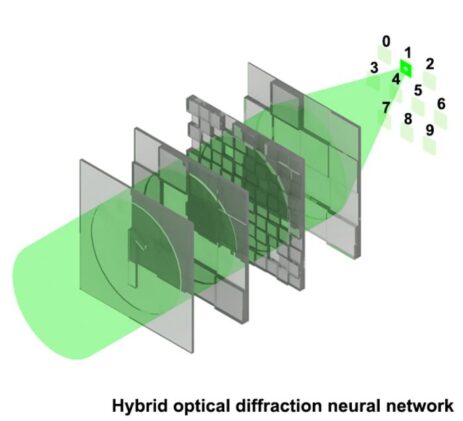

- The hybrid optical diffractive neural network (H-ODNN) designed by Ming Zhao’s team uses larger neurons to improve robustness without requiring additional training.

- Testing showed that H-ODNN outperformed vaccinated networks across multiple tasks while reducing processing time significantly, particularly for wavelength sorting.

- The hybrid structure may lower manufacturing costs by simplifying the etching process and improving production yield, making optical computing more feasible.

The laser pulse takes roughly a nanosecond to pass through the glass. That’s the part that works perfectly. What doesn’t work, what has reliably humbled optical computing researchers for the better part of a decade, is everything surrounding that nanosecond: the physical layers of etched material that shape the light, stacked with tolerances measured in hundreds of nanometers, assembled by hand in conditions that would make a semiconductor fab engineer wince. Slide one layer a fraction of a millimeter off-center, or stack it even slightly too close, and the network that classified digits flawlessly in simulation starts making errors at a rate that makes it useless. The physics of light, it turns out, is unforgiving.

Optical diffractive neural networks are, depending on your perspective, either the most elegant computing architecture ever devised or one of the most maddening engineering projects in modern photonics. They perform computations at near-light speed using no electricity for the computation itself, just structured diffraction, and they consume a tiny fraction of the power that conventional GPU clusters demand.

The basic idea is this: rather than running data through silicon transistors, an ODNN passes light through a series of carefully patterned layers, each one etched with thousands of microscopic features that bend and redirect wavefronts in precisely calculated ways, such that what emerges at the output encodes the answer to whatever the network was trained to solve. Recognition, image restoration, wavelength sorting: all of it performed, essentially, by physics. The catch is that those microscopic features, in networks designed to operate at visible-light wavelengths, must be fabricated at scales approaching 500 nanometers. Half a micrometer. That’s roughly a thousand times thinner than a human hair, and tolerances at that scale make the manufacturing challenge genuinely daunting.

The problem is scale. Networks designed for visible-light wavelengths require etched features roughly 500 nanometers across, and even microscopic shifts in how the layers are stacked, or tiny deviations in interlayer spacing, change how the light diffracts enough to degrade performance substantially. In simulation, layers are perfectly aligned; in a real lab, they never quite are. The smaller the features, the more sensitive the network is to these inevitable manufacturing imperfections.

Vaccination training deliberately introduces random misalignments into the training simulation, teaching the network to tolerate imperfection before it ever meets a real fabrication error. It helps, but it roughly doubles training time and works best only when the errors introduced during training match the errors encountered during manufacturing. For wavelength-sorting tasks especially, predicting that error distribution in advance is genuinely difficult, which is why vaccination sometimes underperforms in practice.

By using larger neurons in one of the diffractive layers. A neuron that spans more physical space is geometrically less sensitive to small positional shifts, because the same displacement represents a smaller fraction of its total area. The robustness is a consequence of the architecture itself rather than something the network has to learn, which is why no additional training is needed and why the training process is faster overall.

Possibly, and that may be its most significant practical implication. Larger neurons require fewer nanoscale features to be etched precisely, which relaxes the manufacturing tolerances and should improve production yield. In an industry where fabrication failures are a major cost driver, the economic case for a geometry that inherits robustness rather than requiring it to be trained in could be considerable.

The standard workaround has been something researchers call vaccination training: during the learning phase, you deliberately introduce random misalignments into the simulation, essentially teaching the network to expect imperfection. It helps, but it brings its own complications.

Vaccination training roughly doubles training time. And the errors introduced during training must match the errors encountered during manufacturing: get that prediction wrong, and the vaccination provides only partial protection. The wavelength-dependent nature of diffraction makes this especially tricky for networks sorting light by color. Engineers have been essentially guessing at the error distribution before the fab run happens.

A team led by Ming Zhao at Huazhong University of Science and Technology in China has proposed a different approach, one that sidesteps the vaccination problem entirely by rethinking the network’s physical architecture. Their hybrid optical diffractive neural network, or H-ODNN, doesn’t try to make a fragile network more robust through training. It builds robustness into the structure from the start.

The insight draws on something familiar from conventional deep learning: neural networks don’t require every layer to have the same number of neurons. A standard multi-layer perceptron might have 1024 units in one hidden layer and 256 in the next. Zhao’s team applied the same logic to the optical domain. In their hybrid design, one diffractive layer retains the full complement of fine-grained neurons (100 by 100), preserving the network’s capacity for complex computation, while another layer uses far larger neurons, perhaps only 10 by 10 across the same physical aperture. The larger neurons are intrinsically less sensitive to small displacements, because each one spans more physical space: when you shift such a layer by a given distance, the change in its phase distribution per unit of displacement is smaller, so the output light field deviates less from the ideal. Robustness becomes a geometric consequence of neuron size, not something learned during training.

The team tested 20 different network configurations across three tasks, and the results were, honestly, somewhat surprising. The best hybrid configuration, which placed the large-neuron layer first in the optical path, consistently matched or outperformed the vaccinated network across all misalignment conditions. It did this without any robustness training, which reduced per-epoch computation time by roughly 25% for digit recognition, 73% for image denoising, and 57% for wavelength classification. It also performed best of all on the wavelength sorting task, exactly where vaccination training struggles most.

There’s a practical manufacturing benefit too, perhaps even more consequential than the training efficiency gains. Larger neurons mean fewer nanoscale features to etch. Fewer features means a less demanding fabrication process and, in production, a meaningfully higher yield of working devices. The economic arithmetic of photonic computing may shift considerably if networks can be manufactured with relaxed tolerances and still perform reliably.

Whether this translates cleanly to more complex, multi-layer networks remains to be worked out. The H-ODNN studies used two-layer architectures, and interactions between multiple hybrid layers will need their own investigation. The team’s simulations also haven’t yet been validated against a physically fabricated device operating in a real cleanroom. That step will matter.

Still, the direction seems worth taking seriously. The field has spent years trying to make fragile high-performance networks more resilient through elaborate training protocols. The Zhao team’s contribution is, in a way, a structural argument: that robustness shouldn’t be trained in after the fact but designed into the geometry from the start. For anyone who has watched an elegant optical network fall apart the moment it left the simulation, the hybrid structure offers something vaccination never quite could: a reason to be less afraid of the assembly bench.

DOI / Source: https://doi.org/10.2738/foe.2026.0012

ScienceBlog.com has no paywalls, no sponsored content, and no agenda beyond getting the science right. Every story here is written to inform, not to impress an advertiser or push a point of view.

Good science journalism takes time — reading the papers, checking the claims, finding researchers who can put findings in context. We do that work because we think it matters.

If you find this site useful, consider supporting it with a donation. Even a few dollars a month helps keep the coverage independent and free for everyone.