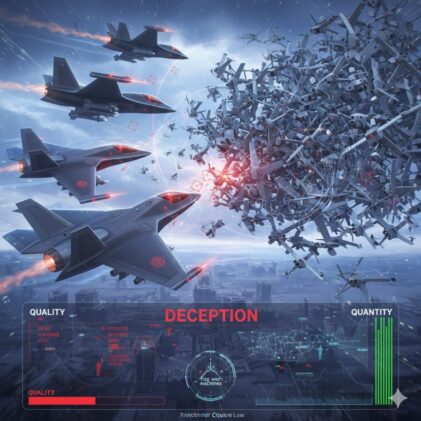

Picture two air forces squaring off. One fields 300 of the most lethal autonomous fighter jets ever built, each bristling with sensors and capable of killing nine enemy aircraft for every one it loses. The other sends up 950 cheaper, simpler drones. Individually, they’re outclassed. But according to a mathematical model that dates back to the First World War, the cheaper swarm wins. Every time.

That scenario isn’t science fiction. It is the central finding of a sweeping new analysis from the RAND Corporation, published last month, that asks what happens to warfare when artificial intelligence removes human brainpower as a bottleneck. The answer, the researchers argue, could upend decades of Western military strategy built around small numbers of extraordinarily expensive weapons.

The report’s seven authors spent months decomposing warfare into what they call “building block” competitions, four fundamental contests that shape how militaries fight: quantity versus quality, hiding versus finding, centralised versus decentralised command, and cyber offence versus cyber defence. Their conclusion is stark. Militaries that cling to existing approaches and simply bolt AI onto them will lose to those willing to rethink everything from the ground up. The US military, the authors warn, might need to change important aspects of how it has traditionally operated.

Start with quantity versus quality, which is arguably the most provocative of the four. For decades, the Pentagon has bet on quality. Fewer, better, more expensive. A single F-35 costs something like $80 million. The logic was that technological superiority would offset whatever numerical advantage an adversary might muster. AI, the RAND team argues, flips that calculus.

The key is what defence analysts now call “precise mass” and “affordable mass.” The first term, coined by political scientist Michael Horowitz, captures how cheap one-way attack drones can now deliver the kind of accuracy once reserved for exquisite cruise missiles. The second captures the cost dynamics: a robotic fighter with characteristics roughly similar to the experimental XQ-58 Valkyrie costs an estimated 4.7 times less than a crewed Chinese J-20 fifth-generation jet. You don’t need to match the enemy plane for plane. You need to flood the sky with things that are good enough.

To illustrate the point, the authors reach back to 1945 and an instructive mismatch. Germany’s Me 262, the world’s first operational jet fighter, had roughly a 9-to-1 lethality advantage over America’s propeller-driven P-51D Mustang. Extraordinary. Yet the Lanchester Square Law (a set of equations modelling attrition combat) shows that anything beyond a threefold numerical superiority would have been sufficient to overcome even that devastating edge, because in the model a force’s fighting strength grows with the square of its numbers but only in direct proportion to the quality of each unit. The Americans could overwhelm the jets simply by showing up in larger numbers. And that historical ceiling on quality, the RAND analysts suggest, probably represents about as good as any side can get against a sophisticated peer adversary.
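For readers who want to check the arithmetic, here is a minimal sketch of the Lanchester Square Law in Python. It is not taken from the RAND report: the function name and the choice to treat the 9-to-1 lethality figure as a ratio of per-unit effectiveness are assumptions made for illustration. Under the square law, the quantity (effectiveness × numbers²) decides the winner, so a ninefold quality edge is cancelled by a threefold numerical one.

```python
import math


def lanchester_square_outcome(n_quality, n_mass, quality_ratio):
    """Return (winner, survivors) for a fight to the finish.

    Hypothetical helper for illustration only.
    n_quality     -- starting size of the high-quality force
    n_mass        -- starting size of the cheaper, larger force
    quality_ratio -- per-unit effectiveness of the quality side relative
                     to the mass side (assumed here to be the "9" in 9-to-1)
    """
    # Square law: fighting strength = effectiveness * numbers squared.
    quality_strength = quality_ratio * n_quality ** 2
    mass_strength = n_mass ** 2

    if quality_strength > mass_strength:
        # The difference in strength is conserved; convert back to units.
        survivors = math.sqrt((quality_strength - mass_strength) / quality_ratio)
        return "quality", survivors
    if mass_strength > quality_strength:
        survivors = math.sqrt(mass_strength - quality_strength)
        return "mass", survivors
    return "draw", 0.0


# The opening scenario: 300 elite jets against 950 cheaper drones.
winner, survivors = lanchester_square_outcome(300, 950, 9)
print(winner, round(survivors))
```

In this toy model the swarm of 950 wins with roughly 300 drones left, while 900 drones would only fight the 300 jets to a draw. Real air combat is messier than a pair of differential equations, so treat the output as a way of seeing the scaling, not as a forecast.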

Now layer in AI. Autonomous aircraft don’t need pilot training. They don’t need ejection seats or life support or the ergonomic cockpit design that balloons weight and cost. They can be treated, the report notes, more like missiles, stored for years and tested occasionally. The maths shifts dramatically. Where once the side pursuing mass had to spend more overall to overcome a quality disadvantage, AI-enabled robotics could let you field the bigger force at lower total cost. That’s new. That changes everything, really.
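A back-of-the-envelope sketch shows why the cost side matters so much. It assumes the Lanchester Square Law, under which the larger force needs the square root of the quality ratio in extra numbers to reach parity, and it borrows two figures quoted in this article that come from different comparisons: the 9-to-1 lethality edge and the 4.7-fold unit cost gap. Combining them is purely illustrative and is not a calculation from the report; the function name is hypothetical.

```python
import math


def mass_cost_fraction(quality_ratio, unit_cost_ratio):
    """Cost of a parity-sized mass force as a fraction of the quality force's cost.

    Hypothetical helper for illustration only.
    quality_ratio   -- per-unit effectiveness edge of the quality side
    unit_cost_ratio -- how many times cheaper each mass unit is
    """
    # Square law: parity requires sqrt(quality_ratio) times the numbers.
    numbers_needed = math.sqrt(quality_ratio)
    # Each of those units costs 1 / unit_cost_ratio of a quality unit.
    return numbers_needed / unit_cost_ratio


# Illustrative inputs only: 9-to-1 quality edge, units 4.7 times cheaper.
print(round(mass_cost_fraction(9, 4.7), 2))
```

Any result below 1.0 means the bigger force is also the cheaper one. In this simplified picture, that happens whenever the unit cost gap exceeds the square root of the quality gap.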

But here’s where it gets properly interesting. Even if you can see the battlefield with AI-powered sensor fusion, processing satellite imagery and signals intelligence and radar returns faster than any human analyst, the RAND team argues the fog of war isn’t going away. It might actually get thicker.

The report introduces a concept it calls “fog of war machines,” AI systems designed not to find the enemy but to hide from it. Think of it as battle-management software for deception: orchestrating swarms of autonomous decoys (physical and electronic), timing troop movements to coincide with satellite blind spots, generating fake radio traffic, and selectively revealing real forces in ways calculated to increase confusion. The goal isn’t necessarily to make your tanks invisible. It’s to create what amounts to a needle-in-the-haystack problem so overwhelming that the finder’s AI chokes on ambiguity.

There’s a mathematical reason this works. Information fusion, the process of combining data from multiple sensors into a coherent picture, turns out to be what computer scientists call NP-hard: in the worst case, the work required grows so quickly with the number of contacts that no realistic amount of processing power guarantees an answer fast enough to matter. Even a superintelligent AI, the authors note, cannot analyse information it doesn’t have or overcome fundamental limits on reasoning under uncertainty. A clever hider exploiting those limits with cheap decoys and electronic trickery can keep a much better-resourced finder guessing.
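A toy calculation gives a feel for how quickly decoys multiply the finder’s problem. It is a deliberate simplification and not the report’s model: it assumes the finder knows how many real units are present but has no information that distinguishes them from decoys, then counts how many ways the contacts could be labelled. The function name is hypothetical, and the count illustrates combinatorial growth, not NP-hardness itself.

```python
import math


def candidate_labelings(real_units, decoys):
    """Number of ways to pick which contacts are real.

    Hypothetical helper for illustration only. Assumes every contact
    looks identical to the finder, so each subset of the right size
    is an equally plausible answer.
    """
    total_contacts = real_units + decoys
    return math.comb(total_contacts, real_units)


# Ten real units hidden among a growing number of decoys.
for decoys in (0, 10, 100):
    print(decoys, candidate_labelings(10, decoys))
```

With no decoys there is exactly one answer. Ten decoys already produce 184,756 candidate pictures, and a hundred push the count into the trillions, which is why cheap decoys can be such an efficient investment for the hider.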

The history of military deception has been haphazard, the report observes, with famous successes often depending on talented amateurs. D-Day’s phantom armies and Operation Mincemeat spring to mind. AI offers the chance to systematise all of that, to treat confusion as a constrained optimisation problem and run it continuously.

On the question of command structures, the analysis is perhaps less dramatic but no less important. You might expect that AI would push militaries towards either extreme centralisation (one brilliant AI general directing everything) or radical decentralisation (autonomous swarms making their own decisions). Neither happens. The report argues that mission command, the existing hybrid where senior leaders set objectives but local units decide how to achieve them, persists because the fundamental constraint isn’t cognitive capacity. It’s information. Forward units know things that headquarters doesn’t, and vice versa. AI makes both levels more capable but doesn’t eliminate that asymmetry.

The cyber chapter offers a rare note of optimism. Today, attackers have a structural advantage: they need to find one vulnerability in millions of lines of code, while defenders must protect everything. AI could begin to reverse that imbalance, not by building better firewalls but by writing better code in the first place. If AI tools can dramatically reduce the number of vulnerabilities before software is ever deployed, the exploitable attack surface shrinks. The defence benefits disproportionately. Though there’s a catch (there’s always a catch): the AI systems themselves become part of the attack surface. An adversary who compromises your AI security monitor has, in effect, been handed the keys to the entire network.

What ties all four competitions together is a message that will be uncomfortable in Washington. Exploiting AI’s military potential, the authors write, will be as much an organisational challenge as a technological one. The Pentagon can’t just buy better drones and call it a revolution. It needs to accept that some of those drones will be lost, resist the urge to gold-plate every robotic platform with exquisite specifications, invest seriously in deception rather than treating it as an afterthought, and fundamentally rethink what its force structure looks like. The advantage, the report concludes, might go to whichever side best executes the transition, not whichever side builds the cleverest algorithm.

And that is far from guaranteed to be the United States.

Study link: https://www.rand.org/pubs/research_reports/RRA4316-1.html
