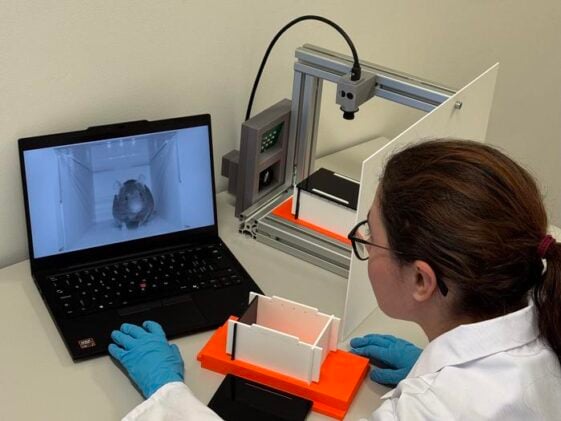

The box looks, at first glance, like something from a children’s classroom. White plastic, bright orange floor, compact enough to sit on a lab bench without fuss. But the mice placed inside it aren’t there to play. They’ve come, in a sense, to have their faces read. Two cameras peer through black acrylic that is transparent only to infrared light, recording every whisker twitch and orbital tightening in a darkness the animal finds genuinely calming. Within ten minutes, an algorithm has scored their grimace, compared it to thousands of expert-annotated examples, and returned a pain assessment that, in trials, matched trained human raters almost perfectly.

The system is called GrimACE, developed by Oliver Sturman and colleagues at ETH Zurich’s 3R Hub, and it addresses something researchers have struggled with for decades: you can’t ask a mouse whether it hurts.

Pain monitoring in laboratory rodents matters enormously, both ethically and scientifically. Undetected discomfort isn’t just a welfare problem. When an animal is in pain, its physiology changes in ways that can quietly distort experimental results, undermining reproducibility across entire research programmes. The gold standard has long been cage-side assessment: a trained experimenter peering at a mouse and consulting a pictorial chart, rating features like orbital tightening (a narrowing of the eyes), nose bulge, cheek bulge, ear position, and whisker direction on a scale of zero to two. The Mouse Grimace Scale, as it’s known, was developed around 2010 and has become something of a benchmark. The problem is that it’s slow, effortful, and deeply subjective. And there’s another wrinkle: prey animals may actively suppress obvious signs of pain when they sense a predator is watching. Which, from a mouse’s point of view, a looming human scientist very much is.

The GrimACE sidesteps this rather neatly. Enclosed in darkness, with tiny air holes providing adequate ventilation, mice in the monitoring arena behave naturally. Some sniff around. Some fall asleep. None of them know they’re being watched.

To test whether the system actually works, the ETH Zurich team put it through what amounts to a live clinical trial, assessing postoperative pain in mice following brain surgery. Craniotomies, it turns out, are one of the trickier scenarios for pain detection: the pain is moderate and resolves within roughly 48 hours, ordinary cage-side observation almost never picks it up, and the presence of head implants can confuse any camera-based system trying to read facial features. The researchers compared two standard analgesia regimes, a single dose of the anti-inflammatory meloxicam versus a three-dose combination of meloxicam and the opioid buprenorphine, recording GrimACE footage at multiple time points before and after surgery. One expert scorer, who had been rating grimace images for years, manually assessed thousands of frames. The automated system’s scores correlated with the expert’s at a Pearson’s r of 0.87. That’s a remarkably high figure for something as inherently fuzzy as pain assessment.
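For readers who want to see what that agreement figure measures, here is a minimal sketch of a Pearson correlation between an expert's scores and a model's scores. The numbers are invented for illustration and are not from the study; the function name `pearson_r` is likewise just a label chosen here.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length score lists."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length lists with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Covariance and variances, all without the 1/n factor (it cancels out).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / sqrt(var_x * var_y)


# Made-up per-frame grimace scores on the 0-to-2 scale (illustration only).
expert_scores = [0.2, 0.4, 1.0, 1.6, 0.8, 0.0]
model_scores = [0.3, 0.5, 0.9, 1.4, 1.0, 0.1]

r = pearson_r(expert_scores, model_scores)
print(f"r = {r:.2f}")
```

A value near 1 means the two sets of scores rise and fall together. It does not, on its own, show that they sit at the same absolute level.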

What happened next was, in its own way, more illuminating. Sturman’s team also asked three human raters, the expert and two trainees, to assess the same images. Their scores diverged considerably. Not because anyone was careless. “We secretly gave all three raters the same images to assess, to check whether their own scores were consistent,” says Sturman. Each person turned out to be highly self-consistent; the problem was that they were consistently different from each other. One rater reliably scored high. Another reliably scored low. A third landed in the middle. “This is where we see the strength of the computer as it delivers standardised results,” Sturman says.
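The difference between being self-consistent and agreeing with other people is easy to show with numbers. The sketch below uses invented scores for three hypothetical raters, each assumed to have scored the same five images twice. It is an illustration of the pattern Sturman describes, not a reconstruction of the study's analysis.

```python
from statistics import mean

# Hypothetical data: (first pass, second pass) over the same five images.
ratings = {
    "rater_a": ([1.4, 1.6, 1.0, 1.8, 1.2], [1.4, 1.5, 1.0, 1.8, 1.3]),
    "rater_b": ([0.4, 0.6, 0.0, 0.8, 0.2], [0.4, 0.6, 0.1, 0.8, 0.2]),
    "rater_c": ([0.9, 1.1, 0.5, 1.3, 0.7], [0.9, 1.1, 0.5, 1.2, 0.7]),
}


def self_disagreement(first, second):
    """Mean absolute difference between one rater's two passes."""
    return mean(abs(a - b) for a, b in zip(first, second))


def overall_level(first, second):
    """Average score a rater gives across both passes."""
    return mean(first + second)


within = {name: self_disagreement(*passes) for name, passes in ratings.items()}
levels = {name: overall_level(*passes) for name, passes in ratings.items()}
# Gap between the highest-scoring and lowest-scoring rater.
between = max(levels.values()) - min(levels.values())

for name in ratings:
    print(f"{name}: self-disagreement {within[name]:.2f}, level {levels[name]:.2f}")
print(f"gap between raters: {between:.2f}")
```

In this toy data each rater barely disagrees with themselves, yet the gap between raters is many times larger, which is the kind of systematic offset a single standardised scorer removes.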

The stakes here aren’t trivial. As Sturman puts it: “If someone always assesses that an animal is not in pain, animals will suffer needlessly. And if someone always gives overly high scores, there is a risk that experiments are abandoned unnecessarily.” Uniform assessment, across labs and across continents, is the only way to guarantee that the pain relief an animal actually needs is the pain relief it gets.

Beyond the grimace scoring, GrimACE does something the original Mouse Grimace Scale cannot: it watches the whole body. A second camera mounted above the arena tracks key points across the mouse’s skeleton in real time, feeding into a behavioural analysis pipeline called BehaviorFlow. This proved useful in unexpected ways. The opioid group, the mice receiving buprenorphine alongside meloxicam, moved significantly more than the anti-inflammatory-only group at the four-hour post-surgery mark. That’s a known side effect of acute opioid treatment in rodents, hyperactivity rather than sedation, but it’s the kind of thing that slips past routine cage-side checks. The buprenorphine group also lost more body weight in the days following surgery, though the drop stayed well below the threshold that would require intervention. Taken together, the data suggested that adding opioids to an NSAID regime provides very little additional pain relief after craniotomy while introducing genuine recovery-phase costs. Meloxicam alone, it seems, is broadly adequate. That’s a clinically useful conclusion, and one that emerged directly from the behavioural depth the system can provide.

The hardware, which Sturman compares to a passport photo booth (“these machines are always built the same: a stool that is positioned a fixed distance from the camera, a white background and a dark curtain”), is deliberately low-tech and standardisable. Aluminium frame, 3D-printed components, two Basler cameras, an infrared lamp, a laptop with at least an NVIDIA 4070 GPU. The acrylic arena slots into a fixed position within the frame, so every recording is geometrically identical regardless of who assembled it. “What was missing was a complete, standardised, end-to-end system,” says Sturman. The GrimACE is being released as an open-source kit; all hardware files, software, and training data are available via GitHub and Zenodo. Sturman’s aim is for the kit to be assembled and used in a standardised way by as many researchers as possible, so that the resulting data are actually comparable across institutions. The more labs contribute recordings, the better the underlying machine learning models become. “The more people that use GrimACE, the less bias there will be.”

There are limits. Current models have been validated only on male C57BL/6 mice, the workhorse strain of neuroscience, and mostly at moderate pain levels. White mice against a white background might need higher-contrast materials. The system doesn’t yet live inside the home cage, which would be the less intrusive approach, though the researchers see that as a future direction. And the algorithm’s lack of hard-coded facial biases means it can, in principle, pick up non-MGS features like head implants or wet fur around the face; more training data would sharpen its ability to ignore them.

Since launch, GrimACE has already drawn enquiries from labs in the US and UK. ETH Zurich has installed a unit in its phenomics centre. Whether the technology eventually becomes a commercial spin-off remains undecided. “Our primary concern is to improve animal welfare,” Sturman says. What seems clear is that standardised, objective pain monitoring at scale could shift the baseline for how labs operate globally, not by replacing human judgement entirely, but by making its most damaging inconsistencies visible.

The mouse in the dark box, it turns out, may soon have better advocates than any of us. Its grimace, scored by a network trained on thousands of expert-labelled frames, is harder to misread than a face observed under the fluorescent lights of a research facility, by a tired human, through the bars of a cage. That’s perhaps a slightly uncomfortable thought. But probably a necessary one.

Sturman et al., Lab Animal (2026). DOI: 10.1038/s41684-026-01695-9

Frequently Asked Questions

What is the Mouse Grimace Scale and why has it been hard to use reliably?

The Mouse Grimace Scale is a method for detecting pain in mice by rating five facial features (orbital tightening, nose bulge, cheek bulge, ear position, and whisker direction), each on a zero-to-two scale. Researchers score these features by comparing a live or photographed mouse against reference images. The problem is that the method requires extensive training, takes considerable time, and produces scores that vary significantly between different raters even when each individual is consistent with themselves. GrimACE was designed specifically to address these limitations by standardising both the image acquisition and the scoring process.
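To make the arithmetic concrete, here is a small sketch of how five per-feature ratings might be combined into one number. It assumes the overall score is the plain average of the five features, which is a common convention for grimace scales but is not spelled out in this article. The key names are labels chosen here, not identifiers from the GrimACE software.

```python
# The five facial features, each rated 0 (absent), 1 (moderate) or 2 (obvious).
FEATURES = (
    "orbital_tightening",
    "nose_bulge",
    "cheek_bulge",
    "ear_position",
    "whisker_direction",
)
VALID_RATINGS = {0, 1, 2}


def grimace_score(feature_ratings):
    """Average the five feature ratings into one score between 0 and 2.

    Assumption: the composite is the unweighted mean of the five features.
    """
    missing = [f for f in FEATURES if f not in feature_ratings]
    if missing:
        raise ValueError(f"missing ratings for: {', '.join(missing)}")
    for feature in FEATURES:
        if feature_ratings[feature] not in VALID_RATINGS:
            raise ValueError(f"{feature} must be rated 0, 1 or 2")
    return sum(feature_ratings[f] for f in FEATURES) / len(FEATURES)


# Invented example: clear eye narrowing, mild nose and ear changes.
example = {
    "orbital_tightening": 2,
    "nose_bulge": 1,
    "cheek_bulge": 0,
    "ear_position": 1,
    "whisker_direction": 0,
}
print(grimace_score(example))
```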

How does GrimACE actually detect pain, and how accurate is it?

GrimACE uses two cameras inside a dark, infrared-lit box to capture front-view and top-view footage of a mouse without stressing it. Three neural networks then process the front-view footage: one selects high-quality frames, one locates the mouse’s face, and a third scores each of the five facial features. In validation studies, the automated scores matched those of an experienced expert rater with a Pearson correlation of 0.87, which is considered state-of-the-art for this type of assessment. The system also tracks whole-body movement and behaviour simultaneously.
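The three-stage flow described above can be sketched as a simple chain: filter frames, find the face, score the features. Everything in the block below is hypothetical. The `Frame` type, the function names, and the toy stand-ins for the three networks are invented for illustration and do not reflect the actual GrimACE code.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    index: int
    sharpness: float  # toy stand-in for real image data


def run_pipeline(frames, is_usable, locate_face, score_features):
    """Pass each frame through the three stages, dropping it if a stage rejects it."""
    results = []
    for frame in frames:
        if not is_usable(frame):        # stage 1: frame quality selection
            continue
        face_box = locate_face(frame)   # stage 2: face localisation
        if face_box is None:
            continue
        # stage 3: per-feature scoring on the located face
        results.append((frame.index, score_features(frame, face_box)))
    return results


# Toy stand-ins for the three trained networks (assumptions, not real models).
def toy_is_usable(frame):
    return frame.sharpness >= 0.5


def toy_locate_face(frame):
    # Pretend the face is only visible in even-numbered frames.
    return (0, 0, 64, 64) if frame.index % 2 == 0 else None


def toy_score_features(frame, face_box):
    return {"orbital_tightening": 1, "ear_position": 0}


frames = [Frame(0, 0.9), Frame(1, 0.9), Frame(2, 0.1), Frame(4, 0.8)]
scored = run_pipeline(frames, toy_is_usable, toy_locate_face, toy_score_features)
print([index for index, _ in scored])
```

Keeping the stages as separate, swappable functions mirrors the description of three distinct networks: a blurry or face-less frame is simply dropped before it reaches the scorer.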

Is adding opioids to standard painkillers actually better for mice after brain surgery?

The GrimACE data suggest probably not, at least not for the kind of moderate pain typical of craniotomy. Mice receiving both meloxicam and buprenorphine showed no better facial grimace scores than those receiving meloxicam alone, while showing pronounced hyperactivity and modest weight loss in the days after surgery, both consistent with known opioid side effects. This aligns with recent work from other groups suggesting that NSAID monotherapy is broadly adequate for managing post-craniotomy pain, and that opioids may impose recovery costs without clear benefit.

Could GrimACE be used for animals other than mice, or for chronic pain studies?

The current system has been validated only for laboratory mice, specifically male mice of the C57BL/6 strain, and primarily in scenarios involving moderate acute pain. The researchers note that grimace scales have been developed for other species, including rats and macaques, so in principle the approach could be extended with sufficient training data. For chronic pain models, the ability to detect subtle behavioural signatures beyond facial expressions, such as repetitive movements or postural changes, may prove particularly useful and is something the team sees as a future direction.

Is GrimACE freely available for other labs to use?

Yes. All hardware designs, software, and training data have been released as an open-source kit via GitHub and Zenodo, with the intention that any lab can build and use the system in a standardised way. The ETH Zurich 3R Hub can also assist with parts sourcing, assembly, and calibration. Because the machine learning models improve with more training data, wider adoption directly benefits the accuracy of every lab’s assessments.
