The brain scan takes three seconds to read. Not three minutes, not three hours: three seconds on a single graphics processing unit. Prima, an AI system developed at the University of Michigan, examines the MRI study and delivers its verdict: 52 different neurological conditions checked, diagnostic probabilities calculated, priority level assigned. In testing, the system detected neurological conditions with up to 97.5 percent accuracy and predicted how urgently patients required treatment.

For context: the average turnaround time for brain MRI results at the same institution climbed from roughly 18 hours in 2012 to over two days in 2024. Patients in sparsely populated rural areas face waits two to five times longer than urban patients. The global demand for MRI rises while physician workload intensifies and diagnostic errors increase. Approximately 100 million MRI studies happen annually worldwide, with 20 to 30 percent focused on neurological diseases, demand that surpasses available neuroradiology services.

Todd Hollon, a neurosurgeon at University of Michigan Health, led the research team that created Prima. “As the global demand for MRI rises and places significant strain on our physicians and health systems, our AI model has potential to reduce burden by improving diagnosis and treatment with fast, accurate information,” Hollon said. The results appear in Nature Biomedical Engineering.

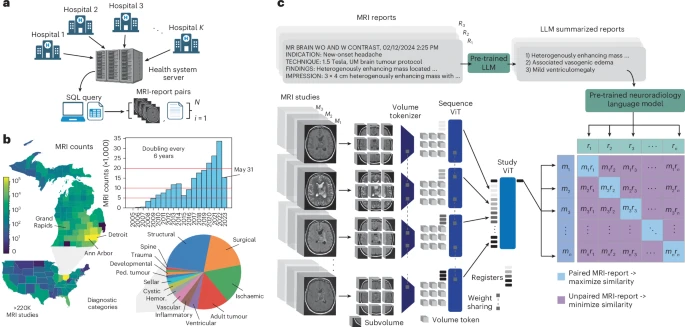

Prima differs from previous neuroimaging AI in scope and training approach. Past models relied on manually curated subsets of MRI data for specific tasks, such as detecting lesions or predicting dementia risk. Prima learned from everything: 221,147 MRI studies representing over two decades of clinical practice, encompassing 5.6 million MRI sequences from more than 170,000 patients. The training set included every MRI taken at University of Michigan Health since radiology digitization began, regardless of protocol, sequence type, or image quality.

The system processes full clinical context. Radiologists don’t interpret scans in isolation; they consider patient symptoms, medical history, the reason imaging was ordered. Prima does likewise, combining MRI images with clinical information embedded from electronic medical records. “Prima works like a radiologist by integrating information regarding the patient’s medical history and imaging data to produce a comprehensive understanding of their health,” said Samir Harake, a data scientist in Hollon’s lab. “This enables better performance across a broad range of prediction tasks.” With clinical context included, Prima’s mean diagnostic area under the curve reached 92.0 percent, compared to 90.1 percent using MRI data alone.
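For readers unfamiliar with the metric, area under the curve (AUC) is the probability that a randomly chosen positive case receives a higher score than a randomly chosen negative one. The sketch below shows how a mean AUC across several diagnoses could be computed. It is an illustration only, not the study's evaluation code: the function names, the diagnosis labels, and the toy scores are all made up for the example.

```python
def roc_auc(labels, scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.

    Ties between a positive and a negative score count as half a win.
    """
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    if not positives or not negatives:
        raise ValueError("need at least one positive and one negative case")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


def mean_auc(per_diagnosis):
    """Average the per-diagnosis AUCs (an unweighted mean is assumed here)."""
    aucs = [roc_auc(labels, scores) for labels, scores in per_diagnosis.values()]
    return sum(aucs) / len(aucs)


# Hypothetical toy data: (true labels, predicted probabilities) per diagnosis.
toy = {
    "diagnosis_a": ([1, 0, 1, 0], [0.9, 0.2, 0.6, 0.7]),
    "diagnosis_b": ([0, 0, 1, 1], [0.1, 0.3, 0.8, 0.9]),
}

print(roc_auc(*toy["diagnosis_a"]))  # 0.75
print(mean_auc(toy))                 # 0.875
```

On this scale, the reported gain from adding clinical context (90.1 to 92.0 percent) means the model ranks a true case above a non-case slightly more often.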

The research team tested Prima in a year-long health system-wide study that included 29,431 MRI studies. Every patient who received a brain scan at University of Michigan Health between June 2023 and May 2024 was included, without exclusions. Across 52 radiologic diagnoses spanning major neurological disorders, Prima outperformed other state-of-the-art general and medical AI models. Detection accuracy ranged from 78.3 percent for arachnoid cysts to 99.7 percent for high-grade gliomas.

Prima assigns priority scores to studies, helping radiologists triage their worklists. The system’s normalized priority scores correlated strongly with three-tier ordinal priority scores, yielding a correlation coefficient of 0.69. It also recommends appropriate specialist referrals based on MRI features; patients with newly diagnosed multiple sclerosis get flagged for neuroimmunology, posterior fossa tumours for paediatric neurosurgery. Prima achieved average neurosurgery referral accuracy of 85.1 percent and neurology referral accuracy of 89.1 percent.

The system explains its reasoning. Using a technique called LIME (Local Interpretable Model-Agnostic Explanations), researchers can identify which parts of an MRI scan Prima focused on for each diagnosis. Testing on the BraTS dataset with expert-annotated tumour regions showed 98 percent accuracy: Prima’s top-three selected volume tokens fell within actual tumour boundaries. For diffuse low-grade gliomas, it highlighted FLAIR-hyperintense regions indicating tumour infiltration. For glioblastomas, contrast-enhancing regions. The patterns match what radiologists look for.
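The localisation check described above can be sketched in a few lines. The sketch assumes each scan is split into volume tokens, that an explanation method such as LIME has already produced one importance score per token, and that a case counts as a hit only when all three top-scoring tokens fall inside the annotated tumour. The article does not spell out the exact scoring rule, so that last assumption, along with every name and number below, is hypothetical.

```python
def top_k_tokens(importance, k=3):
    """Indices of the k tokens with the highest importance scores."""
    order = sorted(range(len(importance)), key=lambda i: importance[i], reverse=True)
    return order[:k]


def top_k_inside_mask(importance, tumour_tokens, k=3):
    """True if every top-k token index lies in the annotated tumour set."""
    return all(i in tumour_tokens for i in top_k_tokens(importance, k))


def localisation_rate(cases, k=3):
    """Fraction of cases whose top-k tokens all fall inside the tumour."""
    hits = sum(top_k_inside_mask(scores, mask, k) for scores, mask in cases)
    return hits / len(cases)


# Hypothetical toy cases: (per-token importance, set of tumour token indices).
cases = [
    ([0.1, 0.9, 0.8, 0.7, 0.0], {1, 2, 3}),  # top three are 1, 2, 3: a hit
    ([0.9, 0.1, 0.8, 0.7, 0.0], {1, 2, 3}),  # token 0 is outside: a miss
]

print(localisation_rate(cases))  # 0.5
```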

Clinical vignettes from the study demonstrate Prima’s capabilities over time. One patient had a diffuse astrocytoma that remained stable after subtotal resection but showed new contrast enhancement seven years later; biopsy confirmed malignant transformation. Prima’s prediction logit for high-grade glioma increased more than tenfold. Another patient presented with a rim-enhancing brain lesion; after surgical drainage the abscess resolved, leaving encephalomalacia. Prima’s brain abscess prediction logit dropped more than tenfold within a year. A paediatric patient with myelomeningocele and shunted hydrocephalus developed progressive altered mental status; Prima detected worsening ventriculomegaly, with prediction logits increasing more than fivefold over six months.

Algorithmic fairness testing revealed minimal performance disparities across sensitive groups. The researchers examined population density, geographic region, scan scheduling, race, sex, age, and insurance status. Across these groups, Prima’s true positive rate (the percentage of actual cases correctly identified) remained consistent. This matters because medical AI systems frequently encode and amplify training data biases, showing worse performance for women, minorities, and lower socioeconomic groups. Prima’s turnaround time of about three seconds could also help reduce the systemic delays that disproportionately affect rural and underserved populations.
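A true positive rate comparison of this kind is simple to express in code. The following is a minimal sketch, not the study's fairness analysis: it assumes binary labels and predictions, and the group names and records are invented for the example.

```python
from collections import defaultdict


def tpr_by_group(records):
    """True positive rate per group.

    records: iterable of (group, true_label, predicted_label) with 0/1 labels.
    Only cases whose true label is 1 contribute to the rate.
    """
    detected = defaultdict(int)
    missed = defaultdict(int)
    for group, truth, predicted in records:
        if truth == 1:
            if predicted == 1:
                detected[group] += 1
            else:
                missed[group] += 1
    groups = set(detected) | set(missed)
    return {g: detected[g] / (detected[g] + missed[g]) for g in groups}


def max_tpr_gap(rates):
    """Largest difference in true positive rate between any two groups."""
    return max(rates.values()) - min(rates.values())


# Hypothetical records: (group, true label, model prediction).
records = [
    ("rural", 1, 1), ("rural", 1, 1), ("rural", 1, 1), ("rural", 1, 0),
    ("urban", 1, 1), ("urban", 1, 1), ("urban", 1, 1), ("urban", 1, 1),
    ("urban", 0, 1),  # a false positive, ignored by the true positive rate
]

rates = tpr_by_group(records)
print(rates["rural"], rates["urban"])  # 0.75 1.0
print(max_tpr_gap(rates))              # 0.25
```

A small gap across groups is what "minimal performance disparities" would look like under this measure; it says nothing about false positives, which would need a separate check.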

The technology raises questions about deployment and access. Prima will be released publicly under an MIT licence for investigational use, but raw patient imaging data cannot be shared due to privacy regulations. Training required massive computational resources. Performance improved consistently across 50 ExaFLOPs of computation, with no plateau observed. Even running the trained model requires at least one GPU. Whether hospitals in low-resource settings or low- and middle-income countries can access such systems remains unclear.

Vikas Gulani, chair of radiology at University of Michigan Health, said his teams “have collaborated to develop a cutting-edge solution to this problem with tremendous, scalable potential.” Hollon calls Prima “ChatGPT for medical imaging”, a general-purpose assistant rather than a narrow diagnostic tool.

The architectural framework could extend to other imaging modalities: mammograms, chest X-rays, ultrasounds. Each would require similar massive training datasets, but the core approach would remain the same. “Like the way AI tools can help draft an email or provide recommendations, Prima aims to be a co-pilot for interpreting medical imaging studies,” Hollon said.

Study link: https://www.nature.com/articles/s41551-025-01608-0
