Ten experienced hematologists sat down to examine blood cell images and determine which were real and which were AI-generated fakes. They got it right 52.3% of the time. A coin flip would have done about as well.

That result, surprising even to the researchers who conducted the test, reveals something unsettling about a new artificial intelligence system called CytoDiffusion: it has learned to see blood cells the way experts do, perhaps better. And unlike many medical AI tools, which can misdiagnose with full confidence when they encounter something unfamiliar, this one knows when it’s guessing.

The system, developed by researchers from the University of Cambridge, University College London, and Queen Mary University of London, could reshape how diseases like leukemia get diagnosed. A single abnormal blast cell in a blood smear can signal acute leukemia, but finding it among thousands of normal cells requires expertise that takes years to develop. Even then, different doctors disagree on difficult cases.

“Humans can’t look at all the cells in a smear, it’s just not possible,” said Simon Deltadahl from Cambridge’s Department of Applied Mathematics and Theoretical Physics. “Our model can automate that process, triage the routine cases, and highlight anything unusual for human review.”

CytoDiffusion learned by studying over half a million microscope images from Addenbrooke’s Hospital in Cambridge, the largest dataset of its kind. But unlike typical AI classifiers, which learn only the features that separate one category from another, this one uses generative modeling to capture the full range of what healthy blood cells can look like. That broader understanding helps it flag the dangerous outliers.

The Uncertainty Advantage

What sets the system apart isn’t just accuracy. It’s the capacity to quantify its own doubt.

The researchers tested this using psychometric modeling, techniques borrowed from studying human perception. They found CytoDiffusion’s confidence scores aligned almost perfectly with the actual difficulty of classifying each image, behaving like an ideal observer detecting signal in noise. Human experts showed more erratic patterns, sometimes expressing high confidence on cases they got wrong.
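The study's own analysis relied on psychometric modeling, which the sketch below does not reproduce. A simpler and widely used check of whether a model's confidence tracks its correctness is a reliability table: sort predictions into confidence bins and compare each bin's average confidence with how often those predictions were actually right. The expected calibration error (ECE) summarizes the gap as a single number; a well-calibrated model scores near zero, while one that claims 90% confidence but is right only half the time scores high. This is a swapped-in, standard calibration measure rather than the researchers' method, and the function names, the ten-bin default, and the example predictions are all illustrative.

```python
import numpy as np

def reliability_table(confidence, correct, n_bins=10):
    """Return (bin index, count, mean confidence, accuracy) for each non-empty bin."""
    confidence = np.asarray(confidence, dtype=float)
    correct = np.asarray(correct, dtype=float)
    # Equal-width bins on [0, 1]; a confidence of exactly 1.0 goes in the top bin.
    bins = np.minimum((confidence * n_bins).astype(int), n_bins - 1)
    rows = []
    for b in range(n_bins):
        in_bin = bins == b
        if in_bin.any():
            rows.append((b, int(in_bin.sum()),
                         float(confidence[in_bin].mean()),
                         float(correct[in_bin].mean())))
    return rows

def expected_calibration_error(confidence, correct, n_bins=10):
    """Count-weighted average gap between stated confidence and observed accuracy."""
    n = len(confidence)
    return sum(count / n * abs(conf - acc)
               for _, count, conf, acc in reliability_table(confidence, correct, n_bins))

# Hypothetical predictions: 0.8 confidence, right 8 times out of 10 (calibrated).
print(expected_calibration_error([0.8] * 10, [1] * 8 + [0] * 2))
# Hypothetical predictions: 0.9 confidence, right only 5 times out of 10 (overconfident).
print(expected_calibration_error([0.9] * 10, [1] * 5 + [0] * 5))
```

An observer that "would never say it was certain and then be wrong" shows no high-confidence bins whose accuracy falls well below their stated confidence.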

“When we tested its accuracy, the system was slightly better than humans,” said Deltadahl. “But where it really stood out was in knowing when it was uncertain. Our model would never say it was certain and then be wrong, but that is something that humans sometimes do.”

This metacognitive awareness, knowing what you don’t know, matters enormously in clinical practice. A diagnostic tool that confidently misclassifies a leukemia patient’s blast cells as normal lymphocytes doesn’t just fail. It actively misleads. CytoDiffusion flags uncertain cases for human review instead.

Professor Parashkev Nachev from UCL framed the implications broadly. “This ‘metacognitive’ awareness, knowing what one does not know, is critical to clinical decision-making, and here we show machines may be better at it than we are.”

The system proved robust across wildly different imaging conditions. Models trained on one hospital’s images maintained high accuracy when tested on blood smears from different microscopes, cameras, and staining techniques. That versatility matters because hospitals use varied equipment, and an AI tool that only works with one manufacturer’s setup has limited practical value.

Finding Needles, Learning From Scraps

When detecting abnormal cells linked to leukemia, CytoDiffusion achieved a sensitivity of 90.5% with a specificity of 96.2%, far surpassing the existing systems it was compared against. The difference stems from its generative approach: by modeling the complete distribution of healthy cell appearances, it can flag anything that falls outside that distribution.
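The principle of modeling what normal looks like and flagging whatever falls outside it can be illustrated with a far simpler density model than a diffusion network. The sketch below is not CytoDiffusion's method: it fits a multivariate Gaussian to hypothetical feature vectors of normal cells, scores new cells by squared Mahalanobis distance, and flags any cell scoring above the 99th percentile seen on the normal training data. It then computes sensitivity (the share of truly abnormal cells that get flagged) and specificity (the share of normal cells left unflagged), the two figures quoted for the study. The features, the Gaussian model, the 99th-percentile cutoff, and the synthetic data are all assumptions for illustration.

```python
import numpy as np

def fit_gaussian(features):
    """Fit a multivariate Gaussian to feature vectors of normal cells (rows = cells)."""
    mean = features.mean(axis=0)
    # A tiny ridge keeps the covariance invertible.
    cov = np.cov(features, rowvar=False) + 1e-6 * np.eye(features.shape[1])
    return mean, np.linalg.inv(cov)

def anomaly_score(x, mean, cov_inv):
    """Squared Mahalanobis distance per row: larger means less like normal cells."""
    d = x - mean
    return np.einsum("ij,jk,ik->i", d, cov_inv, d)

def sensitivity_specificity(is_abnormal, flagged):
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP)."""
    is_abnormal = np.asarray(is_abnormal, dtype=bool)
    flagged = np.asarray(flagged, dtype=bool)
    tp = np.sum(is_abnormal & flagged)
    fn = np.sum(is_abnormal & ~flagged)
    tn = np.sum(~is_abnormal & ~flagged)
    fp = np.sum(~is_abnormal & flagged)
    return tp / (tp + fn), tn / (tn + fp)

# Synthetic 4-dimensional "features": normal cells near 0, abnormal cells shifted.
rng = np.random.default_rng(0)
normal_train = rng.normal(0.0, 1.0, size=(500, 4))
mean, cov_inv = fit_gaussian(normal_train)
threshold = np.percentile(anomaly_score(normal_train, mean, cov_inv), 99)

test_cells = np.vstack([rng.normal(0.0, 1.0, size=(200, 4)),   # normal
                        rng.normal(4.0, 1.0, size=(20, 4))])   # abnormal
labels = np.r_[np.zeros(200, dtype=bool), np.ones(20, dtype=bool)]
flagged = anomaly_score(test_cells, mean, cov_inv) > threshold
sens, spec = sensitivity_specificity(labels, flagged)
print(f"sensitivity={sens:.3f} specificity={spec:.3f}")
```

Raising the cutoff trades sensitivity for specificity, which is why the two numbers are reported as a pair.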

Perhaps more impressive was its data efficiency. Medical datasets are notoriously small and expensive to create because expert labeling takes time. With just 10 to 50 training images per cell type, a regime where conventional neural networks typically struggle, CytoDiffusion consistently outperformed discriminative models. That points to potential for identifying rare cell subtypes that appear infrequently in practice.

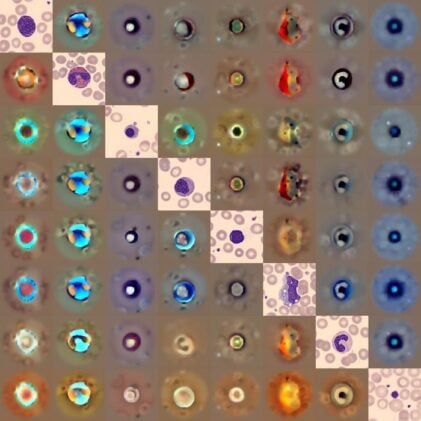

The tool also generates counterfactual heat maps, visual explanations showing which parts of a cell image would need to change for it to be classified differently. When analyzing an eosinophil, for instance, the model highlighted granularity patterns that distinguish it from a neutrophil, suggesting that its decisions rest on legitimate morphological features rather than spurious correlations or imaging artifacts.
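Once a counterfactual exists, meaning a version of the cell image edited just enough for the model to assign a different class, the heat map itself amounts to a per-pixel difference. Producing the counterfactual is the hard part and needs the trained generative model, which this sketch does not attempt; it assumes both images are already available as same-sized RGB arrays. The function name and the scaling to [0, 1] are illustrative choices, not details from the study.

```python
import numpy as np

def counterfactual_heatmap(image, counterfactual):
    """Per-pixel magnitude of change between an image and its counterfactual.

    Both inputs are H x W x C arrays; absolute differences are summed over
    colour channels and scaled so the largest change equals 1.0.
    """
    diff = np.abs(counterfactual.astype(float) - image.astype(float)).sum(axis=-1)
    peak = diff.max()
    return diff / peak if peak > 0 else diff

# Toy example: a counterfactual that alters only a small patch of an 8x8 image,
# standing in for, say, a change in granule texture.
image = np.zeros((8, 8, 3))
counterfactual = image.copy()
counterfactual[2:4, 5:7, :] = 0.5
heat = counterfactual_heatmap(image, counterfactual)
print(np.argwhere(heat == 1.0))  # pixel coordinates of the largest change
```

If the bright regions land on granules or nuclear shape rather than on background or staining artifacts, that is the kind of evidence the researchers describe.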

Dr. Suthesh Sivapalaratnam from Queen Mary University of London, who worked as a junior hematology doctor before turning to AI research, recalled facing the problem firsthand while reviewing blood smears. “As I was analyzing them in the late hours, I became convinced AI would do a better job than me.”

The researchers are releasing their entire dataset, more than half a million peripheral blood smear images, as a public resource. In a field where proprietary data typically stays locked behind institutional walls, the move could accelerate development of competing and complementary tools.

Computational cost remains a constraint. CytoDiffusion takes an average of 1.8 seconds per image, and that time grows with the number of cell classes it must consider. That’s manageable for diagnostic screening but potentially limiting for high-throughput research applications. The researchers suggest that code optimization, model distillation, and advances in hardware should ease this over time.
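The article does not spell out how CytoDiffusion turns its generative model into a classifier, but cost that grows with the number of classes is what one would expect from a common approach for diffusion models: add noise to the image, ask a class-conditional denoiser to predict that noise under each candidate class, and pick the class whose predictions are most accurate. That takes roughly (number of classes) × (number of noise samples) network evaluations per image. The sketch below assumes this scheme. To stay runnable without a trained network, it replaces the denoiser with the exact noise predictor for a hypothetical class whose images are Gaussian around a prototype; `gaussian_denoiser`, `diffusion_classify`, and every parameter value are illustrative.

```python
import numpy as np

def gaussian_denoiser(x_t, abar, mu, s2):
    """Noise prediction E[eps | x_t] if clean images of this class were N(mu, s2 * I).

    Stands in for a trained class-conditional network eps_theta(x_t, t, c);
    abar is the noise schedule's cumulative alpha ("alpha-bar") at the sampled step.
    """
    a, b = np.sqrt(abar), np.sqrt(1.0 - abar)
    x0_hat = mu + (a * s2 / (a * a * s2 + b * b)) * (x_t - a * mu)  # posterior mean of clean image
    return (x_t - a * x0_hat) / b

def diffusion_classify(x, prototypes, s2=0.1, n_samples=32, seed=0):
    """Pick the class whose conditional denoiser best predicts the added noise."""
    rng = np.random.default_rng(seed)
    abars = rng.uniform(0.05, 0.95, size=n_samples)       # random noise levels
    noises = rng.standard_normal((n_samples,) + x.shape)  # same draws reused for every class
    errors = np.zeros(len(prototypes))
    calls = 0
    for c, mu in enumerate(prototypes):
        for abar, eps in zip(abars, noises):
            x_t = np.sqrt(abar) * x + np.sqrt(1.0 - abar) * eps  # forward (noising) step
            eps_hat = gaussian_denoiser(x_t, abar, mu, s2)
            errors[c] += np.mean((eps - eps_hat) ** 2)
            calls += 1
    errors /= n_samples
    return int(np.argmin(errors)), errors, calls

# Two hypothetical 4x4 "cell types": dark and bright prototypes.
prototypes = [np.zeros((4, 4)), np.ones((4, 4))]
label, errors, calls = diffusion_classify(np.full((4, 4), 0.9), prototypes)
print(label, calls)  # calls = 2 classes x 32 noise samples
```

Because every extra class adds another full set of denoiser evaluations, per-image cost rises linearly with the number of classes in this scheme. At the reported 1.8-second average, 1,000 cell images would take about 30 minutes of sequential compute, which is why the optimization and distillation routes the researchers mention matter for high-throughput work.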

The system isn’t meant to replace trained clinicians. It’s designed as a triage tool: handle routine cases automatically, flag ambiguous or abnormal ones for human expertise. That division of labor could improve efficiency in hospital labs without sacrificing diagnostic accuracy, though real-world testing across diverse patient populations remains necessary.
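The triage split described above can be sketched as a simple routing rule applied to a classifier's output: send a cell to automatic handling only when the model is confident and the predicted class is not one that always warrants a specialist's eyes. The 0.95 threshold, the class names, and the rule of always escalating suspected blasts are hypothetical choices for illustration; a real deployment would set them from validation data and clinical policy.

```python
from dataclasses import dataclass

import numpy as np

@dataclass
class TriageDecision:
    label: str
    confidence: float
    route: str  # "auto" or "human_review"

def triage(probs, class_names, threshold=0.95, always_review=frozenset({"blast"})):
    """Route one cell based on the classifier's probabilities over class_names."""
    probs = np.asarray(probs, dtype=float)
    top = int(np.argmax(probs))
    label, confidence = class_names[top], float(probs[top])
    # Escalate when the model is unsure, or when the predicted class is high-stakes.
    needs_review = confidence < threshold or label in always_review
    return TriageDecision(label, confidence, "human_review" if needs_review else "auto")

classes = ["neutrophil", "lymphocyte", "blast"]
for probs in ([0.97, 0.02, 0.01], [0.60, 0.35, 0.05], [0.01, 0.01, 0.98]):
    print(triage(probs, classes))
```

Lowering the threshold sends more routine cells through automatically; raising it shifts work back to humans. Where to set it is a clinical decision as much as a technical one.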

One unexplored frontier involves using the model’s learned representations to identify novel cell subtypes with clinical significance. CytoDiffusion has built a rich internal map of blood cell morphology that goes beyond the categories human experts currently use. Whether that map contains medically meaningful distinctions we’ve missed is an open question.

Nature Machine Intelligence: 10.1038/s42256-025-01122-7
